the Creative Commons Attribution 4.0 License.

the Creative Commons Attribution 4.0 License.

Reproducible and relocatable regional ocean modelling: fundamentals and practices

James Harle

Jason Holt

Anna Katavouta

Dale Partridge

Jenny Jardine

Sarah Wakelin

Julia Rulent

Anthony Wise

Katherine Hutchinson

David Byrne

Diego Bruciaferri

Enda O'Dea

Michela De Dominicis

Pierre Mathiot

Andrew Coward

Andrew Yool

Julien Palmiéri

Gennadi Lessin

Claudia Gabriela Mayorga-Adame

Valérie Le Guennec

Alex Arnold

Clément Rousset

In response to an increasing demand for bespoke or tailored regional ocean modelling configurations, we outline fundamental principles and practices that can expedite the process to generate new configurations. The paper develops the principle of reproducibility and advocates adherence by presenting benefits to the community and user. The elements of this principle are reproducible workflows and standardised assessment, with additional effort over existing working practices being balanced against the added value generated. The paper then decomposes the complex build process, for a new regional ocean configuration, into stages and presents guidance, advice and insight for each component. This advice is compiled from across the NEMO (Nucleus for European Modelling of the Ocean) user community and sets out principles and practises that encompass regional ocean modelling with any model. With detailed and region-specific worked examples in Sects. 3 and 4, the linked companion repositories and DOIs all target NEMOv4. The aim of this review and perspective paper is to broaden the user community skill base and to accelerate development of new configurations in order to increase the time available for exploiting the configurations.

- Article

(1013 KB) - Full-text XML

- BibTeX

- EndNote

There is internationally an increasing demand for simulations of the marine environment to deepen our understanding of the marine system and its sensitivities in a changing climate. High-profile issues include marine hazards from storms (Harley et al., 2022; Masselink et al., 2016), sea level rise (Fox-Kemper et al., 2021; Ponte et al., 2019), management of blue carbon resources and understanding the potential marine impacts of climate change mitigation interventions, such as marine offshore renewable energy (Dorrell et al., 2022) and land use change (Felgate et al., 2021).

While global ocean modelling products and research activities are increasing in resolution and sophistication, they are still a long way from the scale and process representation required to deliver accurate information on the coastal ocean. There are a number of reasons why it is advantageous to configure bespoke regional models: though data catalogues like the Copernicus Marine Service (CMS: https://marine.copernicus.eu, last access: 20 February 2023, and others) are rich resources for regional and global marine data, these cannot always satisfy all user requirements. Motivations need not always be about spatial resolution. For example, missing processes can be an important driver for building a new configuration (perhaps addressing a lack of integrated physics and biogeochemistry or a lack of tidal processes in the “off-the-shelf” catalogue products). Alternatively, bespoke model outputs might be required (such as high-frequency output for specific sub-regions or new metrics). So, the key advantages of regional over global configurations include benefits associated with resolution enhancements, design flexibility and computational efficiency (to contain only the region and processes that matter and to not worry about degrading the solution in other regions). On the other hand, key disadvantages include the need for lateral boundary conditions (which can be hard to obtain and a potential source of error), the human resources required to configure and maintain multiple regional domains, and the lack of a common experience across a global community of coastal ocean modellers.

The configuration of a regional ocean model has traditionally been a one-off event taking many months and requiring many, often subtle, expert decisions. Consequently, descriptions of the set-up (e.g. in the literature) are relatively limited or hard to reproduce in their entirety. The need to configure multiple regional models in many different seas around the world has led us to develop a systematic workflow where NEMO (the Nucleus for European Modelling of the Ocean, Madec and Team, 2022, http://www.nemo-ocean.eu, last access: 20 February 2023) regional ocean configurations could be more efficiently built, deployed and reproduced. This reproducible workflow is not intended to be the sole authority on regional configuration set-ups or to provide a turn-key or black-box solution; instead, it is designed to provide a set of guidelines for modellers to follow in order to capitalise on the usability and interoperability of the resulting simulations. Indeed, other modelling systems, such as the Massachusetts Institute of Technology general circulation model, MITgcm (Marshall et al., 1997), the Regional Ocean Modeling System, ROMS (Shchepetkin and McWilliams, 2005) and the Finite Volume Community Ocean Model, FVCOM (Chen et al., 2006), can also be readily configured for new regional applications. All have their own strengths which are largely set according to the model system's development history and the user's familiarity with the system. For example, ROMS was specifically designed as a regional ocean model, and MITgcm has been a model of choice for numerous idealised process studies. On the other hand, FVCOM's unstructured mesh has made it a popular research code choice for multi-scale coastal hydrodynamics. The concepts presented here are intended to be broadly applicable to any modelling system, though the worked examples are implemented within NEMO.

NEMO is an ocean modelling framework underpinned by a consortium of five large European research, climate and operational centres. It is well supported as an international community modelling code and consequently is employed as the ocean component for 9 of the 35 widely used Coupled Model Intercomparison Project Phase 6 (CMIP6) models (Eyring et al., 2016, https://pcmdi.llnl.gov/CMIP6/, last access: 27 July 2022) and is used in the CMS catalogue of freely available marine data products. As a regional model it benefits substantially from these investments of effort. However, its origins in large research and operational centres (where teams focus on specific configurations) has led, quite naturally, to barriers to NEMO being more widely used in regional applications.

In our experience, each research question addressed with a regional model configuration requires a subtly different workflow. Sometimes this would be the requirement of different forcing, sometimes the use of ensemble simulations, or sometimes different domain files. The intention here, therefore, is not to provide a manual on how to build a configuration but instead to share the concepts that need to be considered and practices that can be utilised when building a new configuration. This is in part done with code examples. Note that the intention is not to create an automatic method for generating new configurations (e.g. Trotta et al., 2021); NEMO is a continually evolving code base with frequent releases and updates, so any turn-key solution would be quickly depreciated and less appropriate for cutting-edge scientific endeavours. Furthermore, since the process as a whole is complex and an unwelcome barrier to new starters, we have found it instructive to offer recipes that guide the user through the stages that need to be considered. Users are then encouraged to modify these recipes for their own purposes, gaining insight by doing so whilst simultaneously preserving the reproducibility documentation.

On this journey we have developed methodologies that reinforce the principle of reproducibility, which is fundamental to the scientific method. In particular, these practices are aimed at making large modelling frameworks more accessible. These concepts and benefits are discussed in Sect. 2. In Sect. 3 we step through the important considerations that can guide the construction of a new regional configuration. Model- and configuration-specific details are abstracted into worked examples in linked repositories. In Sect. 4 important considerations are described for a selection of modules that can expand the suite of process representation beyond the hydrodynamics (i.e. biogeochemistry, waves, nested domains, and ice processes). Finally, discussions and conclusions are in Sect. 5. The paper is specifically targeted at the NEMO framework, with the hope of thereby making NEMO a more accessible framework for regional ocean modelling. However, the concepts (if not the details) are readily transferable to any regional modelling system.

The scientific method requires reproducibility. However, there is no defined level of documentation or code sharing required to meet this requirement. Here we specifically consider the activities required to reproduce a simulation on the assumption that the numerical solution is independent of the discretisation implementation (e.g. machine architecture, processor decomposition, or grid orientation, which are handled by the modelling framework developers). In our discipline, code has always been available on request from authors, but with increasingly complex code bases, significant levels of expert knowledge are increasingly required to be able to compile and implement the code. The established modelling frameworks such as the MITgcm (https://mitgcm.org/documentation/, last access: 9 February 2023), ROMS (https://www.myroms.org/wiki/Documentation_Portal, last access: 9 February 2023) and NEMO (https://www.nemo-ocean.eu, last access: 9 February 2023) all have comprehensive documentation, with large self-supporting user communities and online forums. Within the NEMO framework this support is invaluable for new users getting started and for community engagement with system development. However, this alone cannot deliver reproducibility, which arguably is minimally implemented within our community.

It is easy to understand how the additional time burden and potential loss of “intellectual property” might disincentivise an individual in making their science too easy to reproduce. Indeed, the strategy for how one chooses to make their work reproducible, or the level one must attain, is not prescribed, and perhaps nor should it be. However, this lack of prescription and the non-trivial amount of expert knowledge required to generate or reproduce simulations can enhance the barrier to new adoption of modelling frameworks.

Beyond being a mandate, reproducibility offers clear benefits to the community. Reproducibility leads to enhanced efficiency, with less time “reinventing the wheel” or consumed by software problems and more time dedicated to science discovery or project deliverables. Reproducibility can lead to a democratisation of skills across a user base and an upskilling for individuals. It can accelerate debugging and therefore accelerate development. Enhanced levels of reproducibility in existing configurations make delivery of new working configurations a realistic prospect within smaller research projects and furthermore make the process more accessible to new regional modellers.

Furthermore, with the progression towards increasingly automated and integrated systems (e.g. https://www.ukri.org/wp-content/uploads/2022/05/NERC-170522-NERCDigitalStrategy-FINAL-WEB.pdf, last access: 9 February 2023), there will be an increasing demand for machine-capable reproducibility.

In recent years, and in response to an increasing demand for new regional configurations, the authors sought to develop such practices. The concepts outlined here are intended to transcend code versions and even modelling frameworks. They are emergent rather than novel, born and distilled from experience and intending to borrow good working practices from the software development community. To be effective, they must be memorable, even obvious.

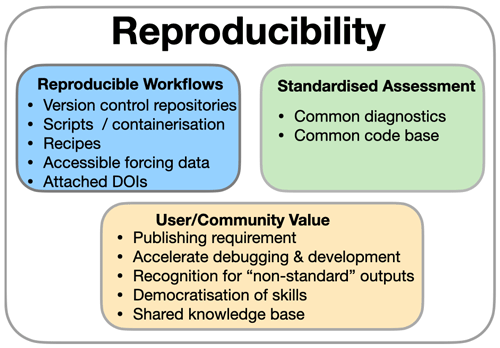

Figure 1The principle of reproducibility is delivered by reproducible workflows and standardised assessment, but this ideal can only be maintained when its contribution is understood and valued.

There are three elements that have precipitated out of our work towards reproducibility (and we are still on the journey). There are two activities, reproducible workflows and standardised assessment, and a third element, value. For the endeavour to be sustainable, the additional activities must produce a recognisable value that exceeds the effort. Schematically summarised in Fig. 1, these are each addressed in the following.

2.1 Reproducible configurations

The first activity within the enhanced reproducibility principle is reproducible workflows (Fig. 1). Reproducible relocatable regional modelling workflows already exist. Indeed, established and alternative workflows should be reviewed and considered when choosing a template appropriate for a new project. Seminal examples include the following.

-

A Structured and Unstructured grid Relocatable ocean platform for Forecasting (SURF: Trotta et al., 2016, 2021, https://www.surf-platform.org/tutorial.php, laast access: 1 July 2022), also using NEMO (and unstructured modelling) to rapidly build and deploy configurations for real-time maritime disaster response. The focus is on operational deployment and reliability. This necessitates a high level of automation and reliance on mature code versions.

-

The NEMO nowcast framework (https://nemo-nowcast.readthedocs.io/en/latest/, access: 1 July 2022). This is a well-documented collection of Python modules that can be used to build a software system to run the NEMO ocean model in a daily nowcast/forecast mode. NEMO nowcast has different, though complementary, ambitions for this paper but is likely to be of interest to the reader.

-

The Salish Sea MEOPAR project documentation (https://salishsea-meopar-docs.readthedocs.io/en/latest/code-notes/salishsea-nemo/index.html, last access: 1 July 2022). This includes extensive documentation for a regionally specific NEMO configuration of the Canadian Salish Sea, which is deployed in various research projects (e.g. Soontiens and Allen, 2017).

Guided by existing examples and our own experiences, in this section the focus is on workflows that can enhance reproducibility whilst maintaining scientific flexibility.

2.1.1 Organised workflows

The key route to effective workflow reproducibility and its benefits is via systematic documentation.

Central to the structure advocated here is the use of the following.

- i.

Version control repositories for modifications to the standard NEMO source code. We arbitrarily choose git and GitHub.

- ii.

Scripts for configuring parts of the set-up that can be automated and labour-saving. These also reside in the git repositories.

- iii.

Recipes to describe the whole process.

It was found that the recipes, which make the whole process transparent from software installation through to assessment and analysis, were especially important in democratising the build process. Even though they took some time to document, the benefit was immediate since multiple scientists and students could independently work on projects without continual reliance on overburdened NEMO specialists.

Documenting the whole process in detail was important since the recipes form a template for subsequent configurations, for which the required modifications are hard to anticipate and could vary in nature from high-performance-computing (HPC) architecture changes to alternative boundary forcing data.

We found the https://github.com (last access: 20 February 2023) platform convenient for workflow management, since the code modifications, scripts and recipes (the latter being in the form of associated wikis) could be co-located under one repository. We also found that the design of an “optimal” template repository was elusive since our various projects and experiments had subtly different requirements, making a universal template unwieldy. We found, therefore, that the most efficient approach to getting the benefits of an accelerated start on new projects was to clone and modify an existing project.

Excellent (and inspirational) examples of this workflow include the long-standing and extensive Canadian Salish Sea MEOPAR project documentation. In the UK the requirements have not been geographically focused and so led to an emphasis on building new relocatable configurations, starting with Lighthouse Reef in Belize (https://pynemo.readthedocs.io/en/latest/examples.html, last access: 6 January 2023) as a demonstrator. Subsequently this was iterated to build early versions of the SEAsia repository (https://doi.org/10.5281/zenodo.6483231, Polton et al., 2022a), which in turn spawned Caribbean (https://doi.org/10.5281/zenodo.3228088, Wilson et al., 2019), which was modified to have scripts to auto-build and run clean prescribed experiments using data recovery from remote storage (https://jasmin.ac.uk, last access: 20 February 2023) and compute on a remote HPC resource (https://www.archer.ac.uk, last access: 20 February 2023). These documented experiences underpinned an ability to scale the number of new configurations, spawning configurations in the Bay of Bengal and East Arabian Sea (BoBEAS: https://doi.org/10.5281/zenodo.6103525, Polton et al., 2020a) and a number of other configurations listed in the Appendix.

2.1.2 Containerisation

Containerisation presents a complimentary route to reproducible workflows that addresses the challenge of code portability between machines. The container provides a reproducible environment in which code, an ocean model in this instance, can be developed and executed. This greatly simplifies issues associated with conflicts between software library versions and operating system versions. Through the use of containers, we can begin to construct an end-to-end scientific study, even including the pre-/post-processing tools used in a peer-review publication. The use of container images has been gaining traction in academia over the last decade, with several instances of their use in the geophysical sciences, e.g. Hacker et al. (2017), Melton et al. (2020) and Cheng et al. (2022).

Container images contain pre-built applications together with their dependencies, such as specific versions of programming languages and libraries required to run the application. This image file is used by the container software on the host system to construct a run-time environment from which to run the application, providing an attractive and lightweight method of virtualising scientific code. It provides a run-time environment that is independent of the host system and highly configurable and removes the set-up and compilation issues potentially faced by the user.

The idea of moving towards full reproducibility makes it easier for peers to appraise these numerical scientific studies and possibly build on them in future. Providing consistent compute environment containers will also benefit the development cycle of the code and increase its longevity. There are many software containers to choose from: Docker (https://www.docker.com, last access: 9 February 2023), Shifter (https://github.com/NERSC/shifter, last access: 9 February 2023), Charliecloud (https://hpc.github.io/charliecloud, last access: 9 February 2023), RunC (https://github.com/opencontainers/runc, last access: 9 February 2023), SingularityCE (https://docs.sylabs.io/guides/3.10/user-guide/, accessed 9 January 2023), Apptainer (https://apptainer.org, last access: 9 February 2023) and Podman (https://podman.io/, last access: 9 February 2023). Each offers its own advantages and compromises. Two that have gained a lot of traction within the scientific community over recent years are Docker (with a focus on cloud computing and local desktop deployment) and Singularity (in the realm of HPC systems). Though this is not yet a routine part of our workflow, it has been an essential part of several successful projects, and its use continues to be explored.

- Example: Docker

-

Docker is an established containerisation software package that effectively streamlines the process of building, launching and managing containers. It originally required root privileges, and message passing was not trivial. However, installing Docker and running a demonstration NEMO container on new machines was so simple that we were able to deliver workshop training to run NEMO configurations on participants' consumer-grade laptops. Docker abstracted all the challenges of participants' subtly different Microsoft and Mac software libraries into a single controlled build within a container (with a Linux OS) that they could each install. This demonstration is made available through the Belize configuration.

A more complex example was to build a Docker container with MPICH – a portable implementation of the Message Passing Interface (MPI) standard – to compile and run the NEMO ocean engine with parallel processing to run on Google Cloud for a much larger computational problem, with message passing between containers, in the Bay of Bengal and the East Arabian Sea (see e.g. the BoBEAS example with MPI Docker implementation). This ocean configuration is subsequently implemented in a coupled ocean–land–atmosphere regional suite (Castillo et al., 2022).

- Example: Singularity

-

One of the main strengths of Singularity is that it provides a means of containerisation to the scientific computing and HPC communities. It is increasingly gaining traction within academic communities, with the key motivators being that Singularity is an open-source project and that Singularity can be run without root privileges on the host machine.

In a recent eCSE (https://www.archer2.ac.uk, last access: 20 February 2023) project, CoNES (https://cones.readthedocs.io/en/latest/, last access: 20 July 2022), we developed a general method of containerising NEMO using Singularity. We provided automated recipes by which a user can build a Singularity image file with their chosen version and components of the NEMO code. This immutable image file is then portable to a range of host systems. As part of the CoNES project the performance of the containerised code was compared against a natively compiled code on the ARCHER2 HPC system (https://www.archer2.ac.uk, last access: 20 February 2023). With minimal optimisation, the NEMO containers performed, on the whole, within a few percent of the native code's run times (in some instances actually more quickly).

Developing workflows for research can be a complicated and iterative process, and even more so in a shared and somewhat rigid production environment. Containers provide a flexible working environment for development and production. As demonstrated, they can be a useful tool for teaching and removing barriers for new users by removing the overhead of setting up software in new environments and the challenges faced when attempting to adapt incompatible instructions to their bespoke environment.

2.1.3 Accessibility of forcing and input data

Accessible input and forcing data are fundamental to (e.g. machine-readable) reproducible workflows and merit some comment. There are two issues here. Firstly, a working configuration can only be uniquely defined if it includes specification of the external input data (e.g. bathymetry file, initial conditions) and any forcing data (e.g. meteorology, tides, rivers) as well as complete details of the model parameters and code used. Therefore, replicating the results is only possible with those same inputs. At one level this appears to be an issue of semantics, but precise terminology here offers clarity on what is required in order to satisfy the expected publishing requirement of reproducibility. In short, all the input data should be available.

The second, follow-on, issue is to address how this requirement can be satisfied when for large model simulations this level of data storage might be problematic without advanced planning.

Clearly, adopting a recipe approach or even a container approach has much to offer here. Effective scripting with these tools can decrease the expertise level required to reproduce a configuration build. For established community models, such as NEMO, the resulting “configuration-defining” material can be reduced to a number of scripts and a small collection of curated files that specify and execute modifications to standard downloadable source code and datasets.

Similarly effective scripting can alleviate the data storage burden associated with making the forcing data available. Atmospheric forcing, in NEMO for example, is specified via namelist definitions and is mediated through weight files that transform the original data onto the target grid. Effective scripting can be used to download the raw forcing files and generate the weight files. In this way, a configuration with a namelist that specifies a particular forcing can be reproduced with reduced effort.

These recommendations require the input and forcing data to be openly available. Of course, this is not always possible if input data are commercially sensitive. This can be problematic to the scientific method, though data that have had some level of processing can sometimes be made available to satisfy both privacy and reproducibility requirements.

In summary, there are a number of forcing datasets that are required, which need to be available if the configuration is to be reproducible. By way of example, for a regional operational-type ocean model (e.g. Graham et al., 2018), forcings include rivers, lateral boundary conditions (time-varying and tidal harmonics, as appropriate) and surface boundary conditions. Finally, in addition, the bathymetry and grid configuration are required. All these data require a level of pre-processing to prepare them for use. A pragmatic middle ground would be to include the pre-processing methods, from the point of externally available sources, in the configuration along with a small sample of processed model-ready files. To ensure that (i) the model can be run for a short period with demonstration files, (ii) forcings for long simulations can be replicated in the same way.

2.2 Standardised assessment

The second activity within the enhanced reproducibility principle is standardised assessment. The accuracy of output from any model configuration should be assessed by comparing it to equivalent observations. In the case of idealised configurations an assessment can be made against expected outcomes from theory or laboratory experiments. This is done to quantify how closely the model is able to simulate the reality it attempts to replicate. For forecasting models it is clear why this important: the accuracy of predictions can have significant impacts on the communities involved. For scientific applications it is equally important when simulating realistic regions, as the scientist must have confidence in analyses, inferences and conclusions about the physical processes of interest. Error compensation may mean that improving the model with new and realistic processes degrades the comparison with observations. This is likely to be acceptable for scientific applications but less so for operational cases. Note that this is a principle rather than a prescription, since the requirements will vary according to the modelling application. In this section we provide an outline of the key ideas behind the principle, highlighting the net benefits and advocating its importance. We consider there to be two elements to standardised assessment (see Fig. 1).

- A standardised framework

-

The framework prescribes templates for how different (class) objects should be structured and requires all ingested data to be of a defined class. For example, all data are transformed into Xarray (Hoyer and Hamman, 2017) objects with standardised dimensions and variable names according to their data type. This means that an equivalent assessment may be applied to data from different models (e.g. NEMO, ROMS, FVCOM) and comparisons made to observations from any source (e.g. profile data from EN4, Good et al., 2013, or World Ocean Database, Boyer et al., 2018a, sources).

- Common diagnostics

-

By defining classes to be built upon Xarray datasets, the powerful data handling capabilities are accessible to the framework. Furthermore, since all data loaded into the framework are of known types and properties, generic diagnostics can be written for each class, which avoids the requirement for hardwired details relating to the data origin. The data-source-specific details are abstracted to the framework.

With this separation, contributors can more cleanly add to the diagnostics or the framework according to their interests and skills. This philosophy has been implemented, for example, in the Coastal Ocean Assessment Toolkit (COAsT) framework (https://british-oceanographic-data-centre.github.io/COAsT/docs/, last access: 9 February 2023).

2.2.1 Benefits to the user

Standardised assessment workflows can benefit the user. Efficient workflows can accelerate the development process in highlighting how to iterate the configuration's tunable inputs. This practice may appear obvious, though in our experience tools for test-driven development are far from standard in the oceanographic modelling community. The practice comes from software design where an extreme form of test-driven development would be to write the tests before the application (see e.g. Beck, 2002, for background). This extreme form may not be appropriate for ocean modelling, where the simulations are governed first by physical laws rather than skill, but the practice of having a standardised assessment that can be easily executed (e.g. in a script form) could nonetheless accelerate refinement of tunable parameters that are otherwise unconstrained by physical laws.

Progress in HPC performance produces simulations with increasingly larger volumes of data. These datasets increasingly require specialised tools to diagnose or manipulate. Standardised workflows for assessment can be built that abstract aspects of the high-performance computing data manipulation into generalised software libraries, thereby jointly freeing up science time whilst also increasing access to a broader range of scientific users. Furthermore, with community engagement in a standardised workflow, a broad range of specialist skills can each contribute their expertise to the mutual benefit of all the users.

2.2.2 Benefits to the scientific knowledge base

Ideally, standardised assessment is bound with the principle of reproducibility and reproducible workflows, so that any shared configuration would include the scripts to generate it and also the means to generate a verification report. This practice would appear similar to the Copernicus Marine Service, who provide Quality Information Documents (QUiDs) with the catalogue (e.g. the Atlantic-European North West Shelf-Ocean Physics Reanalysis product and QUiDs: https://resources.marine.copernicus.eu/product-detail/NWSHELF_MULTIYEAR_PHY_004_009/DOCUMENTATION, last access: 9 February 2023), but could be generated entirely automatically.

Standardised assessment makes it easier for the community to assess simulations of the same domain, and the full battery of results could be expected to appear as part of a published configuration (not necessarily peer-reviewed). This would allow for the quick and easy identification of the resultant impact of changes to the code or alterations to parameterisations or boundary conditions for certain pre-defined cornerstone metrics.

This transparency would relieve problems associated with selectively presenting only the most favourable outcomes when publishing in the peer-review literature (under page count constraints).

2.2.3 Examples

The concept of standard or shareable verification, or assessment, tools is not novel. Indeed, the concept is born from demonstrated successes with increased productivity (in more rapidly developing cumbersome NEMO simulations) and increased user engagement (using well-written worked examples to develop NEMO and Python skills). Many package examples exist, each with their specific motivations and specifications. Notable mentions include the following.

-

ESMValTool (https://www.esmvaltool.org/index.html, last access: 8 February 2023): a community diagnostic and performance metric tool for routine evaluation of Earth system models in CMIP.

-

COAsT (https://doi.org/10.5281/zenodo.7352697, Polton et al., 2022b): a diagnostic and assessment toolbox targeted at kilometric-scale regional models leaning heavily on the Xarray and Dask Python libraries (Hoyer and Hamman, 2017). The package brings simulations and observations into a common framework to facilitate assessment (see Byrne et al., 2022).

-

Pangeo (https://pangeo.io/, last access: 8 February 2023): a community project promoting open, reproducible and scalable science providing documentation, developing and maintaining software, and deploying computing infrastructure to make scientific research and programming easier. Pangeo offers scientists guides for accessing data and performing analysis using open-source tools such as Xarray, iris, Dask, jupyter and many other packages.

-

nctoolkit (https://nctoolkit.readthedocs.io, last access: 9 February 2023): a comprehensive and computationally efficient Python package for analysing and post-processing netCDF data.

Taking an example from the open-source Python user community, much of the scientific software written is underpinned by open-source packages such as NumPy, for scientific computing (Harris et al., 2020), Matplotlib, for plotting (Hunter, 2007), and Xarray, for manipulating multi-dimensional data, originally with a geoscientific focus (Hoyer and Hamman, 2017). Though these do not directly lead to standardised assessment tools, it is important to highlight (a) their fundamental importance in underpinning any development of open-source Python scientific software development. (b) They also serve as successful templates for how to coordinate, develop and maintain standardised assessment tools.

2.2.4 Cautions

There are finally two notes of caution. The rise and fall of software stacks is strongly influenced by the ease with which they can be adapted (and kept up to date) and adopted (for new users, is the learning investment required offset by the expected gains?). The former is addressed by the aforementioned design choices, with the additional observation that (at least in a Python environment) software appears to benefit from regular refreshing to update libraries and avoid obsolescence through depreciated code. The latter is aided with thorough documentation and working worked examples: worked examples accelerate user adoption and give insights into how the package is designed to be used.

The second note of caution is in “standardised assessments” being applied beyond the scope of their design specification or expected use. For example, erroneously inputting daily instantaneous temperatures when daily averages were expected would produce biased results, or a more subtle example can be demonstrated when computing flux quantities as the product of two variables: combining 5 d-averaged fields can miss the bolus interaction if the fields are correlated at a higher frequency (e.g. heat or volume transport calculations). To this end, the code would prioritise readability over efficiency, and users would be encouraged to help improve the code base.

2.3 Sustainability: a tension between cost and value

The above recommendations to enhance reproducibility have a fundamental problem: they require additional work from individuals.

Clearly, a user can benefit from workflows and tools that others have created without adding to the knowledge base. This would be of no additional cost to themselves. A user could also choose to curate their code and notes in version-controlled repositories. Except for some initial familiarisation with new tools and practices, this is not additional work. Furthermore, they would likely benefit from accelerated debugging, particularly if help is sought.

However, for the process to work, content must be created by people with appropriate expert knowledge and disseminated for the benefit of those seeking to upskill in this area. In some national laboratories or under large consortium programmes, roles can exist to support this community endeavour. For example, NumPy has direct sponsorship, and NEMO itself is sustained by significant support across an international consortium. However, with limited resources, any activities not appropriately valued will suffer neglect. A crucial aspect, therefore, is to appropriately recognise and reward the contributors for the value realised in shareable model configurations and assessment approaches, for example through well-thought-out career pathways that acknowledge non-traditional science outputs where peer review is not appropriate. Output metrics could include repository page views, downloads or other esteem indicators. A practical recommendation towards this would be to normalise inclusion of non-traditional contributions to curricula vitae (CVs), which could be facilitated by the adoption of narrative-format CVs. However, regardless of CV format, the change would fundamentally require a culture shift towards valuing community contributions alongside traditional peer-reviewed publications.

This section seeks to distill important considerations when building a new configuration. These insights are synthesised from a wide range of experience and expertise across the ocean modelling community and can help prioritise elements in a sequential build plan.

The ordering is progressive, starting with prioritisation of the leading-order processes for simulating, obtaining and building the code, proceeding through domain construction and then on to construction of external forcings. Each stage has a discussion highlighting the major options or considerations to be factored into the design. Each stage also has links to worked examples.

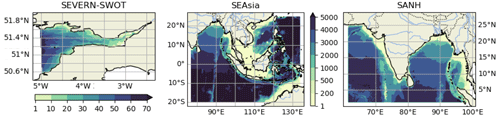

The technical detail in these worked examples is given in the accompanying repositories. These are SEAsia, a South-East Asian domain (https://doi.org/10.5281/zenodo.6483231, Polton et al., 2022a), SANH, a South Asian domain (https://doi.org/10.5281/zenodo.6423211, Jardine et al., 2022), and SEVERN-SWOT, a 500 m macro-tidal coastal domain (https://doi.org/10.5281/zenodo.7473198, De Dominicis et al., 2022) (see Fig. 2). Each configuration has subtly different requirements and subtly different challenges which influence configuration design, so we cite this range of examples to demonstrate a range of use case scenarios that might be instructive for the reader and to demonstrate the reproducible workflows that we are advocating. (At the time of writing these configurations are all being actively developed. Though the releases pointed to herein are static and valid, they may be improved upon.)

Figure 2Bathymetry (m) from the Severn Estuary, South-East Asia and South Asia worked example configurations.

3.1 Build planning and process prioritisation

The first stage consists of planning the new configuration and how to build it sequentially. The concept is to systematically increase the complexity of the processes represented with the associated efficacy testing. This is done in order to verify that the simulated processes behave as expected and also to assist with error trapping should unexpected behaviours manifest. The first step, therefore, is to prioritise processes that need careful attention or that dominate the system. For example, many simulations in the South China Sea may be dominated by tides, whereas simulations in the Mediterranean or other inland seas may be dominated by winds. Alternatively, for simulations in the northern Bay of Bengal, special consideration may be required to accurately represent the freshwater input and its seasonal variation. Similarly, in the case of models of the Arctic or Antarctic shelves, special care should be paid to properly represent sea ice and ice shelf processes. In practice, these priorities cannot be set without consideration of the intended use of the model and will therefore be application-specific. However, having formulated a priority list, experiments can be designed whereby separate processes can be tested to sequentially build complexity in the target configuration. This is particularly useful in light of the fact that the choice of the numerical techniques adopted to solve the governing equations will inevitably affect the realism and accuracy of many of the physical processes explicitly resolved or parameterised by the ocean model (e.g. Haidvogel and Beckmann, 1999; Griffies et al., 2000). Not all processes are separable or easy to separate, and so a measure of pragmatism is required to get to the final configuration with a minimum of unnecessary complications.

As a guideline, a number of worked examples are set out in the associated repositories, which include adding tidal processes to configurations where the parent models did not have tides. For these simulations, proper representation of tides was considered fundamental. For this we choose to use terrain-following coordinates to better represent shallow-water processes and tidal forcing from FES2014 (Lyard et al., 2021). For the SANH and SEAsia examples, this represents a significant departure from the parent model (without tides and computed on geopotential levels), though many other aspects of the parent and child configurations are similar. Another example, SEVERN-SWOT, details the workflow for (one-way) nesting of a 500 m resolution child configuration in a macro-tidal coastal regime.

3.2 Obtain code, compile model executables and build tools

This step is to obtain the code and build the required NEMO-supported tools. This process is generic for all configurations. This step is largely software maintenance, rather than natural science, and can be largely automated once a successful workflow has been established. Official NEMO guidance can be found on the consortium web pages (https://sites.nemo-ocean.io/user-guide/setup.html, last access: 8 February 2023). As a necessary precursor to subsequent steps, we provide linked scripts and associated wiki documentation in the SEAsia repository (https://github.com/NOC-MSM/SEAsia/, last access: 8 February 2023) as a NEMO4 worked example on ARCHER2 (https://www.archer2.ac.uk, last access: 20 February 2023). From experience we note that there is no single “best way” to structure the directory tree, and flexibility should be encouraged according to the simulation or simulations required.

At this point it is worth briefly mentioning how users can implement choices in modelling frameworks, such as NEMO, since some choices are made at compilation and are therefore hard-wired into the executable. In NEMO there are two tiers of hard-wired choices. At the upper tier choices are made that activate NEMO modules, in addition to the core ocean (OCE) physics module, for example enabling ice or biogeochemistry capability (see Sect. 4). These modules are then compiled together. At the lower tier, choices are made within modules about which code blocks need to be compiled. These are set with compiler keys (e.g. https://github.com/NOC-MSM/SEAsia/blob/master/BUILD_EXE/NEMO/cpp_file.fcm, last access: 8 February 2023) and are used to activate, for example, MPI capability (key_mpp_mpi) and XIOS coupling (key_iomput) for input–output management. However, the majority of parameters that the user will edit, typically those which define details of and control the simulation (e.g. time step and duration, forcing data location, parameterisation choices and coefficients), are contained within namelist files that are read at run time. Therefore, for many applications, on completion of these compilation steps, the resulting NEMO executables and tools can be used for many configurations. The general direction of travel for NEMO is away from compiler keys to run-time configuration. Further NEMO-specific details are given in the worked examples.

3.3 Model domain and geometry

After planning the new configuration and successfully building the machinery needed to run it, the following stage is to identify the most appropriate model domain, horizontal and vertical resolutions, and discretisation schemes needed to adequately resolve the spatial scales and the processes the new configuration is targeting. The final outcome of this crucial stage is the definition of the 3D geometry of the model domain, including the horizontal and vertical coordinates and the 3D grid spacings, which typically vary for variables defined on the different faces, corners and centre points of the grid cells.

Starting from version 4.0, NEMO loads at run time an externally generated domain configuration file containing all the relevant grid geometry information. This separation of the grid-generation process from the dynamical core permits a flexible approach to grid construction. If the planned configuration is based on an existing NEMO configuration (or idealised geometries), then the work to build a new domain configuration file can be done by tools and guidance that are supplied with NEMO. However, if the proposed work is for a new regional configuration, as is the main theme of these workflows, then the guidance outlined below is indispensable for directing the process.

-

Locations of boundaries. For the worked example, these are chosen with consideration of the tidal harmonics. It was verified (using TPXO9, Egbert and Erofeeva, 2002, FES2014, Lyard et al., 2021, or another tidal product) that none of the four largest semi-diurnal or four largest diurnal species had amphidromes near the proposed boundary, since for fixed relative errors in tidal amplitudes, small absolute errors at the boundary could scale to large absolute errors in the interior.

A principle of regional ocean modelling is to nest with the parent model in the deep ocean. Ocean–shelf exchange processes are complex and fine scale and exert a first-order control on shelf seas (Huthnance, 1995), so it is expected that they would be better represented by the child model than the parent model. This choice comes with two penalties. The deeper water necessitates a shorter barotropic time step, and steep continental slopes can cause issues of horizontal pressure gradient error for terrain-following coordinate models. So, the alternative of nesting on-shelf is also preferred for some studies (e.g. Holt and Proctor, 2008).

-

Boundary bathymetry. In a regional model, the boundary interface with the parent model is a likely source of instability, especially if the grids are different. In light of this, the boundaries can be chosen to avoid grid-scale highly irregular bathymetry near the boundary (small islands or sea mounts and steep bathymetry) and to seek near-orthogonal intersections between the boundary and the features, as done in the SEAsia configuration. An alternative is to precisely match the bathymetries at the boundary, with the child being interpolated to the target resolution and then having a halo region inside the boundary, where the bathymetry is smoothly transitioned to the target child bathymetry. However, this sophistication is not required for our examples.

-

Bathymetry pre-processing. The final bathymetry will be mapped onto the target grid. For a wide-area domain this may require patching together bathymetries from separate surveys or, more likely, using a gridded product where merging has already been performed. It is worth checking the data for spatial discontinuities and applying appropriate filtering (though being cautious not to oversmooth length scales explicitly resolved at the model's resolution). Conversely, bathymetry projected onto the target resolution may adversely affect the large-scale flow (e.g. if a narrow strait becomes closed or the flow is otherwise restricted, or islands are lost). Similarly, bathymetry at the target resolution may produce instabilities (if model levels get too thin with ebbing tide) or generate spurious currents for terrain-following coordinates over steep bathymetry. In these situations user-defined modifications to the bathymetry might be necessary according to the target grid (see e.g. the SEVERN-SWOT configuration). Note that the absence of systematic recording of the steps taken for bathymetric pre-processing is an endemic problem in model reproducibility that should be avoided.

-

Bathymetry reference datum. An important aspect of processing bathymetry data, for use in a numerical model, depends on the vertical reference datum in the source grid. Configurations with large tides and/or surges need to pay particular attention to the source bathymetry datum. Often the bathymetry is referenced to the lowest astronomical tide (LAT), e.g. EMODnet (Lear et al., 2020). While it is useful for chart data to be referenced to LAT for navigational purposes, the bathymetry needs to be referenced to the mean sea level (MSL) for modelling purposes. There are however fundamental limitations to the accuracy of this process since LAT is not accurately known in the absence of multi-year tidal records. Therefore the process of referencing and de-referencing the bathymetry to LAT is problematic. Nevertheless, it may be achieved to first order from a long multi-decade integration of a tide-only model of the region of interest by using the lowest obtained sea level as a proxy for LAT.

-

Negative bathymetry and reference geopotential height. For configurations that involve inter-tidal zones, the bathymetry can be negative relative to MSL. To deal with negative bathymetry, NEMO can use a reference geopotential level defined at some height above MSL, so that all potentially wet points are below this reference level. Care is required when generating initial conditions and stretching of vertical coordinates to take into account the use of a non-zero reference depth.

-

Critical depth for wetting and drying. In NEMO there is the option to allow for a grid cell to dry out as the tide ebbs. This is implemented in practice by limiting the fluid flux out of the cell when a user-defined minimum depth is reached. The specification of this minimum depth will be application-dependent (typically a few centimetres) and requires a compromise between maintaining numerical stability, for a given time step, against the enhanced realism of thinner critical depths (see for example the SEVERN-SWOT configuration).

-

Grid discretisation. When designing the computational mesh, the lateral extent of the domain and the 3D resolution are likely to be determined by the experiment requirements and the available HPC resources. In the horizontal direction, NEMO supports structured quasi-orthogonal curvilinear quadrilateral Arakawa These types of grids can offer a goodC grids. These types of grids can offer a good degree of compromise between flexibility and accuracy, allowing one to improve the representation of many coastal processes (e.g. the propagation of Kelvin waves and land–ocean interactions through the aligning of grid lines with the coast Adcroft and Marshall, 1998; Greenberg et al., 2007; Griffiths, 2013). In addition, they can be used to refine the resolution in a specific location of the domain to improve for example shelf–open-ocean exchanges (e.g. Bruciaferri et al., 2020). However, since they rely upon analytical coordinate transformations, they typically have limited multi-scale capability in comparison to more versatile (e.g. triangular-mesh) unstructured grids (Danilov, 2013). In the vertical direction, NEMO takes advantage of quasi-Eulerian generalised vertical coordinates , where the time dependence allows model levels to “breathe” with the barotropic motion of the ocean. In domains with shallow seas and tidal dynamics, a species of terrain-following coordinates is often adopted in order to provide vertical resolution to resolve highly dynamic tidal processes on the shelf (Fig. 2) as well as being able to resolve the open-ocean forcing conditions and structured water-mass properties (see for example Wise et al., 2022). In a linked example (e.g. SEAsia) we chose 75 levels of hybrid z-sigma vertical coordinates. These were configured so that below the 39th level (at around 400 m) the coordinates would transition to the z-partial step so as to favourably compare with the parent z-partial step.

-

Process-oriented experiments. It is often useful to conduct simple numerical experiments to assess whether the chosen 3D model geometry is numerically stable and accurate enough for the target application. For example, steeply sloping model levels can introduce errors into the computation of the horizontal pressure force (e.g. Mellor et al., 1998). In such a case, conducting idealised horizontal pressure gradient tests can be instructive to ensure that the chosen vertical discretisation scheme does not introduce undesirable spurious velocities (e.g. see experiment 1 of Bruciaferri et al., 2018, or Wise et al., 2022, for details on the idealised or realistic scenarios, respectively). Similarly, geopotential z coordinates can introduce excessive spurious entrainment and mixing when simulating gravity currents (e.g. Legg et al., 2006). Therefore, idealised cascading experiments similar to the ones of Wobus et al. (2013) or Bruciaferri et al. (2018) can be useful for revealing excessive dilution of dense overflows. Finally, tide-only forced experiments in barotropic (first) and stratified (after) set-ups can be extremely useful for early detection of issues in the model geometry that could negatively affect the accuracy of the simulated tidal dynamics (see experiment 3 of Wise et al., 2022, for details).

Worked examples are given for the https://doi.org/10.5281/zenodo.6423211 (Jardine et al., 2022), https://doi.org/10.5281/zenodo.6483231 (Polton et al., 2022a) and https://doi.org/10.5281/zenodo.7473198 (De Dominicis et al., 2022) domains. For these new configurations, initial tests were conducted to ensure that horizontal pressure gradient errors were acceptable and that the tides were simulated accurately. Having addressed any emergent problems with these tests, additional complexity can be sequentially added: realistic initial conditions, realistic temperature, salinity and velocity open boundaries, meteorological forcing and finally freshwater forcing.

In summary, the steps to create the domain file are to first create a set of coordinates for the target grid and then make a bathymetry for these coordinates. Finally, extend the domain in the z direction with the chosen types of vertical coordinates to complete the 3D discretisation of the domain.

Note that the domain configuration file is static with respect to time. Any time variability in, for example, the vertical grid can be captured at run time with the output files.

3.4 Initial conditions

Initial conditions can be idealised or realistic. Effective use of appropriate initial conditions can expedite the spin-up of a model in slowly evolving regions of the domain (e.g. deep-water salinity). However the initial conditions are constructed, it is likely that they are imperfect and that at least some spin-up time is required for dynamical adjustment on the child grid. For example, the default initial condition machinery in NEMO uses only temperature and salinity with the expectation that the velocity field can be spun up from rest.

An alternative is to pose a “soft-restart” state rather than initialising the model from rest. In the soft-restart method, salinity, temperature, current velocities and sea surface height from, for example, a coarser-resolution model or a reanalysis product are interpolated into the model grid and are then used to create a pseudo-restart file. Using this method requires a shorter spin-up to allow the model to adjust to any instabilities. The worked example in the SEAsia repository details how to generate a pseudo restart for a reanalysis product.

A principle is to match initial-condition and lateral-boundary-condition data (for temperature and salinity). A mismatch here can generate density-driven currents tangential to the open boundary, which may persist long into the simulation under geostrophy. The most common potential challenges can be grouped into issues arising from inconsistencies across products.

-

Parent-to-child grid interpolation. The parent and child grids are likely to be different. Therefore, the coastline and bathymetric features will likely have different representations such that the child might have land points where the parent has wet points or vice versa. Flood filling prior to interpolation (lateral filling of all land points with nearest-neighbour wet-point values) and downfilling isolated canyons (using e.g. SOSIE tools (https://github.com/brodeau/sosie, last access: 27 May 2022) can address issues of bathymetric representation following interpolation. Additional smoothing of tracer fields may also be required if, for example, new straits are opened by the child grid. Furthermore, the representation of the ocean interior might be different between the two grids. For example, a pycnocline might be poorly represented between two thick levels in the parent grid, but how should this “step” be represented in a finer-resolution child with increased vertical resolution? Whatever method scheme is chosen, it is likely therefore that some spin-up will be required to let fine-scale features evolve. This spin-up should be of a similar order to the flushing time of the shelf sea basin (ranging in coastal ocean regions from several days to multiple years: Liu et al., 2019).

-

Equation of state. With a non-linear equation of state, interpolating temperature and salinity onto a child grid will not ensure preservation of static stability. Alternatively, the equation of state that generated the parent data might be subtly different from the equation of state in the child model. Though these effects are likely to be small and quickly dissipate, in practice they have been seen to trigger convection in marginally stable environments. So, checking for static stability of the initial condition is recommended if stability issues arise in the first few time steps.

However the initial conditions are constructed, it is likely that they are imperfect and that at least some spin-up time is required for dynamical adjustment to the child grid to occur.

Even if the target initial conditions are prescribed as being from a “realistic” source, it can be an instructive and time-saving route to a final configuration to start with idealised initial conditions. NEMO has the facility to compile user-defined initial conditions into the executable, which can be invoked by namelist parameter choices at run time. In the supporting SEAsia repository, two examples of idealised initial conditions are used: (a) domain-constant temperature and salinity; (b) horizontally homogeneous temperature and salinity, constructed from the World Ocean Circulation Experiment (WOCE) climatology to be broadly representative of the region. The latter is used to assess the horizontal pressure gradient errors in an unforced run, thereby testing the limitations of the vertical discretisation.

3.5 Open-boundary conditions

The lateral boundaries are the points that define the horizontal extent of the model domain. Information must be specified at these points to constrain the interior solutions, effectively providing a forcing to the model. When the regional model differs from the model that was used to generate the boundary data, which typically is the case, differences between the interior solutions will emerge. An open boundary is a way to specify the external forcing while allowing phenomena produced within the interior domain to exit across the boundary without disturbance. In some sense open boundaries allow the physical domain to extend beyond the boundary of the computational domain, for example by allowing a wave to exit the domain without reflecting back into the domain.

It is important to recognise that the formulation of open-boundary conditions tends to be based on simplified physics, focusing in particular on the hyperbolic part of the dynamics. In general, these open-boundary conditions will not be perfect, and care must be taken to assess instabilities and model inaccuracies attributable to the boundary conditions. For example, a parent model that is eddy-rich may result in data that appear noisy, leading to a mismatch in dynamics at the boundary.

NEMO offers a number of namelist options to specify different open-boundary condition formulations as well as set the frequency of the supplied data. These data typically come from an external parent model with a much lower frequency (typically daily, 5-daily or even monthly for global products). There is an option to interpolate in time.

It is possible to specify “structured” open boundaries that define the northern, southern, eastern and western boundaries as well as “unstructured” open boundaries. While the former is useful in idealised set-ups, unstructured boundaries enable complex geometries defined by a supplied coordinates file. In cases where different boundaries have different requirements, it is possible to define multiple sets of unstructured open boundaries that can use different namelist options and datasets.

The namelist is organised so that boundary conditions are separated into the 2D depth mean velocities and sea surface height, the 3D depth-dependent velocities (perturbations from the depth mean) and the 3D tracer fields. Tidal harmonics can also be specified as part of the 2D fields. Following the principle of building up complexity, it is worth configuring the open boundaries for the depth mean velocities first. This can include tidal harmonic forcing.

The choice of boundary condition, for the 2D velocities, is primarily a choice of which radiation condition to use (e.g. Flather, 1976, or an Orlanski e.g. Marchesiello et al., 2001, scheme). For the 3D velocities and tracers, one can also choose to relax the child field to the external data over a buffer zone or apply a condition to the normal flux or normal gradient. For example, it is possible to apply a radiation condition to the 2D velocities, a flow relaxation scheme to the tracers and a zero gradient to the 3D velocities.

The key considerations are whether the open boundaries are affecting either stability or accuracy. Some specifics to consider include the following.

-

Parent to child. The boundary data will likely be associated with bathymetry that is different from that used by the child model. This can result in differences between the parent and child in terms of transport across the boundary. It may therefore be beneficial to match the bathymetry along the boundary. Another consideration is whether to pre-process the velocities so that the transports in the child match those of the parent when regridded. Note that NEMO allows the user to provide files that contain the full velocities (2D + 3D), which it will then separate at run time.

The boundary data will also likely be associated with a vertical grid that is different from the child vertical grid. If they have not been regridded, then NEMO provides an option to vertically interpolate onto the native grid at run time. For 3D velocities this could lead to inconsistency with interpolated tracer fields.

Changes in grid and bathymetry may also result in sections of the boundary that are separated from a land point by thin strips of wet grid points. This may result in spurious currents and a need to mask out certain grid points.

The boundary velocities may also need to be rotated. If the external velocities are specified as rectangular, for example, they might require rotation to be correctly oriented on a spherical grid.

There may also be temporal differences between the child and the parent. Specifically, models can be set up to ignore leap years, which may result in the boundary data becoming out of synchronisation with the child model time.

Finally, even if the vertical grids are the same, mismatches can occur if different types of free surfaces are applied: many regional applications use non-linear free surfaces, whereas global models often use fixed z levels. These effects are strongest in the surface layers and could be mitigated by constructing boundary conditions from volume fluxes, if appropriate.

-

Tides. As previously noted, tidal amphidromes should ideally be away from the boundary. Additionally, as previously noted, a mismatch in parent–child bathymetry can result in a mismatch in transports; this also affects transports due to tides. Relatedly, it should be ensured that tides are not present in the external boundary data if tides are also specified with harmonics.

-

Volume conservation. Open boundaries can allow gain and loss of water through the boundaries which may result in drift in mean sea level and accumulate dynamical errors. NEMO provides an option to maintain constant volume via a correction. For a model including tides, however, this could be considered inappropriate.

-

Spurious currents. Spurious currents can be generated at open boundaries that appear trapped but that may affect the interior momentum over time. Areas where the boundary intersects the continental margin are particular areas of concern because the sloping bathymetry can act as a wave guide for spurious variability. A further consideration is the effect on non-physical aspects of the model, such as biogeochemistry (see Sect. 4.1). High vertical velocities at the boundary may not be apparent due to flow relaxation at the boundary. However, tracers that are not relaxed at the boundary will feel the effect of spurious vertical currents.

Following the above guidance to build sequentially, whereby complexity is incrementally introduced, it can be instructive to include open-boundary conditions with a sequence of developments. Our workflows lean heavily on the PyNEMO community Python tool (https://github.com/NOC-MSM/PyNEMO, last access: 4 July 2022). A tide-only example (forcing by FES2014 tides, initial temperature and salinity are set constant, velocities are initialised as zero and boundaries are set to initial values) can be found in the SEAsia and SEVERN-SWOT repositories. The documentation includes generation of the boundary conditions and running of the model. For boundary conditions including 2D and 3D velocities as well as temperature and salinity, see the SEVERN-SWOT repository. The documentation includes generation of the boundary conditions and setting of the namelist.

3.6 Atmospheric forcing

In this discussion we consider atmospheric forced ocean models and atmosphere–ocean coupled models.

3.6.1 One-way atmospheric coupling

In the one-way (forced-ocean) set-up, it can be helpful to consider that the meteorological processes can affect either the thermohaline properties (via heat and radiation fluxes or precipitation and can be applied as boundary conditions in the tracer equations) or can affect the ocean momentum (via pressure and wind speed). These atmospheric boundary layer processes must be parameterised in order to compute fluxes with which the ocean can be forced. NEMO has a number of parameterisation options (or Bulk formulations): (i) NCAR (Large and Yeager, 2004), designed for the NCAR forcing, but also appropriate for the DRAKKAR Forcing Set (DFS) (Brodeau et al., 2010); (ii) COARE3.0 (see Fairall et al., 2003); (iii) COARE3.6 (see Edson et al., 2013); (iv) ECMWF, appropriate for ERA5 data (Beljaars, 1995); (v) ANDREAS (see Andreas et al., 2015). Alternatively, if all the atmospheric fluxes are known, these can be supplied directly as surface boundary conditions.

In addition to choosing the appropriate source and type of surface boundary condition, there are a few additional considerations to be borne in mind when preparing the atmospheric forcing dataset for a target child model that has different spatial or temporal discretisations from the parent. (1) Calendar stretching (e.g. 3 h forcing on a 360 d calendar being mapped to a Gregorian calendar). (2) Land–sea masks can differ between the parent atmospheric grid and the child ocean grid. This is especially problematic when a coarse parent grid is naively interpolated onto a finer child grid. The mismatch in coastlines results in misrepresentation of near-coast heat fluxes and sea breeze dynamics but can be alleviated using flood-filling techniques whereby extrapolation and interpolation are applied separately to land points and sea points, though problems can still arise if the child grid has islands that simply do not exist in the parent grid. Finally, subtle differences in the atmospheric data expected by the bulk formulae are a common source of error (e.g. reference levels, specific versus relative humidity, net versus downward long-wave and short-wave radiation). So, as is often the case when re-purposing a complex system by modification, “trust but verify”. A worked example is given in the SEVERN-SWOT repository using ERA5 surface forcing data (data access: https://www.ecmwf.int/en/forecasts/dataset/ecmwf-reanalysis-v5, last access: 9 February 2023). The documentation includes these global data being cut down and manipulated for use in forcing a regional configuration and then running the model with one-way meteorological forcing.

3.6.2 Two-way atmospheric coupling

In regional two-way coupled atmosphere–ocean models, the information transferred to the ocean from the atmosphere is essentially very similar to that provided in a one-way forced set-up, when the fluxes are known. The solar and non-solar surface heat flux, mean sea level pressure and freshwater flux are transferred as well as the momentum fluxes from atmosphere to ocean (Lewis et al., 2019a). Then either the surface temperature, surface currents or both can be sent back to the atmosphere. The variables are exchanged between both models via a coupler such as OASIS3-MCT (Valcke et al., 2015) and interpolated between the two grids, typically using first-order conservative interpolation for scalars and bilinear interpolation for vector fields.

The coupling frequency can be optimised by considering the region over which the model is being run and the features and dynamics that dominate that area. However, it must be set to a value larger than the model time step. Wang et al. (2015) use a 3 h coupling frequency for their climate atmosphere–ocean model located over the Baltic and North seas, but Zhao and Nasuno (2020) found that a coupling frequency of hourly or sub-hourly better reproduced the sea surface temperature and consequently stronger convection during the passage of the Madden–Julian Oscillation. In the regional coupled suite RCS-IND1 (Castillo et al., 2022), an hourly coupling frequency was used to capture the temperature diurnal cycle; however, options to move to using a 10 min coupling frequency are mentioned, as this might prove beneficial for modelling rapidly changing conditions as in squalls and tropical cyclones.

There is a risk that coupled atmosphere–ocean models may become unstable and drift when run over long periods of time due to the feedbacks between both models. To constrain the drifts, nudging or weakly coupled assimilation may be required. However, not all models require corrections, the decadal-scale run carried out by Wang et al. (2015) and the 100-year simulation in Primo et al. (2019) being examples of this. Alternatively, mixed two-way and one-way forcing approaches can be applied if either coupling or direct forcing is not appropriate for the entire domain.

3.7 Terrestrial river forcing

This aspect of configuring a regional model is uniquely challenging: river flow data typically come from gauges, which are typically far upstream from the model's coastal grid point, or from hydrological models, where the data are gridded but not necessarily at fine enough resolution for the target application (e.g. many global products have a ∘ resolution). Pre-processing freshwater data can be particularly time-consuming, so it is worth giving careful consideration to design choice options at the outset. Where possible, consistent atmospheric precipitation and riverine data are preferred for consistent freshwater budgets. For example, the JRA-55 (Tsujino et al., 2018) and COREv2 (Large and Yeager, 2009) datasets have an accompanying freshwater river dataset. However, while consistent forcing is desirable, a dataset with a range of consistent variables may have lower accuracy than e.g. a region-specific flow-only dataset. In some strongly forced applications, forcing accuracy in specific variables may be more important than consistency across all forcing variables. See the SANH repository for an example that generates river forcing from different sources and Sect. 4.1.3 for specific guidance on constructing riverine biogeochemical fluxes.

Having identified the data sources and the corresponding model grid points for freshwater inputs (itself a heavily labour-intensive exercise that defies straightforward automation), there are further choices to be made regarding the implementation. (i) How is the freshwater distributed horizontally? If the coastal outflow is a river delta, the freshwater load should be distributed between the tributary channels. Similarly, if the volume flux is large and baroclinic dispersal processes are not resolved, then unrealistic freshwater lenses can accumulate at the coastline. This can also be remedied by redistributing the freshwater flux across neighbouring grid cells. (ii) How is the freshwater distributed vertically? The freshwater can be vertically mixed to a specified depth or enter as a surface plume. (In our mid-latitude and tropical applications with biogeochemistry, we typically mixed the freshwater over the top 10 m for numerical stability.) Increasing vertical diffusion at river points can be used to compensate for unresolved estuarine mixing. (iii) What are the salinity and temperature of the river water? The default implementation is often to add freshwater (zero salinity) at the temperature of either the sea surface or the atmosphere; however, better results are likely if observed or river model values are used. These choices can be adjusted to achieve target temperature and salinity characteristics of the plume. To date there has been no accommodation for groundwater fluxes, though these can be considerable (Zektser and Loaiciga, 1993). Finally, validation to ensure accurate estuary-mouth forcing is challenging. Satellite salinity has a resolution of approximately 35–50 km for the Soil Moisture and Ocean Salinity (SMO) and 100–150 km for the Aquarius satellites, whereas in situ measurements capture plume features (and freshwater intensifications) at scales O(10 m) that are much finer than the resolved scale but that lack spatial coverage. Where possible, use should be made of near-coastal buoy and survey data, but in the absence of this we must settle for far-field validation against hydrography, accepting that this conflates the effects of circulation and surface forcing.

The worked example in the SEAsia repository details how rivers fluxes were taken from the JRA-55 dataset (Tsujino et al., 2018) and mapped to the nearest coastal grid point. Subtleties for large rivers include (i) avoiding placement of domain boundaries near large river outflows and (ii) laterally spreading the coastal source points to represent deltas and also to avoid unrealistic numerical issues if outflow values are locally too large.

3.8 High-performance computing: decomposition and optimisation

Modelling frameworks like NEMO are equipped to be run on high-performance computing machines. This is facilitated by the optional abstraction of input–output procedures (XIOS) from the dynamical model (NEMO). These can then be separately optimised for the target machine and, crucially, most I/O to disk can be overlapped with the continuing computational tasks. Most HPC platforms will have multiple nodes, each comprising a number of CPU cores and some shared memory. However, NEMO and XIOS have different computation and memory requirements. Making the best use of each HPC platform can be further complicated by the service's charging algorithm and possible non-linearities in performance scaling arising from subtleties in hardware design. Nevertheless, when configuring a simulation, there are only two sets of fundamental considerations.

-

How many compute cores will be allocated to the dynamical core and how many cores should be assigned to each node? Each core will have a roughly equal grid cell fraction of the surface map of the whole domain (parallelised sub-domains contain the full water column).

-

How many cores should be dedicated to the XIOS processes, and how many cores should be assigned to each node?

Some general guidance can be offered to address these questions.

-

Computational resource splitting. The best ratio of XIOS to NEMO cores depends on the volume of data to be written. Since this is configurable at run time via the XML files, some care is needed to provide the XIOS processes with sufficient resources to cope with any expected variations. Though there are no easy answers as to how to optimise domain decomposition, a starting point would be to allocate cores between NEMO and XIOS in a ratio of . Often, fewer XIOS cores are required, but each XIOS process is likely to require access to more memory than each ocean core. Typically, this is achieved by running XIOS cores sparsely populated on dedicated XIOS nodes or by using options to control placement of processes to CPUs on nodes running a mix of XIOS and ocean cores. The best solution will be hardware-specific. For example, in a system with four NUMA regions per node, you may choose to place one XIOS process alone in the first NUMA region (thereby giving it access to the maximum amount of shared memory) and to fully populate the remaining NUMAs with ocean processes. Performance optimisation of the NEMO-to-XIOS decomposition comes with experimentation. Timing information provided in the