the Creative Commons Attribution 4.0 License.

the Creative Commons Attribution 4.0 License.

A nested multi-scale system implemented in the large-eddy simulation model PALM model system 6.0

Antti Hellsten

Klaus Ketelsen

Matthias Sühring

Björn Maronga

Christoph Knigge

Fotios Barmpas

Georgios Tsegas

Nicolas Moussiopoulos

Siegfried Raasch

Large-eddy simulation (LES) provides a physically sound approach to study complex turbulent processes within the atmospheric boundary layer including urban boundary layer flows. However, such flow problems often involve a large separation of turbulent scales, requiring a large computational domain and very high grid resolution near the surface features, leading to prohibitive computational costs. To overcome this problem, an online LES–LES nesting scheme is implemented into the PALM model system 6.0. The hereby documented and evaluated nesting method is capable of supporting multiple child domains, which can be nested within their parent domain either in a parallel or recursively cascading configuration. The nesting system is evaluated by first simulating a purely convective boundary layer flow system and then three different neutrally stratified flow scenarios with increasing order of topographic complexity. The results of the nested runs are compared with corresponding non-nested high- and low-resolution results. The results reveal that the solution accuracy within the high-resolution nest domain is clearly improved as the solutions approach the non-nested high-resolution reference results. In obstacle-resolving LES, the two-way coupling becomes problematic as anterpolation introduces a regional discrepancy within the obstacle canopy of the parent domain. This is remedied by introducing canopy-restricted anterpolation where the operation is only performed above the obstacle canopy. The test simulations make evident that this approach is the most suitable coupling strategy for obstacle-resolving LES. The performed simulations testify that nesting can reduce the CPU time up to 80 % compared to the fine-resolution reference runs, while the computational overhead from the nesting operations remained below 16 % for the two-way coupling approach and significantly less for the one-way alternative.

- Article

(18551 KB) - Full-text XML

- BibTeX

- EndNote

Large-eddy simulation (LES) has been used for basic research of atmospheric boundary layer (ABL) phenomena using idealized model setups for decades. At present it is becoming an important method in applied research on realistic, very detailed, and complicated flow systems such as urban ABL problems (Britter and Hanna, 2003; Tseng et al., 2006; Bou-Zeid et al., 2009; Tominaga and Stathopoulos, 2013; Giometto et al., 2016; Buccolieri and Hang, 2019; Auvinen et al., 2020a). Until recent years, there were no ABL LES models capable of modeling detailed surface structures, such as buildings or steep complex terrain shapes in the ABL. Nowadays, it is possible to carry out LES for complex built areas (e.g., Letzel et al., 2008), but this is still limited to relatively small areas because of the high spatial resolution requirement. Concerning urban LES, Xie and Castro (2006) have shown that at least 15 to 20 grid nodes are needed across street canyons to satisfactorily resolve the most important turbulent structures within the canyons. This requirement typically leads to grid spacings on the order of 1 m. However, the vertical extent of the LES domain should scale with the ABL height, and the horizontal size should span over several ABL heights in order to capture the ABL-scale turbulent structures (de Roode et al., 2004; Fishpool et al., 2009; Chung and McKeon, 2010; Auvinen et al., 2020a). To adequately capture processes on the street scale and to simultaneously capture large ABL-scale turbulence, sufficiently large model domains at small grid sizes are required, posing high demands on the computational resources in terms of CPU time and memory. Moreover, the uncertainty related to the lateral boundary conditions usually decreases as the domain is made larger.

Many conventional continuum-based numerical solution methods (e.g., finite-element and finite-volume methods) allow variable resolution so that the resolution can be concentrated to the area of principal interest and relaxed elsewhere. However, only unstructured grid systems allow full advantage to be taken of spatially variable resolution. Many general-purpose computational fluid dynamics packages offer unstructured grid systems, but according to our experience such solvers are usually computationally decidedly less efficient than ABL-tailored LES models, such as PALM (Raasch and Schröter, 2001; Maronga et al., 2015, 2020), the Weather Research and Forecasting Model (WRF) (Skamarock et al., 2008) with its LES option, and the Dutch Atmospheric Large-Eddy Simulation (DALES) (Heus et al., 2010) that are based on structured orthogonal grid system with constant horizontal resolution. The model nesting approach can be exploited to further speed up ABL LES models or to allow larger domain sizes without compromising the resolution in the area of primary interest. The authors acknowledge that alternative solution approaches, such as lattice Boltzmann method (e.g., Aidun and Clausen, 2010; Ahmad et al., 2017), also offer strategies for computational efficiency improvements. The presented nesting approach is primarily relevant for the family of structured, finite-difference Navier-Stokes solvers.

The idea of grid nesting is to simultaneously run a series of two or more LES model domains with different spatial extents and grid resolutions. In this implementation the outermost and coarsest-resolution LES domain (termed “root” domain henceforth), which acts as a “parent” to its “child” domains, obtains its boundary conditions in a conventional manner, whereas the nested LES domain (child) always obtains its boundary condition from its respective parent domain through interpolation. In one-way coupled nesting only the children obtain information from their parents. In such a coupling strategy, the instantaneous child and parent solutions can deviate within the volume of the nest. If a stronger binding between the solutions is desired, the child solution needs to be incorporated into the parent solution. This is achieved in two-way coupled nesting, where the parent solutions are influenced by their children through so-called “anterpolation” (Clark and Farley, 1984; Clark and Hall, 1991; Sullivan et al., 1996). The term anterpolation was coined by Sullivan et al. (1996), although the concept is older.

The child-to-parent anterpolation can be implemented using, for instance, the post insertion (PI) approach (Clark and Hall, 1991), where the parent solution is replaced by the child solution within the volume occupied by both domains. In practice, some buffer zones where anterpolation is omitted are necessary near the child boundaries to avoid growth of unphysical perturbations near the child boundaries (Moeng et al., 2007). An example of a two-way coupled nesting implemented in the WRF-LES model is given by Moeng et al. (2007), and later successfully applied to a stratocumulus study by Zhu et al. (2010). The WRF-LES nesting system can also be used in one-way coupled mode (Mirocha et al., 2013), and it has been applied in this way, e.g., to a complex terrain study (e.g., Nunalee et al., 2014; Muñoz-Esparza et al., 2017) and to an offshore convective boundary layer study (Muñoz-Esparza et al., 2014). Subsequently, Daniels et al. (2016) introduced a vertical interpolation into the WRF model, but this method is restricted to one-way coupled nesting. Recent implementation of an immersed boundary method has made WRF-LES with a one-way coupled nesting approach applicable to obstacle-resolving LES (Wiersema et al., 2020). In addition to WRF-LES, the numerical weather prediction model ICON (Zängl et al., 2015) features an LES mode and includes an online nesting capability (Heinze et al., 2017). However, due to its terrain-following coordinate system, ICON-LES cannot resolve sharp obstacle structures.

Recently, Huq et al. (2019) implemented a purely vertical nesting system into PALM in which the child and parent domains are required to have the same horizontal extent. Although this approach is useful, e.g., when the grid resolution near the surface needs to be refined to better capture the atmosphere–surface exchange, the requirement of equal horizontal domain extensions poses high demands on the computational resources, limiting this approach to only academic studies. This implementation is also limited to have a single child domain only. For these reasons, we decided to develop the present, more general, fully three-dimensional nesting system in PALM. It can also be run in a pure vertical nesting mode.

One-way coupled obstacle-resolving LES has been applied to a built environment by Nakayama et al. (2016) and by Vonlanthen et al. (2016, 2017). The present PALM implementation has also already been demonstrated by Maronga et al. (2019, 2020) and applied to obstacle-resolving urban studies (Kurppa et al., 2019, 2020; Auvinen et al., 2020a; Karttunen et al., 2020) using one-way coupling. At present we are not aware of any research on obstacle-resolving LES in the ABL context employing a two-way coupled nesting approach. Through our studies, we have observed that the application of two-way coupling in obstacle-resolving LES can become problematic. Therefore, in addition to documenting and evaluating the newly implemented nesting method in the PALM model, this paper addresses the applicability of the two-way coupled nesting approach in obstacle-resolving LES.

The paper is organized as follows. Section 2 gives a brief description of the LES mode of the PALM model system 6.0. Section 3 presents the technical, algorithmic, and numerical aspects of the implemented nesting. In Sect. 4 the implemented nesting is evaluated for a series of test cases featuring different kinds of boundary layer flow. Finally, Sect. 5 summarizes the results and gives and outlook of future developments.

The PALM model system (Raasch and Schröter, 2001; Maronga et al., 2015, 2020) is based on the non-hydrostatic, filtered, Navier–Stokes equations in a Boussinesq-approximated or anelastic form. It solves the prognostic equations for the conservation of momentum, mass, energy, and moisture on a staggered Cartesian Arakawa-C grid. Subgrid-scale turbulence is parameterized using a 1.5-order closure following Deardorff (1980) in the formulation of Saiki et al. (2000). In its standard configuration, PALM thus has seven prognostic quantities: the velocity components ui (where ), the potential temperature θ, specific humidity qv, a passive scalar s, and the subgrid-scale (SGS) turbulent kinetic energy e. By default, discretization in time and space is achieved using a third-order Runge–Kutta scheme following Williamson (1980) and a fifth-order advection scheme following Wicker and Skamarock (2002). The horizontal grid spacing is always equidistant, whereas it is possible to use variable grid spacing in the vertical direction. Often, the vertical grid spacing is set equidistant within the boundary layer, and stretching is applied above the boundary layer to save computational time in the non-turbulent free atmosphere. At the lateral boundaries cyclic conditions or more advanced in- and outflow conditions can be employed.

Both the Boussinesq and the anelastic approximation require incompressibility of the flow. To provide this feature a predictor–corrector method is used where an equation is solved for the modified perturbation pressure after every Runge–Kutta sub-time step (e.g., Patrinos and Kistler, 1977). The method involves the calculation of a preliminary prognostic velocity. Divergences in the flow field are then attributed solely to the pressure term, leading to a Poisson equation for the perturbation pressure. In the case of cyclic lateral boundaries, the Poisson equation is solved by using a direct fast Fourier transform (FFT) method. However, in the case of non-cyclic boundary conditions, an iterative multigrid scheme is used (e.g., Hackbusch, 1985).

Parallelization of PALM is achieved by using the message passing interface (MPI, e.g., Gropp et al., 1999) and a two-dimensional (horizontal) domain decomposition.

PALM offers several embedded models to simulate physical processes within the urban environment. Namely, without the intention of providing an exhaustive list, this embraces a land surface (Gehrke et al., 2020) and a building surface model (Resler et al., 2017) to consider the surface–atmosphere exchange of heat and moisture, a radiative-transfer model (Krč et al., 2020) to include complex three-dimensional mutual radiative interactions between surfaces and plants, an indoor and building energy demand model, an aerosol (Kurppa et al., 2019) and an air chemistry model (Khan et al., 2020), and an embedded Lagrangian particle model for dispersion. For a complete overview we refer to Maronga et al. (2020).

3.1 General concept

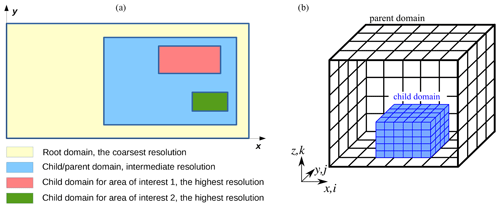

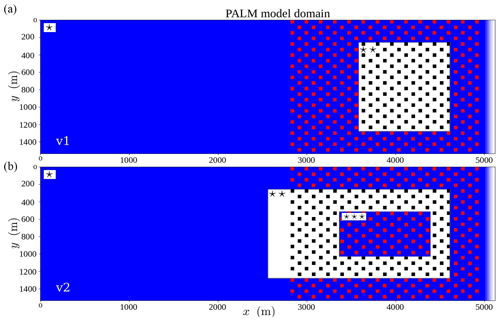

The nesting system we have developed is based on the concept of parent and child domains. Each parent domain can enfold multiple child domains, but a child domain can, naturally, only have one parent domain. The top-level domain, also called the root domain, acts as a parent domain to child domains at the first nesting level. The child domains at first nesting level might have subsequent child domains for which they then act as parent domains (cascading arrangement); see Fig. 1. Our nesting system allows for up to 63 nested domains plus the root domain. The implementation requires that all child domains are always completely located inside their respective parent domain. In addition, the grid spacings of a child domain naturally have to be smaller than the grid spacings of its parent domain. The grid-spacing ratios must always be integer-valued, although different ratios may be used in different directions. Therefore, in nested runs the grid stretching is only allowed in the root domain and only above the top boundary of the highest nested domain. There may be multiple child domains at the same nesting levels, but overlapping child domains at the same nesting level are not permitted. Finally, all the nest domains have to be surface-bound, meaning that elevated child domains are not allowed. Time synchronization is taken care of by simply selecting the minimum of the time steps determined by each model independently and broadcasting this time step value for all models. Each model inputs and outputs in the same way.

Figure 1A schematic example of a nested configuration involving both cascading and parallel child domains is shown on x–y plane on the left-hand side. On the right-hand side, a three-dimensional view of a nested child domain inside its parent domain is shown.

In general, the system is designed as two-way coupled nesting, in which a child domain can affect its parent domain and vice versa. It is possible, however, to run the system in a one-way coupled mode where no feedback from the child domain is incorporated in its parent domain. Moreover, it is possible to use the system as a pure vertical one-dimensional nesting, where the lowest part of the model (e.g., the atmospheric surface layer where the dominant turbulent eddies are usually very small) can be run as a child domain with finer grid spacing than its parent domain that compasses the entire boundary layer. In the case of pure vertical nesting, cyclic boundary conditions must be set on all the lateral boundaries. Unlike the method proposed by Huq et al. (2019), the present method also allows a cascade of more than one child domain in the pure vertical nesting cases.

The present nesting approach is a variant of the PI method, in which the communication between each parent–child couple is realized via interpolations (from parent to child) and anterpolations (from child to parent) after each Runge–Kutta sub-step and just before the pressure solver. The latter then ensures that mass conservation is enforced in the anterpolated solution in the parent domain.

3.2 Restrictions

The current implementation poses a few restrictions for the nested setups. Moreover, the interpolation and anterpolation methods, which are discussed in the following sections, are based on certain assumptions, e.g., on the grid line matching between parent and child domains leading to a few more restrictions. Altogether these restrictions are as follows:

-

the child domain must always be completely inside its parent domain, and there must be a margin of four parent grid cells between the boundaries of child and parent domains;

-

parallel child domains must not overlap each other, and there must be a margin of at least four child grid cells between two parallel child domains;

-

the domain decomposition of all child domains must be such that the sub-domain size is never smaller than the parent grid-spacing in the respective direction;

-

buildings or any other topography or geometry must not reach the child domain top;

-

all the grid-spacing ratios must be integer-valued;

-

the outer boundaries of child domains must match with grid planes in its parent domain;

-

no grid stretching is allowed in the child domains, and in root domain it is allowed only above the top boundary of the highest child domain.

3.3 Structure of the nesting algorithm

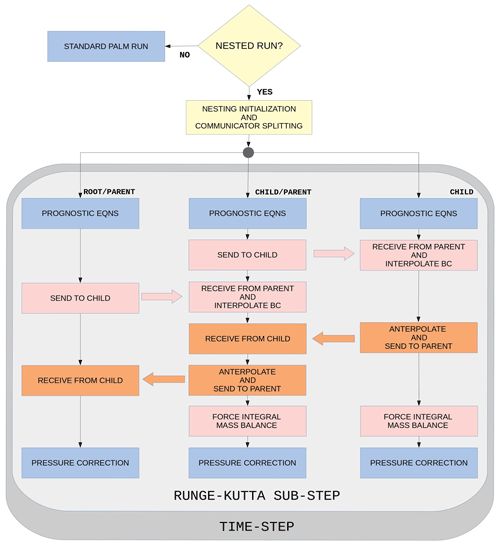

Ideally, the coupling actions, i.e., data transfers between the domains, anterpolation, and interpolation, would be performed after the pressure-correction step using the final divergence-free velocity field on both parent and child. To achieve this in the context of the pressure-correction method employed in PALM requires a staged arrangement of the coupling actions such that a child first sends data to its parent and after receiving the data the parent anterpolates and performs the pressure correction step. After the pressure-correction step the parent sends data to the child, which interpolates new boundary conditions from the received data and performs the pressure-correction step. The purely vertical nesting method implemented in PALM by Huq et al. (2019) features this kind of staged structure. However, Huq et al.'s method may lead to excessive waiting times as the child has to wait until the parent performs the pressure-correction step and vice versa. Moreover, the staged coupling approach becomes more complicated and more inefficient when a cascade of several nested domains is used. Therefore, Huq's implementation allows for only one child domain. The possibility to employ cascades of child domains was an initial requirement for the present system design, and therefore the staged coupling arrangement had to be abandoned. In principle, it would be possible to perform the pressure-correction step twice,: the first time before the coupling actions for all domains, and the second time for all parent domains after the coupling to make the anterpolated fields divergence free. However, this would be computationally very expensive, severely compromising the benefits from the nesting. This is because the pressure solution is typically the most time-consuming part of the solution process. To avoid this extra penalty, the coupling is based on the preliminary prognostic velocity fields upre in the present implementation. The sequence of the coupling actions is illustrated in Fig. 2. This choice has the consequence that the interpolated velocity boundary conditions for a child domain may violate the global mass balance over the child domain such that

where the tilde symbol is the interpolation operator and n is the unit inner surface normal vector of the child domain boundary S excluding the bottom boundary. This mass-conservation error, though typically small, is eliminated in an integral sense by adding a constant velocity correction on each boundary l∈{left, right, south, north, top}

to the interpolated child boundary values to exactly eliminate the global mass-balance error in Eq. (1). In the case of a purely vertical nesting mode, the correction is applied only on the top boundary and S only spans over it. This correction is made for all child domains right before the pressure-correction step. According to our tests, Δupre is typically 3 or 4 orders of magnitude smaller than the dominant velocity scales of the flow.

Figure 2Flow chart illustrating the nesting actions in the case of three domains in cascading order. In the case of more than three domain levels, more branches similar to the current middle branch would be added. Blue boxes represent baseline PALM actions, while the other colors indicate nesting-specific actions. In one-way coupling only the actions indicated by pink color are invoked.

Huq et al. (2019) showed results for a zero mean-wind convective boundary layer (CBL) case. In this case, especially if the nest-top boundary is set on a relatively low level, unphysical overestimation of horizontal velocity component variances easily develop if the coupling is based on upre. Huq et al. (2019) showed that using the staged sequence of coupling actions, allowing the coupling based on the final velocity field u, mostly removes the overestimation of the horizontal velocity variances. We have confirmed this by temporarily modifying the current implementation to adhere to Huq et al.'s staged arrangement and simulating a vertically nested zero mean wind CBL case similar to Huq et al.'s test case.

In the present method, the overestimation of the horizontal velocity component variances can be mostly avoided by using the integral mass-balance forcing (Eq. 2) and further by setting a narrow buffer zone below the top boundary in which the anterpolation is not performed. This is described in more detail in Sect. 3.5.

In addition to the velocity field, all other prognostic variables are also coupled, except for the SGS turbulent kinetic energy (e), as it depends on the resolution by definition and therefore is not straightforward to couple between parent and child domains that have different resolutions. The anterpolated values should be increased by some unknown resolution-dependent factor, and the interpolated values should be reduced accordingly. e strongly follows the velocity gradient field, and therefore it tends to adapt to the anterpolated velocity field on the parent side during the next Runge–Kutta step without being anterpolated itself. Relying on this reasoning, we omit the anterpolation of e. Moreover, we assume that the local generation of e often dominates its advection, implying that replacing the interpolation of its child boundary values with simple zero-gradient conditions may be acceptable. In our numerical tests we compared the zero-gradient conditions with interpolated boundary values reduced by an estimated resolution-difference-dependent factor. The comparisons revealed only negligible differences in the results.

Further technical implementation issues are discussed in Appendix A.

3.4 Interpolation (parent to child)

3.4.1 Emphasis on conservation properties

Conservation of fluxes through the nest boundaries is an essential condition for a nesting algorithm. By flux conservation we mean that the total flux through a nest–domain boundary is equal to the total flux through the corresponding plane in the parent domain. This must not be confused with the mass-conservation error discussed in Sect. 3.3 Eq. (1), which results from the fact that the nest boundary conditions are interpolated from the preliminary velocity field instead of the final divergence-free velocity field.

Earlier studies by Kurihara et al. (1979) and Clark and Farley (1984) focused mostly on conservation of prognostic variables but not on conservation of their advection fluxes. Later, Sullivan et al. (1996) and Zhou et al. (2018) also paid attention to conservation of fluxes, which according to our observations is very important. For example, if no attempt is made to minimize the flux-conservation errors of the velocity components on the nested boundaries, the mean flow angle across the whole system of domains may become significantly deflected. We observed this unacceptable phenomenon in an early phase of this work while experimenting with an interpolation scheme that produces non-negligible flux-conservation errors. Therefore, we construct the interpolation method such that the flux conservation errors on the nested boundaries are minimized. In our view, the conservation properties are far more important than local accuracy at the nest boundaries. It will be shown in Sect. 3.4.2 that zeroth-order interpolations are favorable in terms of conservation properties, although their local accuracy is obviously lower than those of higher-order interpolations. Here, it should be noted that increasing the order of interpolation accuracy on the child boundary planes is irrelevant because in all cases the solution requires a development zone (i.e., a border margin) as it adapts to the increased resolution. Therefore, a low-order interpolation method with acceptable conservation properties should be preferred.

In principle, the most straightforward way to satisfy flux conservation is to directly use the flux on the parent grid cell face on the nested boundary and to distribute it onto the underlying child grid cell faces akin to the finite-volume method. However, PALM is based on the finite-difference method, and thus its architecture does not support this method. Therefore, it is necessary to construct an interpolation procedure that is at least approximately flux-conservative.

3.4.2 General conservation considerations

Before laying out the new interpolation procedure in Sect. 3.4.3, the earlier developments are reviewed, and their merits and weaknesses are discussed in this section.

We first consider the work by Kurihara et al. (1979), who required conservation of prognostic variables in the form

where ΔSI and Δsi are the face areas of the parent and child grid cells on the nested boundary and . Here the child variables and indices are denoted by lowercase letters and the parent variables and indices are indicated by uppercase letters. Clark and Farley (1984) later applied this condition to both interpolation and anterpolation and called it the reversibility condition. The reversibility condition can also be written as

where the tilde is the interpolation operator and the hat is the anterpolation operator. Based on this condition, Clark and Farley (1984) derived a quadratic interpolation scheme that forms a reversible pair with their anterpolation scheme. This reversibility guarantees the mass-flux conservation (in incompressible flows). However, it does not guarantee conservation of other fluxes, consisting of products of the advected variable and advective velocity component. As recently noted by Zhou et al. (2018), the conservation of other fluxes is violated if both advective velocity component and advected variable are interpolated using the Clark and Farley scheme. Although not mentioned by Zhou et al. (2018), this actually applies to any interpolation scheme higher than the zeroth order due to the nonlinearity of the advection terms. Instead of applying the reversibility requirement, Zhou et al. (2018) require global conservation of both prognostic variable ϕ and its resolved-scale turbulent flux through the boundary as

where the superscript (N) refers to a boundary-normal velocity component and 〈⋅〉b denotes spatial averaging over the child domain boundary. It is straightforward to show that if Eq. (5) holds, the flux conservation condition Eq. (6) can be also be written for the total flux as

We shall study the flux conservation using this condition instead of Eqs. (5) and (6).

In order to ensure flux conservation for all prognostic variables, Zhou et al. (2018) chose to only use the Clark and Farley method for the advective velocity component, and for all advected variables they used the simple zeroth-order method:

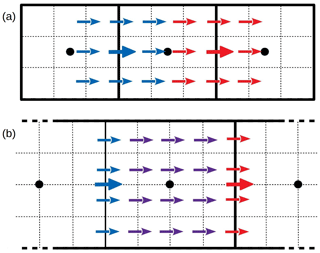

Obviously this zeroth-order interpolation also satisfies the reversibility condition Eq. (4) in addition to Eq. (5). This choice readily satisfies the flux-conservation condition for all variables. However, in cases with even-valued grid-spacing ratios, Eq. (8) cannot be applied to the velocity components that are in staggered positions relative to u(N) and U(N) on the boundary plane. These velocity components are denoted by u(S) and U(S). Equation (8) is not applicable in this case because the odd number of child grid u(S) nodes cannot be evenly associated to the surrounding U(S) nodes; see Fig. 3. The method by Zhou et al. (2018) is indeed strictly limited to odd-valued grid-spacing ratios.

Figure 3Staggered velocity component nodes in cases of odd (3) (a) and even (4) (b) grid-spacing ratios. The staggered velocity component nodes are shown as arrows: thick arrows are for the parent grid, and thin arrows are for the child grid. The parent scalar grid cell faces are drawn with solid lines, and the corresponding child grid cells are drawn with dotted lines. Locations of the corresponding parent grid scalar nodes are shown as black dots. The blue color indicates the left-hand parent grid node, and red indicates the right-hand node. In (a) the child grid values could be obtained from Eq. (8), which cannot be applied to (b). The violet-colored child grid nodes in (b) receive the averaged values according to Eq. (9).

3.4.3 Construction of an approximately flux-conservative method

In our view, the limitations of the method by Zhou et al. (2018) are too restrictive. However, we were not able to find any alternative zeroth-order interpolation that would exactly satisfy the condition Eq. (7) for u(S) and also be applicable to even-valued grid-spacing ratios. Instead, we found a zeroth-order interpolation that approximately satisfies the condition Eq. (7) for u(S), as will be shown below. This interpolation reads as follows:

This interpolation is also applicable for u(S) in cases of even-valued grid-spacing ratios since the child grid nodes between the parent grid nodes need not to be associated with the surrounding parent grid nodes, see Fig. 3.

In another deviation from the Zhou et al. (2018) method, we do not employ the quadratic scheme of Clark and Farley (1984) at all. The reason is that in PALM the interpolation algorithm has to cope with complex geometries, and the application of such a complicated wide stencil interpolation scheme might become excessively cumbersome. Instead, we use Eq. (8) for the advective velocity component u(N), Eq. (9) for the advected components u(S), and Eq. (8) for all other advected variables. Equation (9) is also used for the staggered velocity components in cases of odd-valued grid-spacing ratios even though Eq. (8) would also be applicable in such cases.

As stated above, the flux conservation condition is satisfied approximately for u(S) for both odd- and even-valued grid-spacing ratios by using Eq. (9) for u(S) and Eq. (8) for u(N). As an example and for the sake of clarity (but without any loss of generality), we demonstrate the spatially averaged fluxes of v and V components for a nested domain boundary and assume that the boundary-normal direction is x. The velocity components in the x direction are u and U. Within the advection scheme, the advective u velocity for the flux is interpolated linearly to the flux point of v as . This becomes simply UJ−1 for those child flux points of v that are located between VJ−1 and VJ and for those v flux points coinciding with VJ. Let us begin by applying the chosen interpolation technique to an extremely simplified example with only two U grid cells along the left nest boundary. Then the y-averaged flux 〈uv〉y (we omit the z averaging at this stage) consists of only one V node J in the parent grid. Let the integer-valued grid-spacing ratio in the y direction be Ry=4. We also omit the SGS fluxes, which are assumed to be small, as in the entire discussion above. With these choices, 〈uv〉y consists of seven child grid nodes as follows:

The first term represents fluxes from those child flux points lying between VJ−1 and VJ, the second term is the flux at the child flux point coinciding with VJ, and the last term is a similar contribution as the first term but from the child flux points lying between VJ and VJ+1. Equation (10) can be rearranged as follows:

where we can identify the factor 4 in the inner term as Ry and the factor 3 in the edge terms as Ry−1. This can be generalized to an arbitrary integer Ry and to an arbitrary number of parent grid nodes across the boundary . By doing so, and by also incorporating the z averaging, we obtain

On the parent grid, the correspondingly averaged advection flux of V is (as expanded by Ry for easier comparison)

Here, and is the vertical parent grid index range covering the nest boundary. Clearly Eqs. (12) and (13) are not exactly equal because of the additional edge terms in Eq. (12) containing and , and because the denominator of Eq. (12) deviates from RyNyNz. It is important to note, however, that 〈uv〉b−〈UV〉b tends towards zero as Ny becomes large. In typical applications, the order of magnitude of Ny is hundreds, making the flux conservation error negligibly small. Moreover, if we can assume that

which is a reasonable assumption in many cases, then , even with small values of Ny.

3.4.4 Effects of the advection scheme on flux conservation

Above, the flux conservation was discussed generally without taking into account the effects of the actual discretization scheme employed in the advection algorithm. In this subsection, a mismatch of the advection term approximations on the child and parent sides in PALM is first identified and discussed, and subsequently a method to reduce the flux-conservation error resulting from this mismatch is proposed.

In Sect. 3.4.3 it was assumed that the advected parent grid variable values on the child boundary (VJ,K in Eqs. 12 and 13) are equal to the values used for the fluxes in the advection term computation. This is not the case in PALM, as they are interpolated onto their flux points using the fifth-order scheme by Wicker and Skamarock (2002). Here, it is important to understand that the interpolation onto the flux point in the advection scheme is a separate procedure from the parent-to-child interpolation, and it is performed in a different phase of the time step cycle (Fig. 2) in the prognostic equation step. In PALM, the fifth-order interpolation is not employed at the boundaries (except at the cyclic boundaries), instead a so-called advection scheme degradation procedure is utilized. The degradation procedure entails degrading the flux-point interpolation within the advection scheme on first two layers of nodes. The first-order upwind scheme is applied on the first layer, and the third-order Wicker and Skamarock (2002) scheme is applied for the second layer. This way, only one layer of boundary ghost points is needed at the boundary. Technically, three grid layers are allocated in PALM for boundary ghost points, but using all of them at child boundaries would lead to no gain in accuracy as the second and third layers would be just copies of the first layer due to the zeroth-order parent-to-child interpolation.

The first-order upwind scheme makes the advected values on child-boundary flux points independent of the child solution itself if the local flow direction is into the child domain. This is important from a flux-conservation point of view as the flux into the child domain should be entirely controlled by the parent solution. On the other hand, the first-order upwind scheme leads to values on the child boundary flux points that may differ from those on the corresponding grid plane on the parent side as those are interpolated with the fifth-order scheme. Therefore, additional flux-conservation errors may be generated.

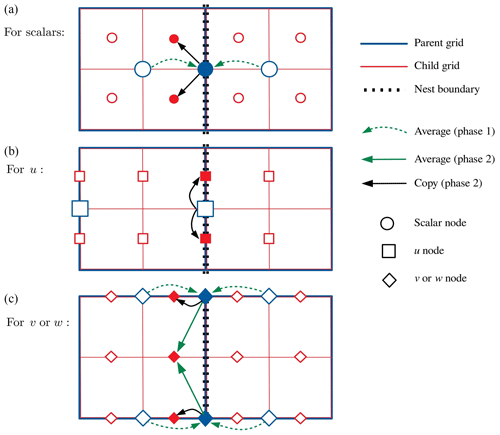

We have not found any way to totally eliminate the resulting additional flux-conservation error, but we can reduce it in the following way. Instead of using the original parent grid values in the parent-to-child interpolation, we replace them with values pre-interpolated (Fig. 4, phase 1) onto the parent flux points using a scheme that is higher than the first order and use these values in the parent-to-child interpolation. From here on, we refer to this pre-interpolation as transfer to boundary plane (TBP). As a result, the formally first-order upwind advection scheme becomes the selected higher-order scheme if the local flow direction is into the child domain.

Figure 4A schematic illustration of the interpolation operations on the left child boundary as an example. The child grid nodes on the left side are boundary ghost nodes. Phase 1 operations belong to the TBP, and phase 2 operations belong to the actual parent-to-child interpolation using Eqs. (8) and (9). Filled blue symbols denote intermediate values on the boundary plane (flux plane) resulting from the TBP. Filled red symbols denote the final boundary condition values for the child boundary. In this example, u is u(N), while v and w are u(S).

The TBP must not employ more than one parent grid layer behind a child domain boundary because the child has no information about the parent domain geometry outside the first parent grid layer. An interpolation stencil reaching further away could penetrate a vertical wall leading to erroneous interpolation. Therefore, the best available choice is to simply use the average of the parent grid values on both sides of the child domain boundary, i.e., a second-order interpolation. Obviously it is different from the fifth-order scheme, but we argue that the difference between values interpolated onto the boundary plane using the fifth-order and second-order schemes can be expected to be smaller than the difference between those interpolated using the fifth-order and first-order schemes. Our numerical tests support this argument.

On the top boundary there is no geometry, and hence we can use a wider TBP stencil there. We ended up using the third-order Wicker and Skamarock (2002) scheme there for the TBP because in our numerical tests it yielded almost indistinguishable results from the more complicated and more communication-intensive fifth-order scheme. Note that the TBP reduces the flux-conservation error only on those boundary regions where the flux is into the child domain.

The sequence of interpolation operations is illustrated in Fig. 4 using the left child boundary as an example. Phase 1 operations belong to the TBP, and phase 2 operations belong to the actual parent-to-child interpolation using Eqs. (8) and (9). Note that TBP is not applied to the boundary-normal velocity component u(N).

We evaluated the flux-conservation error in a simple test run modeling a horizontally homogeneous slightly convective boundary layer over flat terrain with capping inversion at z=450 m. The constant kinematic surface heat flux is 0.025 K m s−1, and the wind is driven by the geostrophic balance with a geostrophic mean wind angle of 11∘ relative to the x axis. Within the boundary layer the mean wind angle is close to 40∘, making the u and v components roughly equal to each other on average. The root grid dimensions are in the x, y, and z directions, respectively, with isotropic grid spacing of 12 m. The nest domain is placed in the middle of the root domain, and its grid dimensions are . The nest grid spacing is isotropic 6 m, thus . Periodic boundary conditions are given on the lateral root boundaries. The u and v flux-conservation errors are evaluated for the left nest boundary for which Ny=128 and Nz=32. The relative errors and fluctuate in time within ±3 %, with an average of 0.04 % and root-mean-square value of 1.7 % for , and 0.02 % and 0.6 % for , respectively. It is expected that with larger grid dimensions these errors will become even smaller.

According to our numerical tests presented in the Sect. 4, the proposed zeroth-order interpolation method, i.e., Eqs. (8) and (9) together with TBP, has proven to be fully sufficient and remains the only nesting interpolation method implemented in PALM. We have also considered an alternative interpolation approach for the advected variable based on trilinear interpolation with a specific reversibility correction. Although it is not implemented, a short discussion is provided in Appendix B.

3.5 Anterpolation (child to parent)

Anterpolation is used to feed the child domain solution back to its parent domain. Generally, anterpolation consists of filtering the fine-grid child solution and mapping it to the parent domain grid. We choose to employ the anterpolation scheme proposed by Clark and Farley (1984), which consists of simple averaging over one parent domain grid volume around the parent grid node of the variable in question corresponding to top-hat filtering, viz.

The original parent solution is replaced by the anterpolated solution in the domain of overlap. Here, and are the child and parent grid indices, respectively, and the hat is the anterpolation operator. The summation index limits, i.e., the span of the anterpolation cell i1(I), i2(I), j1(J), j2(J), k1(K), and k2(K), are pre-computed during the initialization, and they depend on the grid configuration and the variable in question; i.e., the staggered velocity components have different index limits to the grid-cell-centered scalars. Note that for the velocity components, the anterpolation volume is reduced to the grid-cell face on which the velocity component is defined. This means that the upper index limit in the direction of the velocity component is reduced to the lower one, for instance i2=i1 for u, because the coordinates of the velocity component node in the respective direction in the parent and the child readily coincide, and thus there is no need for anterpolation in this direction. is the number of child domain values used for anterpolation at a given parent grid location and is pre-computed during the initialization as

Note that due to the staggered grid, four sets of the index limits and are pre-computed and stored: one for each velocity component and one for all scalars. Generally, the anterpolation cells can be spanned in more than one way. We define the anterpolation cells similarly to Clark and Farley (1984) in order to ensure the reversibility discussed in Sect. 3.4.2. For scalar variables (non-staggered variables) the anterpolation cell spans , where Xi are the coordinates of the scalar node in the parent grid. For the velocity components (staggered variables), for example for u, the anterpolation cell spans X1, , and , where Xi are the coordinates of the staggered u node in the parent grid.

Buffer zones where the anterpolation is omitted are applied next to the child domain boundaries (except the bottom boundary). The main purpose of the buffer zones is to avoid an unstable feedback loop between the anterpolation and interpolation. The default width of these buffer zones is two prognostic grid nodes. The user may choose a different value for the buffer width, but the minimum allowed width is one parent grid spacing. This is because the layer of nodes nearest to the child boundary is directly used in the interpolation and using an anterpolated value for interpolation leads to a strongly unstable behavior. The buffer zones are comparable to the relaxation zones applied in the nesting system of the WRF-LES model (Moeng et al., 2007). In the WRF-LES nesting system the anterpolation is under-relaxed within these zones such that the under-relaxation coefficient varies linearly across the relaxation zones, which are five grid spacings wide. As mentioned in Sect. 3.3, the buffer zone below the top boundary also reduces the overestimation of the horizontal velocity variances observed in zero mean wind CBL tests in a purely vertical nesting mode. According to these tests in a purely vertical nesting mode, simulation results are not particularly sensitive to the extent of the vertical downward shift of the upper edge of the anterpolation domain.

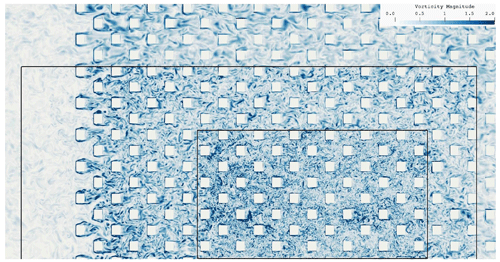

Canopy-restricted anterpolation

The anterpolation algorithm is implemented in the PALM model with a feature that enables its application in a spatially selective manner such that the operation is only performed within the computational domain that is above a user-defined vertical threshold. This practice is discovered to resolve complications that arise when two-way coupled nesting is applied in obstacle-resolved LES simulations where the anterpolated solution within the obstacle canopy introduces discrepancies in the coarser parent solution. Thus, we label this approach canopy-restricted (CR) anterpolation, and the coupling is referred to as two-way CR. The necessity of this anterpolation strategy is motivated and its effectiveness demonstrated in Sect. 4.2.3 where nesting is applied to obstacle-resolved LES test case.

In order to evaluate the nesting strategy, show its benefits, and point out its limits, we performed a series of nested model simulations for different grid-spacing ratios and respective non-nested reference simulations for different atmospheric situations. The idea is not to mainly validate the PALM model against experimental data but instead to systematically compare the nested-domain results to corresponding non-nested fine- and coarse-grid reference results and to show that the nested-domain solutions are closer to the fine-grid reference solutions than the coarse-grid reference solutions. PALM has been already evaluated for various ABL flows against measurement data (Letzel et al., 2008). Nevertheless, we show one comparison against wind tunnel data in Sect. 4.2.2. Furthermore, the idea is not to present grid convergence studies since the grid convergence of the PALM model has been demonstrated previously, e.g., for the convective boundary layer by Hellsten and Zilitinkevich (2013).

We simulated a homogeneously heated flat-terrain convective boundary layer and a purely shear-driven flat-terrain boundary layer. Further, to investigate the performance of the grid nesting in more complex situations where non-flat topography is present, we performed two-staged nested simulations for a neutrally stratified flow over a smooth three-dimensional hill and will compare the results against wind tunnel data. These three test cases were simulated only employing the two-way coupling. Second, we simulated a neutrally-stratified urban boundary layer flow over a regular staggered arrangement of building cubes using one- and two-staged nesting, and will compare the nested simulation results to corresponding non-nested fine- and coarse-grid simulation results. Details concerning the different simulation setups are given in their respective sections. Note that for the sake of simplicity velocity components will hereafter be addressed by lower case variable names only, regardless of whether they refer to the flow in the parent or the child domain.

4.1 Convective boundary layer

The nesting method is first evaluated for a pure convective boundary layer (CBL) with zero mean wind. We set up one child domain that is centered within the parent domain. For the root domain, cyclic lateral boundary conditions were set. A homogeneous and time-constant surface sensible heat flux of 0.1 K m s−1 was prescribed. The simulation was initialized with a potential temperature profile that increases linearly with height at a lapse rate of . The root model domain size is in the x, y, and z directions, respectively, with an isotropic grid spacing of 20 m. The top of the child domain is set to be within the middle part of the CBL, and the domain size is in the x, y, and z directions, respectively, with an isotropic grid spacing of 10 m, resulting in a grid-spacing ratio of 2. In order to examine how turbulence statistics behave for different grid-spacing ratios between parent and child in the CBL, we additionally run nested simulations with grid-spacing ratio of 3 and 4 by increasing the isotropic grid spacing in the parent domain to 30 m and 40 m, respectively. Non-nested coarse- and fine-grid reference simulations were carried out corresponding to the nested simulations with different grid-spacing ratios. The simulated time was 4 h for all convective cases. Data analysis started after 2 h of simulated time when model spin-up effects are not present any more and the simulations reached steady-state conditions. In order to perform a spectral analysis of time series data, the time step was held constant at 1.0 s in all convective simulations during the data analysis period.

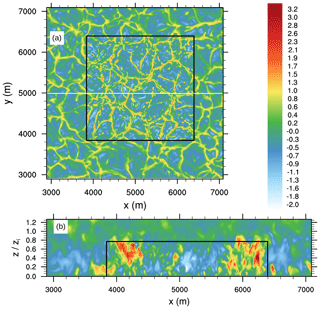

Figure 5Instantaneous horizontal (a) and vertical (b) cross section of w after 4 h of simulated time for the grid-spacing ratio case 2. The horizontal cross section is given at a height of 40 m. The black box indicates the lateral and top boundaries of the child domain. The white line indicates the y position of the vertical cross section of w shown in (b). The vertical axis in (b) is normalized with the horizontal mean boundary layer depth zi. Note that only part of the parent domain is shown for the sake of visibility.

Figure 5a shows an instantaneous horizontal cross section of the w component at a height of 40 m for the parent and child (overlaid) domains for the grid-spacing ratio 2. A hexagonal pattern of convective cells with strong updrafts and weaker downdrafts is visible, as can be typically observed in LES. The transition between parent and child appears smooth and the flow structures are continuous in terms of shape and amplitude, while within the inner part of the child domain more fine-scale structures can be observed with slightly stronger updrafts and downdrafts, as also reported by Moeng et al. (2007). Furthermore, Fig. 5b, showing an instantaneous vertical cross section for the w component, also depicts how the updrafts and downdrafts are consistently maintained across the child boundary without any obvious impact on the turbulent structures.

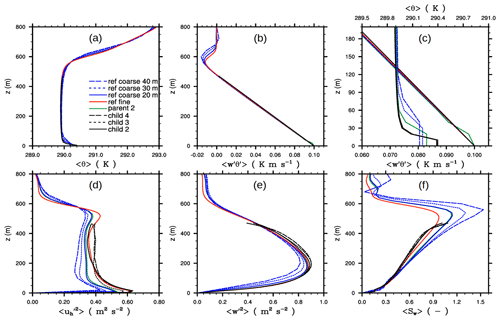

Figure 6The 30 min time-averaged and horizontally averaged profiles of (a) 〈θ〉 and (b) . (c) A close-up view of 〈θ〉 and . (d) Variance of the horizontal velocity component, (e) variance of the vertical velocity component, and (f) skewness of the vertical velocity component after 4 h of simulated time. Note the second upper abscissa in (c). Profiles are shown for the grid-spacing ratio of 2, 3, and 4 for the respective child domains, indicated by the respective numbers. All of these child domains have the same resolution as the fine-grid reference simulation. The corresponding profiles from the coarse-grid reference simulations for the 20 m, 30 m, and 40 m grid spacing are indicated the same. For the sake of clarity, the resulting profiles for the parent domain are only shown for the grid-spacing ratio of 2. Angle brackets indicate a horizontal average over the domain.

Figure 6 shows horizontally- and time-averaged vertical profiles of potential temperature θ, vertical turbulent heat flux , variances of horizontal and vertical velocity components, and the skewness of the vertical velocity component w, being one of the most grid-sensitive quantities (Sullivan and Patton, 2011). The profiles of 〈θ〉 indicate a well-mixed CBL. With increasing grid-spacing ratio the corresponding parent and coarse-grid simulations deviate from the fine-grid reference, particularly near the surface and within the inversion layer, while the child results adhere well with the non-nested fine-reference simulation, indicating that the profiles of 〈θ〉 in the child domains are rather independent of the parent grid for the employed grid spacings.

The heat flux profiles in the child and parent simulations decrease linearly with height within the CBL and are in good agreement with the fine-reference simulation. For the parent simulation we note the near-surface kink in the heat flux (see Fig. 6c for a close-up view). Moeng et al. (2007) observed a similar kink in the heat flux and attributed it to inaccuracies in the statistical evaluation of the heat flux, more precisely, to errors that arise from interpolation from a mass- to a height-coordinate system. However, to evaluate fluxes PALM does not apply any interpolations but uses directly the resolved- and subgrid-scale fluxes as calculated in the advection scheme and the subgrid model, respectively, and thus interpolation errors cannot explain the kink in this case. Instead, we attribute the kink in the parent domain to the anterpolation from the fine child solution. In simulations with different vertical grid spacing, the vertical gradients of 〈θ〉 within the unstable near-surface layer are differently resolved, resulting in slightly different near-surface temperatures, as it can, e.g., be observed between the fine- and coarse-reference simulations in Fig. 6a. This indicates that the parent simulation will yield slightly different 〈θ〉 profiles than the child simulation. After the anterpolation is performed, the parent solution is replaced by the underlying child solution, where the near-surface vertical gradients of 〈θ〉 in the parent domain partly deviate from the ones the model would create without feedback from the child domain, i.e., the near-surface 〈θ〉 profile in the parent is not in equilibrium with the applied surface boundary condition any more. In the following time step the parent model tries to re-adjust the post-inserted 〈θ〉 to the vertical gradients as being present without feedback from the child, altering the heating rates and thus the near surface vertical gradients of the heat flux, which in turn becomes visible as near-surface kink. In fact, we verified this hypothesis in a test case by using identical vertical grid spacing in parent and child. In this case, no kink in the vertical heat flux was visible any more (not shown).

The variances of the horizontal and vertical velocity components, as well the skewness of the vertical component, depend strongly on the grid spacing as the coarse- and fine-grid reference simulations show, where the variances (skewness) become smaller (larger) for increasing grid spacing. The parent simulation agrees well with the coarse-resolution simulation, indicating that the anterpolation changes the parent flow field only marginally. The variances and skewness in the child simulations agree with the fine-reference profiles, except for the upper regions of the child domain where the variances are slightly overestimated. The child profiles are almost independent of grid-spacing ratio and are close to the reference simulation profile. This indicates that the child solutions are almost independent of the chosen grid-spacing ratio in the studied cases.

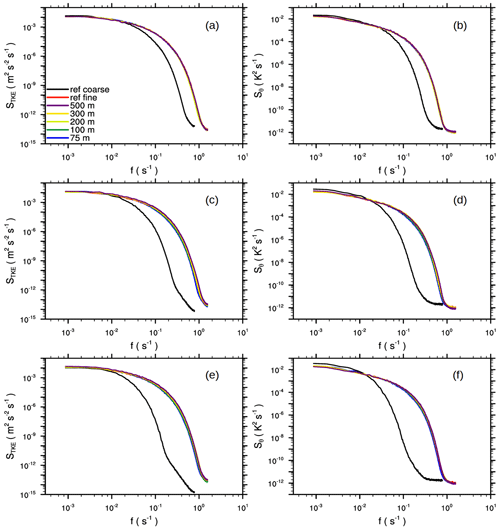

Although there is no mean horizontal advection in the zero-mean wind CBLs, spatially and temporally local horizontal advection always takes place, and therefore flow structures are advected locally from parent to child (and vice versa). Therefore, advected flow structures may need a certain fetch to adjust to the changed grid spacing. In order to get an idea of how much distance from the lateral child boundaries is required to observe similar turbulence properties as in a non-nested fine-resolution reference simulation, we performed a spectral analysis. Therefore, we sampled time series of turbulent kinetic energy (TKE) and θ at different locations inside the child domain and calculated frequency spectra from the sampled time series. Subsequently, we averaged spectra over all sampling locations with the same distance from the lateral child boundaries. Overall, we calculated spectra inside the child domain at locations 75, 100, 200, 300, and 500 m away from the lateral boundary. It should be noted that transforming frequency spectra into wave number spectra using Taylor's hypothesis in order to directly link spectral information and grid spacing is not strictly correct in this case where we have no background wind; nevertheless, we will assume that frequency and wave number space are connected, i.e., that large frequencies belong to small spatial scales and vice versa. Figure 7 shows the resulting frequency spectra, as well as corresponding spectra from fine- and coarse-grid reference simulations. As expected, the coarse-resolution spectra exhibit less spectral energy at larger frequencies compared to the child and fine-resolution spectra. This is due to the larger filter length assumed for the subgrid model removing more energy at larger spatial scales and thus also affecting smaller frequencies. The child spectra agree well with the fine-reference spectra, especially for the grid-spacing ratio case 2, where even locations close to the lateral boundaries show good agreement with the reference. We attribute this to the nature of the CBL, where turbulence is mostly produced locally by buoyancy and horizontal advection is almost negligible, and thus turbulence is almost unaffected by any transport from the boundaries. For the grid-spacing ratios of 3 and 4 the differences are also generally small, but locations close to the lateral boundaries show slightly smaller spectral densities at larger frequencies, indicating that for larger grid-spacing ratios the flow needs a fetch of a few tens of meters in a purely buoyancy-driven boundary layer to adjust to the finer grid spacing.

Figure 7Frequency spectra of the TKE (a, c, e) and θ variance (b, d, f) inside the child domain for the grid-spacing ratio of 2 (a, b), 3 (c, d), and 4 (e, f). The colored curves represent spectra taken at different distances away from the lateral child boundaries. Furthermore, spectra for fine-grid (and corresponding coarse-grid) reference simulations are displayed. TKE and θ were sampled at z=120 m.

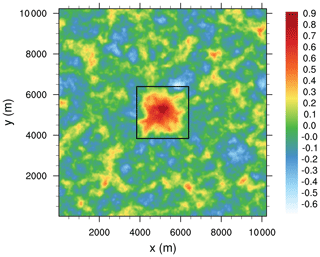

Figure 8Horizontal cross section of 5 h time-averaged vertical velocity at z=400 m in a nested simulation with a grid-spacing ratio of 2. The black box indicates the location of the child domain.

Even though the child simulations yield turbulence profiles, spectra, and instantaneous flow patterns similar to the fine-grid reference simulation for a pure buoyancy-driven flow, the nested simulation nevertheless creates side effects on the flow which appear as a secondary circulation (SC). This SC is not caused by a violation of mass conservation that has been discussed in Sect. 3.4. Figure 8 shows the 5 h time-averaged w component at the middle part of the CBL in a homogeneously heated nested simulation. In order to compute the 5 h time average, we continued the simulation with grid-spacing ratio of 2 for a further 3 h. Within the region of the nested child domain, a mean updraft can be observed, which is in the range of 0.4–0.9 m s−1 and extends throughout the entire depth of the CBL (not shown). At the child domain boundaries and outside the child domain region the flow subsides on average and horizontally directed branches at the upper and lower parts of the CBL occur, giving the overall picture of a SC. The strength of this SC, indicated by the amplitude of the mean updraft, is on the order of the strength of SCs observed in previous simulations over idealized stripe-like surface heterogeneities (Sühring et al., 2014) and even exceeds the strength of SCs observed in simulations over realistic surface forms (Maronga and Raasch, 2013).

SCs develop above surface heterogeneities mainly due to differential surface heating of the air, resulting in mean updrafts and downdrafts over the stronger and less-heated patches, respectively. However, since we prescribe the same surface sensible heat flux in the parent and the child simulations, differential surface heating cannot be the reason for the SC in the nested simulation. Moeng et al. (2007) observed a temperature bias in their child domain that led to mean vertical motion to compensate the temperature bias. They observed temperature biases that go either way, i.e., a too cold or a too warm child domain, which they attributed to a nested child domain of too small horizontal extent. If only a few updrafts or downdrafts are resolved in the child domain, the vertical transport is dominated by these updrafts or downdrafts, and thus a warmer or cooler CBL can be quickly produced in the child domain, respectively. They showed that for larger horizontal child domain size the temperature bias and thus the associated vertical motion vanished. However, they only considered instantaneous differences between parent and child, meaning that the temperature bias is a result of insufficient sampling of the large updrafts and downdrafts rather than an inherent feature of the nesting which can only be observed after time-averaging. In our case the SC becomes visible only after considerable time-averaging. The updraft branch of the secondary circulations is always located within the child domain for larger child domain extensions as well. We hypothesize that this SC is triggered by a slightly different divergence of the vertical heat flux between the region occupied by the child domain and the remaining parent domain due to different grid spacing. It might be impossible to eliminate because a higher resolution better represents the turbulent mixing, and thus differences between the parent and the child solutions are to be expected in general.

Even though this inherent artificially induced SC only appears when the flow is averaged over a longer time under quasi-stationary conditions (no diurnal cycle, no change in the mean wind, etc.), nested simulation results should be interpreted carefully in terms of SCs. In particular, since the strength of the artificial SC is on the order of “real-world” circulations over heterogeneous terrain, these two may become superimposed, altering the pattern of the vertical transport of sensible and latent heat. Although we did not succeed in proving our hypothesis, we encourage other researchers to look for the existence of such SCs in any nested models by analyzing the time-averaged results.

4.2 Neutrally stratified boundary layer tests

Initialization and inflow conditions

As further test cases, we set up a series of boundary layer flow simulations with increasing order of complexity. First, to evaluate the performance of grid nesting in shear-driven boundary layer flows, we simulated a flow over a homogeneous flat surface in order to compare first- and second-order moments from a nested simulation against reference simulations. In a second step, we simulated a flow over a smooth three-dimensional hill for comparison of nested simulation results against wind tunnel data. Finally, in order to illustrate the advantages of the grid nesting in more complex setups, we simulated a flow over a staggered arrangement of cubes mounted on a flat surface.

The parent domain size for all neutrally stratified simulations was in the x, y, and z directions, respectively. In all neutral simulations we prescribed a homogeneous roughness length of z0=0.01 m. At the top boundary we applied a free-slip condition for the horizontal wind components and zero vertical motion. At the spanwise lateral boundaries (north and south boundary), we applied cyclic conditions. At the left lateral boundary (hereafter referred to as the inflow boundary), we prescribed mean inflow profiles for the u and v component obtained from a cyclic precursor run. Two different precursor simulations were employed for the subsequent test cases. The one used for the flat surface and for the smooth hill featured a geostrophic wind of and at a latitude of 55∘, adjusted such that the surface layer mean flow became parallel with the x axis. This precursor simulation ran for 36 h to reach a stationary state. The second precursor simulation, used for the cuboid case, was driven by a fixed pressure gradient angled to result in a mean flow of at z=Lz with a 3∘ angle from the x axis.

In order to obtain a turbulent inflow, we applied a turbulence recycling method according to Kataoka and Mizuno (2002), where the inflow mean vertical profiles of u and v are superimposed by turbulent fluctuations sampled at a recycling plane, which is placed at xrc=1.5 km downstream from the inflow boundary. The recycling plane is placed sufficiently far apart from the inflow boundary to allow for statistically independent turbulence but also sufficiently far apart from the location of the child domain to avoid any feedback between the grid nesting and the inflow conditions. For further details on the implementation of the turbulence recycling method, see Maronga et al. (2015).

Further, in order to avoid persistent streaks in the u component, which may develop in neutrally-stratified flows and will be recurrently recycled in case of vanishing v component, we shifted the recycled turbulent signals along the y direction at the inflow boundary, following Munters et al. (2016). At the eastern outflow boundary we set a radiation boundary condition (Miller and Thorpe, 1981). The root domain was initialized with three-dimensional data recursively copied from the precursor run, while the child domain was initialized with data obtained from the parent. We used an isotropic grid spacing of 4 and 2 m within the root and the nested child domain, respectively. The cuboid and the smooth hill case also encompass a third domain with 1 m resolution (two-stage nesting).

In order to evaluate the effect of the nesting, we performed additional non-nested reference simulations with 4 and 2 m grid spacing. However, due to its high computational demands, a 1 m non-nested reference simulation was not performed. The simulated time of the neutrally stratified simulations ranged from 4 to 7 h. Data analysis started after 2 h of simulated time. When spectral analysis was performed (homogeneous flat case), the time step was held constant at 1.0 s for that simulation.

4.2.1 Neutrally stratified boundary layer flow over flat terrain

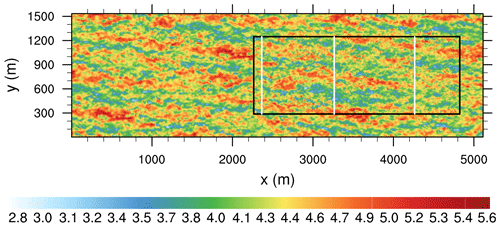

Figure 9 shows an instantaneous horizontal cross section of the u component for the nested simulation. As is typical for a neutrally stratified boundary layer, elongated streak-like structures can be observed (Hutchins and Marusic, 2007; Hutchins et al., 2012). These elongated structures preserve their size and amplitude when entering the child domain from the left and exiting to the right.

Figure 9Instantaneous horizontal cross section of the u component in m s−1 at z=40 m. The black box indicates the location of the child domain. The solid white lines indicate the x locations where the profiles shown in Fig. 11 are averaged over the y direction.

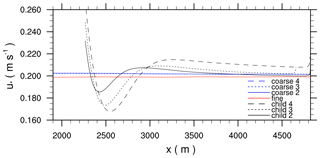

Figure 10The 2 h time-averaged and y-averaged horizontal profiles of the friction velocity for the grid-spacing ratio of 2, 3, and 4, as well as the corresponding coarse and fine-grid reference simulations.

Figure 10 shows horizontal profiles of the time-averaged and y-averaged friction velocity u* within the child domain and the corresponding coarse- and fine-grid reference cases. In the coarse and fine-reference cases u* is constant along the x axis, indicating that the flow is in equilibrium with the surface friction. In the coarse-grid simulations u* shows slightly higher values compared to the fine-grid reference simulation, even though the prescribed surface roughness is identical in all simulations. This suggests that the flow in the coarse-grid simulations sees a slightly rougher surface, which we attribute to the less accurate representation of the vertical near-surface gradients of the wind profile compared to the fine-grid simulation. When the flow enters the child domain, the coarse-grid inflow wind profile is not in equilibrium with the surface friction any more and the near-surface flow decelerates, indicated by the higher values of u* near the child inflow boundaries. With increasing distance to the inflow boundary, u* rapidly decreases and reaches a minimum with lower values compared to the reference cases, until it increases again, reaching a secondary maximum, and then asymptotically approaches a constant value, which is similar to the value of the fine-grid reference case in the grid-spacing ratio cases 2 and 3. However, at least for the given model domain size, u* does not approach the fine-grid solution in grid-spacing ratio case 4 but still exhibits higher values. This kind of spatial oscillation of u*, which indicates an alternating deceleration and acceleration of the near-surface flow along the x direction, shows that the surface-momentum exchange in the child domain needs a sufficiently large development length. For grid-spacing ratio case 2 the required fetch length is at least 1 km to adjust to the fine-grid resolution. With increasing grid-spacing ratio the amplitude of the spatial oscillation increases and the fetch length becomes longer and even exceeds the model domain size (as in grid-spacing ratio case 4).

Figure 10 shows that u* gradually adjusts to the fine-reference value, at least for grid-spacing ratio cases 2 and 3. This is in contrast to Moeng et al. (2007), who revealed a friction velocity bias between parent and child in their neutrally stratified simulation when employing a grid-size-dependent SGS model, which is also the case in this study.

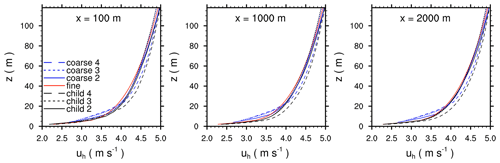

Figure 11The 2 h time-averaged and y-averaged profiles of the horizontal wind speed for the grid-spacing ratio of 2, 3, and 4, taken at different distances downstream of the inflow boundary, indicated by the solid white lines in Fig. 9. In addition, corresponding time-averaged and y-averaged profiles from the fine- and coarse-reference simulations taken at the same locations are shown.

Figure 11 shows time-averaged and y-averaged profiles of the horizontal wind speed within the child domain for different grid ratios, taken at different distances downstream of the inflow boundary, indicated by the solid white lines in Fig. 9. At a distance of 100 m, the profiles in the child domains agree well with the fine-reference profile within the lowest 10 m. Even though the surface momentum exchange is still not in equilibrium at that position (see Fig. 10), one could already conclude from the near-surface wind profiles that the flow has already been adapted to the finer grid resolution. However, further above this point, the wind profiles of the child model still deviate from the fine-reference solution and are closer to the coarse-reference profiles. This is especially obvious for the grid-spacing ratio case 4, where the wind profile shows a discontinuity at a height of z=20 m. With increasing distance from the child inflow boundary, the child profiles gradually adjust to the fine-reference simulation, while at a distance of 2000 m the child profiles agree with the fine-reference solution, except for the grid-spacing ratio case 4, which still deviates from the fine-reference solution.

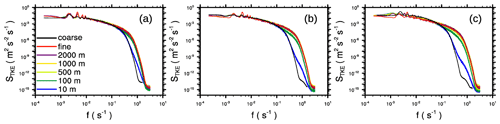

Figure 12Frequency spectra of the resolved-scale TKE taken at different sampling locations downstream of the inflow boundary for the neutrally stratified boundary layer at z=40 m for grid-spacing ratio (a) 2, (b) 3, and (c) 4. TKE spectra for the fine-grid (and the corresponding coarse-grid) reference simulations are also shown.

In order to further analyze the flow adjustment within the child domain, we computed resolved-scale TKE spectra at different distances from the child inflow boundary. The spectra were calculated from time series of the three velocity components that were sampled at different locations within the domain. The final spectra were then obtain by averaging individual spectra over all locations with identical distance to the inflow boundary, assuming that the flow is parallel to the x axis. Figure 12 shows TKE spectra obtained from the child domain and for the corresponding reference simulations. At low frequencies (large wave numbers), the spectra look quite similar and no obvious differences with the fine- and coarse-reference spectra can be observed, indicating the grid nesting does not induce any larger-scale oscillations that propagate through the model domain. At higher frequencies, however, especially at the near-inflow boundary, the child spectra differ from the fine-reference spectra and more resemble the corresponding coarse-reference spectra. With increasing distance from the inflow boundary, the spectral properties gradually adjust to those of the fine-reference case, while at a fetch of 500–1000 m almost no differences can be observed at that height level.

In contrast to a buoyancy-driven boundary layer, the flow in a purely shear-driven boundary layer requires a sufficiently large development distance to adjust to the finer grid resolution. However, a purely shear-driven flow over a flat homogeneous surface can certainly be considered as an extreme case in terms of flow adjustment, as the vertical turbulent exchange, which is primarily driven by surface-roughness-induced shear, is rather low compared to less idealized flows over non-flat terrain or with obstacles included. Hence, we expect that the required fetch length may decrease for rougher surfaces and more complex surface geometries.

4.2.2 Neutrally stratified boundary layer flow over a smooth three-dimensional hill

The hill case is studied to compare flow statistics against the wind tunnel observations conducted by Ishihara et al. (1999), who sampled data at different upstream and downstream locations along the center hill axis. This flow is simulated using a two-stage nesting configuration in which a second child domain with 1 m resolution is placed within the first child domain with 2 m resolution.

The terrain height of the smooth three-dimensional hill is given by

with the hill height H=40 m and a hill radius l=100 m, while x and y indicate the location on the discrete grid, and x0=2024 m and y0=384 m indicate the location of the hill top with respect to the parent domain dimensions. Note that we upscaled the hill dimension by a factor of 1000 with respect to the wind tunnel model. Again, the parent domain size is in the x, y, and z directions, respectively. The child domain sizes are and for the first and second child domains, respectively. The upstream boundaries of the first and second child domains are placed about 9.7H and 6.3H upstream of the hill top, respectively. The smooth hill geometry is approximated with the grid-following stair step geometry in PALM due to its orthogonal grid arrangement and its topography description system; see (Maronga et al., 2015).

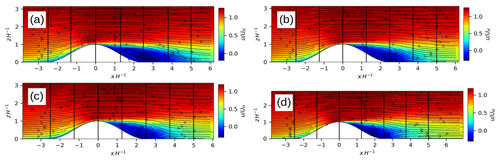

Figure 13The 2 h time-averaged vertical cross section of the u component field (colored contours) and the u–w mean flow field (vector arrows) displayed along the centerline of the three-dimensional hill for (a) the 4 m reference simulation, (b) the 2 m reference simulation, (c) the 2 m nested child simulation, and (d) the 1 m nested child simulation. Vector arrows and the u component are normalized, with the reference wind speed taken at z=H upwind of the hill. The ordinate and the abscissa are scaled with the hill height H. Note that the abscissa is centered at the hill top. The vertical black lines indicate the positions of the profiles displayed in Figs. 14 and 15. Note that the ordinate in (d) is constrained by the vertical dimension of the 1 m child domain. Moreover, note that the x dimension in (c) and (d) do not show the entire child domain extents but instead cut out minor parts at the left and right.

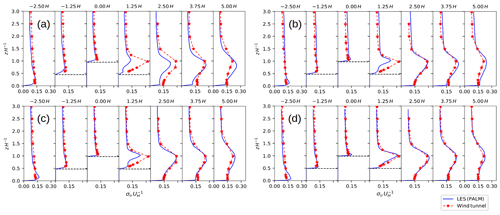

Figure 14The 2 h time-averaged vertical profiles of the standard deviation of the u component for the LES and the observed wind tunnel flow for (a) the 4 m reference simulation, (b) the 2 m reference simulation, (c) the 2 m nested child simulation, and (d) the 1 m nested child simulation. The ordinate is scaled with the hill height H. The standard deviation is normalized with the reference wind speed taken at z=H upwind of the hill. The horizontal dashed black lines indicate the discrete height of the surface at the sampling location. Note that the vertical dimension of the 1 m nested child domain does not cover the entire vertical range of the measurements, and thus some data points from the LES are missing on the normalized height coordinate in (d).

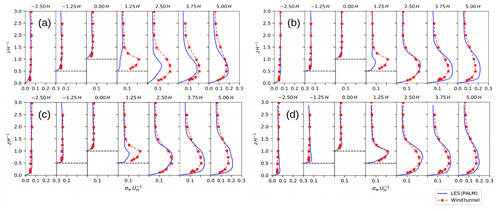

Figure 15The 2 h time-averaged vertical profiles of the standard deviation of the w component for the LES and the observed wind tunnel flow for (a) the 4 m reference simulation, (b) the 2 m reference simulation, (c) the 2 m nested child simulation, and (d) the 1 m nested child simulation. The ordinate is scaled with the hill height H. The standard deviation is normalized with the reference wind speed taken at z=H upwind of the hill. The horizontal dashed black lines indicate the discrete height of the surface at the sampling location. Note that the vertical dimension of the 1 m nested child domain does not cover the entire vertical range of the measurements, and thus some data points from the LES are missing on the normalized height coordinate in (d).

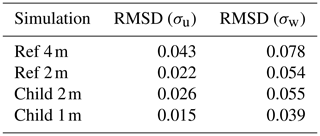

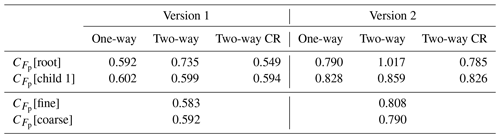

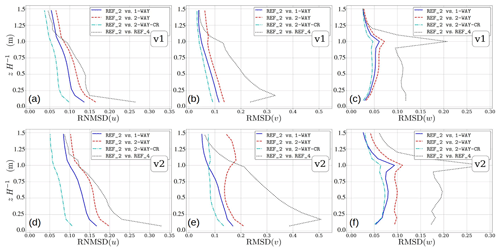

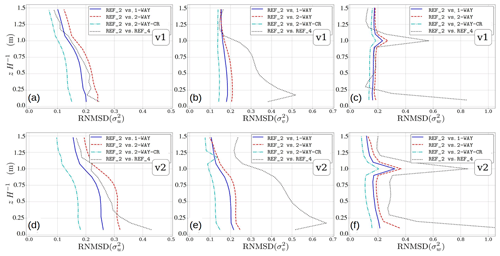

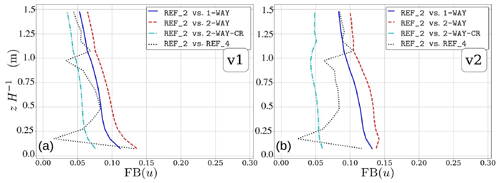

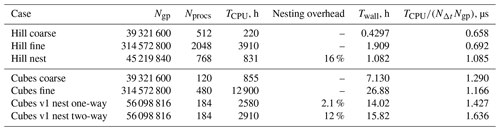

Figure 13 shows the mean flow field along the centerline of the three-dimensional hill for the nested child simulations and the fine- and coarse-grid reference simulations. Upwind of the hill, the mean flow in the nested simulations agrees with the one in the fine- and coarse-reference simulations. In the coarse-grid reference simulation the recirculation extends further downstream up to about 4.1H on the lee side of the hill compared to the 2 m nested and fine-reference simulation, which both show a recirculation that extends up to about 3.75H downstream of the hill top. When further increasing the grid resolution to 1 m, the recirculation zone further shortens to about 3H. Figures 14 and 15 show the corresponding standard deviations of the u and w components sampled at different locations along the centerline of the hill. Upwind of the hill the standard deviations of the u and w components agree with the observations and show no significant difference between the simulations. However, leeward of the hill at 1.25H, the LES underestimates the standard deviations of the u and w components in the 2 and 4 m simulations, which is most pronounced in the coarse-grid reference simulation, while the profiles in the 1 m child domain of the nested simulation agree fairly well with the measurements. Further downstream, the coarse-grid reference run still slightly underestimates the observed standard deviations, while the 2 m child domain result and fine-grid reference result slightly overestimate the standard deviations, which is in agreement with results from the EPFL-LES model presented in Diebold et al. (2013), who employed a similar fine-grid resolution for this hill flow. With 1 m grid spacing the standard deviations further downstream are still slightly overestimated, though their vertical shape and amplitude is captured quite well. In order to provide a more quantitative measure, Table 1 provides the root-mean-square deviation (RMSD) of the profiles shown in Figs. 14 and 15. RMSD is defined as follows: