the Creative Commons Attribution 4.0 License.

the Creative Commons Attribution 4.0 License.

Assessing the benefits of approximately exact step sizes for Picard and Newton solver in simulating ice flow (FEniCS-full-Stokes v.1.3.2)

Angelika Humbert

Thomas Slawig

Solving the momentum balance is the computationally expensive part of simulating the evolution of ice sheets. The momentum balance is described by the nonlinear full-Stokes equations, which are solved iteratively. We use the Picard iteration and Newton's method combined with Armijo step sizes and approximately exact step sizes, respectively, to solve these equations. The Picard iteration uses either no step size control or the approximately exact step sizes. We compare the variants of Newton's method and the Picard iteration in benchmark experiments, called ISMIP-HOM experiments A, B, E1, and E2. The ISMIP-HOM experiments consist of a more realistic domain and are designed to test the quality of ice models. For an even more realistic test case, we simulate the experiments E1 and E2 with a time-dependent surface. We obtain that approximately exact step sizes greatly reduce the necessary number of iterations for the Picard iteration and Newton's method with nearly no increase in the computation time for each iteration.

- Article

(2042 KB) - Full-text XML

- BibTeX

- EndNote

Simulating the evolution of the ice sheets in Greenland and Antarctica in adequate physics and resolution is a challenging task. The dynamics of ice sheets is described as a fluid mechanical problem with the momentum balance reduced to a Stokes problem as acceleration and Coriolis forces are negligible. In the past, computational constraints led to the reduction of the problem by approximating the momentum balance. If a sufficiently large spatial resolution cannot be chosen, the benefit from solving the Stokes problem is lost. Consequently, in practical terms, Stokes models lead to large problems, and thus efficient solvers are inevitable. This is what this study focuses on.

The full-Stokes equations are nonlinear partial differential equations described as shear thinning, which means that the viscosity depends nonlinearly on the symmetric gradient. More precisely, we consider the stationary variant of these equations in the variational formulation. In ice models, the stationary equations are solved to calculate the velocity field. The velocity field is then used to calculate the new shape of the glacier. The variational formulation is needed to calculate a solution with finite elements. A common method of calculating the solution of these equations is the Picard iteration (see Colinge and Rappaz, 1999). The Picard iteration fixes the nonlinear viscosity, calculates a new velocity, and updates the viscosity.

The Picard iteration is, for example, used in the ice models ISSM (Larour et al., 2012) and FELIX-S (Leng et al., 2012). Elmer/Ice (Gagliardini et al., 2013) uses the Picard iteration for the first few iterations and the Newton method for the last iterations. This approach was also extended to a nonlinear friction law. However, Elmer/Ice does not use a step size control. Thereby, Newton's method does not converge from every initial point (Gagliardini et al., 2013). The ice models Elmer/Ice and FELIX-S are compared in Zhang et al. (2017). For some glacier simulations, COMSOL multiphysics is used (see Rückamp et al., 2022). Newton's method in COMSOL multiphysics switches between choosing the negative gradient and Newton directions. Additionally, a trust-region method is used to determine the step sizes. The trust-region radius is the maximum step size that one would trust the step size to be so it is a good choice (for more mathematical details, see Sect. 6.4.2 in Dennis and Schnabel (1996)). The trust-region method in COMSOL multiphysics uses the residual norm (see Sect. “The Fully Coupled Attribute and the Double Dogleg Method” in COM, 2018). Instead, we will discuss another approach, which is algorithmically simpler and uses the problem-specific information of a convex function. Additionally, the convex function allows us to use different step size methods.

We employ Newton's method by formulating the variational formulation as a root problem. If we start near the solution, Newton's method is superlinear convergent (Hinze et al., 2009). Thus, the error between the approximation and the real solution reduces faster than the linear convergent Picard iteration (see Fraters et al., 2019). However, starting with an unsuitable initial velocity field for Newton's method could lead to a diverging velocity. A step size control guarantees convergence from every initial guess. This step size control is constructed by defining a function that we want to minimize. One variant is presented in Fraters et al. (2019). We consider another approach that only needs to calculate integrals. It allows us to use two different step size controls. Newton's method with one of these step sizes converges from every initial guess to the solution (Schmidt, 2023). Additionally, we employ approximately exact step sizes for the Picard iteration to provide a possibility of reducing the necessary number of iterations without implementing Newton's method. The exact step sizes are the solution of a one-dimensional minimization problem. As we can only approximate these step sizes arbitrarily precisely, we called them approximately exact and call them exact step sizes in this paper for brevity.

The computation of the step size is computationally cheap compared to solving the linear systems of equations in each iteration. The work of Habbal et al. (2017) considers different solvers to reduce the simulation time for solving the system of linear equations. Nonetheless, for all solvers, the system of linear equations is still the main computational effort. Our step size control reduces the computation time by reducing the necessary number of iterations.

As a test case, we use the ISMIP-HOM experiments A, B, E1, and E2. These experiments are designed to test the quality of glaciological models. They reflect a large domain of the glaciers, a large aspect ratio, and a sinusoidal bedrock and the Glacier d'Arolla, respectively. We simulate the Glacier d'Arolla experiments E1 and E2 also as time-dependent.

The paper has the following structure: in Sect. 2, we introduce the equations in the variational formulation and the Picard iteration. In the subsequent section, we formulate Newton's method. In Sect. 4, we introduce the new idea, the step size control that decreases the number of iterations and verifies convergence from every initial guess. In Sect. 5, we discuss the stationary ISMIP-HOM experiments A and B and compare them with the results in Pattyn et al. (2008). In Sect. 6, we solve instationary problems with and without sliding derived from the ISMIP-HOM experiments E1 and E2. Finally, we give a summary in Sect. 7 and an outlook in Sect. 8.

Let Ω⊆ℝN, with . For describing the movement of ice, we need the second-order tensor σ, the density ρ, and the gravitational acceleration g. These quantities describe the full-Stokes equations, the most complex equations for simulating ice, by

on the domain Ω. We describe the stress tensor, σ, with the pressure, p; the identity tensor (matrix), I; the symmetric gradient, D; the velocity, v; and the viscosity, μ, by . We define the nonlinear viscosity, μ, as

with and , δ>0. The constant δ>0 guarantees μ<∞. We choose n=3 for the experiments as in Pattyn et al. (2008). The boundary consists of the bedrock, Γb; the surface, Γs; and the lateral boundary, Γℓ. Our boundary conditions are

with the outer normal vector n. Here, σ⋅n is the inner tensor-product (matrix–vector multiplication).

In Sect. 6, we also consider the sliding boundary condition . Due to zero values of the friction coefficient in this experiment, only the spaces for the variational formulation change by including . Thus, for simplicity, we neglect this additional boundary condition in our upcoming derivation.

We derive the variational formulation in infinite dimensions because we can implement it directly in FEniCS (see Logg et al., 2012). We determine the variational formulation by multiplying with test functions and using partial integration, and, second, we explain the function spaces used. We define an operator by

where v∈H and p∈L are the solution and ϕ∈H and ψ∈L are test functions. The angular brackets on the left-hand side of the equation are used because, formally, we have a function that maps to the dual space. The dual space is denoted by the star after the space, e.g., H* and L*. The solution of the full-Stokes equations is , with

We added the diffusive term μ0>0 to get a well-posed directional derivative and a well-posed Picard iteration (for details, see Appendix A1). Additionally, we are now in a Hilbert space formulation and set , where H1(Ω)N is the space of vector-valued square integrable functions with a square integrable derivative. We set for the space of square integrable functions with a zero integral. There exists a unique solution to that problem for μ0>0 (see Schmidt, 2023). Nonetheless, we perform all experiments with μ0=0.

We formulate the problem in infinite-dimensional spaces H and L. In these infinite-dimensional spaces, mathematical convergence properties are independent of the mesh resolution and the finite elements used as long as the finite elements are a subspace of the infinite-dimensional spaces. Ice models often use finite elements. Moreover, the formulation in discretized spaces is identical; only the functions are from finite-dimensional spaces.

A common method to solve the variational formulation of the full-Stokes equations in glaciological models is the Picard iteration (see Algorithm 1). It is used in ISSM (see Larour et al., 2012), FELIX-S (see Leng et al., 2012). Elmer/Ice can use the Picard iteration and Newton's method, which we introduce in the next section. Elmer/Ice can use the Picard iteration to get near the solution and then can use Newton's method (see Gagliardini et al., 2013).

Algorithm 1Picard iteration.

The Picard iteration converges slowly (see Fraters et al., 2019). Thus, it can be beneficial to consider faster-converging algorithms. Newton's method is often superlinear convergent, also in infinite dimensions (see Hinze et al., 2009). For Newton's method, the calculation of the derivative is necessary. Due to the variational formulation, we can only express the derivative of G in terms of the direction and the test functions. The derivative of G in (v,p) in direction (w,q) is

A mathematical proof that G is differentiable in all directions (w,q) is presented in Schmidt (2023). A more detailed deduction of the derivative is in Sect. A2.

Newton's method can solve the full-Stokes equations by Algorithm 2. Because Newton's method is only locally convergent, we use a step size control in Algorithm 2. We explain the step size control in the next section.

Algorithm 2Globalized Newton's method.

In this section, we derive a global convergent Newton method using a step size control; we have the current velocity field v and the direction w. Instead of setting our new field, , we choose α>0, with . We want an algorithm for choosing this α. Classical approaches for determining the step size α check if the norm reduces enough compared to . What enough reduction means is discussed in, for example, Hinze et al. (2009). However, we use an alternative approach. Solving is equivalent to minimizing as in Eq. 7 (see Schmidt, 2023).

We need the last summand because the time-dependent experiments lead to initial guesses for the velocity field that are not divergence-free; we start with a divergence-free initial guess, calculate the velocity field, and use the velocity field to calculate the new domain. (We explain the calculation of the new domain in Sect. 6.2.) The grid points with the velocity information are moved correspondingly to fit the new domain. On this new domain, our old velocity field is our initial guess and slightly not divergence-free. (Despite divv being near 0, the step size control did not work without the last summand in Eq. 7 for time-dependent problems.)

The convex functions were also used in Hirn (2013) for μ0=0 with Dirichlet boundary conditions, and Chen et al. (2013) for δ=0 and μ0=0, with more realistic boundary conditions. The equivalence between minimizing this convex function and solving the full-Stokes equations is clear because the minimizer of the function and the root of the derivative are at the same point for strict convex functions.

A classical approach to determine a suitable step size α is the use of an Armijo step size as in Hinze et al. (2009) (see Algorithm 3).

Algorithm 3Armijo step size.

We describe the idea of Armijo step sizes: for a function, the negative gradient is the direction of the steepest descent. To find the minimum, we need enough reduction compared to the steepest descent multiplied with the step size and a factor between 0 and 1 as we expect less reduction than the steepest descent. This condition is stated in line 4 of Algorithm 3. Line 3 guarantees that the step size is not too small. For Newton's method, one chooses α:=1 (see Nocedal and Wright, 2006, Algorithm 3.1). Newton's method converges fast for (see Nocedal and Wright, 2006, Theorem 3.6). Typically, is chosen (see Nocedal and Wright, 2006). However, we choose as 10−4 was to strict. We only need a direction that reduces the function value to use Armijo step sizes.

However, we can exploit the convexity of J for constructing other step sizes; we define the auxiliary function Jk:

The function Jk is strictly convex for wk≠0 because J is strictly convex with respect to the first argument and convex with respect to the second argument. Thus, the following equation:

is negative for α=0 and positive for a big enough α because of the strict convexity of Jk for wk≠0. As is continuous, a simple bisection (see Algorithm 4) calculates the minimum of Jk. In practice, we approximate the exact step size arbitrarily precisely. Thus, we denote the approximate exact step size as the exact step size. Exact step sizes have the advantage of allowing us to really calculate the minimum in a direction instead of just noting some reduction. Nonetheless, the exact step size is only rarely used in practice. One needs more conditions on the problem, which is the strict convexity of Jk for wk≠0 here. We could not find the minimum of

for the direction (wk,qk) because we have no information on where the minimum is.

For simplicity, we choose 25 iterations to approximate the exact step size. As we expect step sizes of length 1 to often be a good choice, we choose a:=0 for the minimum step length and b:=4 for the maximum step length in our implementation. We then obtain an accuracy of for the step size α. The calculation of α is computationally not expensive.

Algorithm 4Exact step size.

We modify the Picard iteration (see Algorithm 1) by a step size control; we set

and choose αk as α in Algorithm 4.

We analyze the four algorithms we introduced: the Picard iteration without a step size control as a reference, exact step sizes for the Picard iteration and Newton's method, and Armijo step sizes for Newton's method. We implemented all these algorithms in FEniCS version 2019.1.0 (see Logg et al., 2012). FEniCS is a library that allows for the implementation of variational formulations easily. Hence, it allows for fast testing of algorithms without implementing them in complex codes. We determine the performance of these algorithms by comparing each iteration step with a reference solution for the experiments ISMIP-HOM A and B (see Pattyn et al., 2008). The reference solution is calculated with 80 prescribed Picard iterations without a step size control. We choose 80 iterations as this method converges slowly and the reference solution should be more accurate than the solutions calculated by the compared methods. In Pattyn et al. (2008), the authors described the ISMIP-HOM experiments to analyze the quality of ice models. Moreover, they compared simulation results.

We set the physical variables according to Pattyn et al. (2008): (Pa)−3 a−1, ρ:=910 kg m−3, and m s−2.

We set the constant a−1 and μ0:=0 kg a m−1 s−2. We derive the unit for μ0 by . In the experimental design, the nonlinear term is instead of (see Pattyn et al., 2008). We choose the constant δ such that δ is smaller than the typical magnitude of Dv, and 3 a−1, multiplied by the machine precision, eps:

for typical values of . We defined the infinite-dimensional algorithms for μ0>0 as they are not well posed for μ0=0. However, we perform all simulations with μ0=0 because the additional diffusion term is not used in ice models and the diffusion term is not needed in the finite-dimensional finite-element spaces (Hirn, 2013). In all experiments, we calculate the initial velocity by replacing with 106 and solving this linear problem. As starting with a−1 leads to a and starting with a constant velocity field leads to a, we chose 106 between both values.

5.1 The original experiment ISMIP-HOM B

In this subsection, we introduce details from Pattyn et al. (2008) that are specific to the experiment ISMIP-HOM B. This experiment has a domain with a sinusoidal, slightly tilted (0.5°) bottom. The boundaries at the left and right are vertical, and the boundary at the top has a linear slope of 0.5°. Furthermore, periodic boundary conditions are used at Γℓ. The experiment prescribes Dirichlet zero boundary conditions, v=0 on Γb and on Γs.

The length L:=5 km is the horizontal extent. The angle β:=0.5° describes a slight decline at the surface and the bottom by

with .

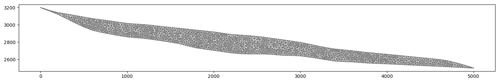

5.2 Modifications to the experiment ISMIP-HOM B

Formulating the convex function J (see Eq. (7)) that corresponds to periodic boundary conditions is complicated. Thus, we use the alternative introduced in the supplement of Pattyn et al. (2008) by copying the glacier to the right and the left. We have three copies to the right and the left (see Fig. 1). At the lateral boundary, Γℓ, we impose Dirichlet zero boundary conditions. Also, the resolution at the outer copies is lower than for the original domain. This reduces the number of elements for the two-dimensional experiment by 30 % and in three dimensions by 51 %. Nevertheless, the three-dimensional experiment was performed on a high-performance computer. In two dimensions, the local refinement has no relevant impact on the solution. Also, one can simulate the two-dimensional experiment on a laptop.

Figure 1The domain, with a grid with red dots and three copies to the right with green, purple, and blue dots.

Instead of the slope, we rotate the gravity. Thus, we should rotate the lateral boundary, Γℓ of the domain. We neglect this and stick to vertical boundaries on the left and the right.

5.3 Results for experiment ISMIP-HOM B

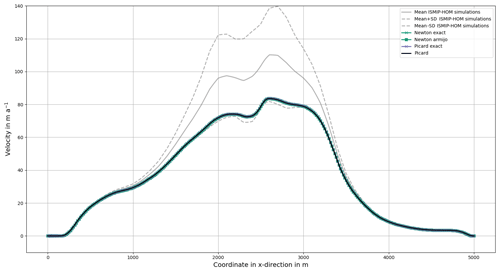

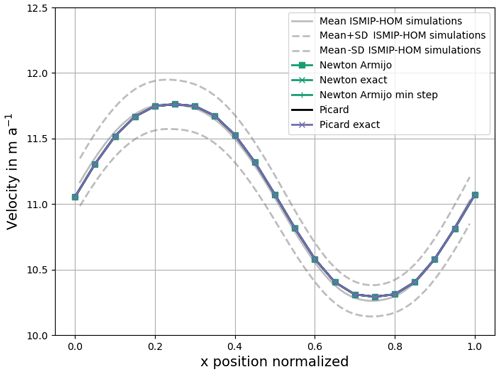

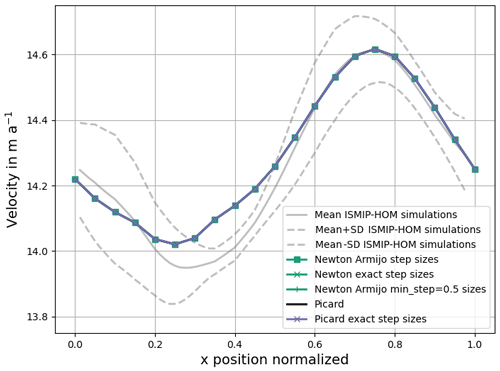

Our velocity fields at the surface (see Fig. 2) are close to the mean of the full-Stokes simulations in Pattyn et al. (2008).

Figure 2Simulated surface velocity for different solvers for ISMIP-HOM B. All our calculated velocity fields overlap with each other. The mean and the standard deviation (SD) from Pattyn et al. (2008) with nine models are plotted in grey. The mean and standard deviations have no values at x=0 and x=1 due to missing values.

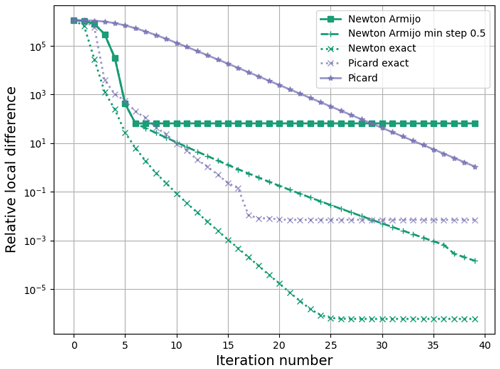

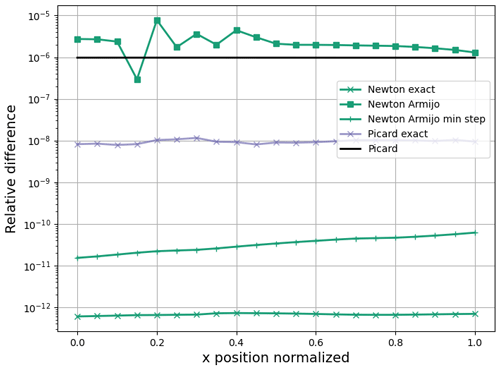

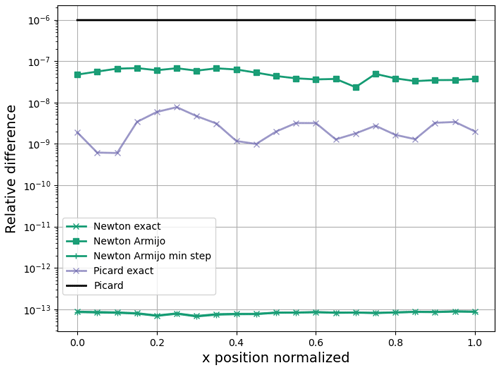

Figure 3Relative difference in for each grid point at the surface. The reference solution is the solution from 80 Picard iterations without a step size control.

Also, all our methods produce very similar velocity fields at the surface, as displayed in Fig. 3. Next, we compare how many iterations are necessary to reduce the relative difference and relative local difference compared to the reference solution. We calculate the relative difference and the relative local difference for a velocity v and the reference solution vref by

with c=1 mm a−1. Then, c is much smaller than 10 m a−1, and below this velocity speed, slight differences are seen as not so important (Joughin et al., 2010, Sect. 2.3). We use two error measurements because one method could be better for one purpose and the other for another. The local relative difference reflects that regions with small velocities should also be represented with a small relative error. Both error measurements consider the velocity field for the whole domain of the glacier. In contrast, the original experiment (Pattyn et al., 2008) only considers the velocity field at the surface.

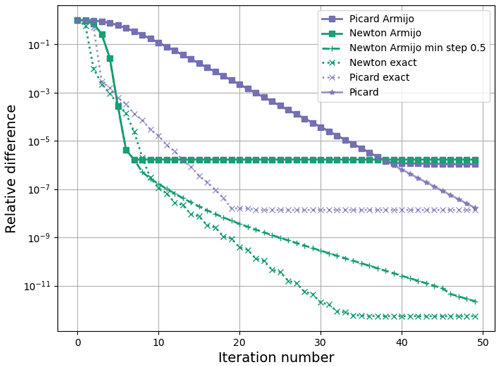

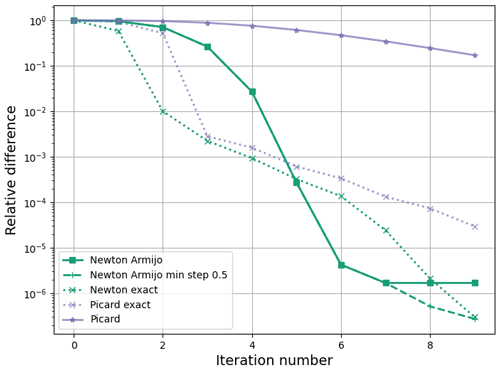

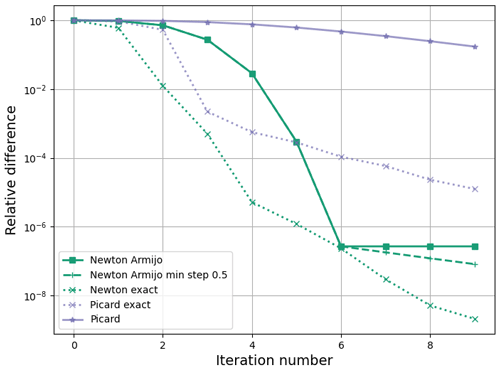

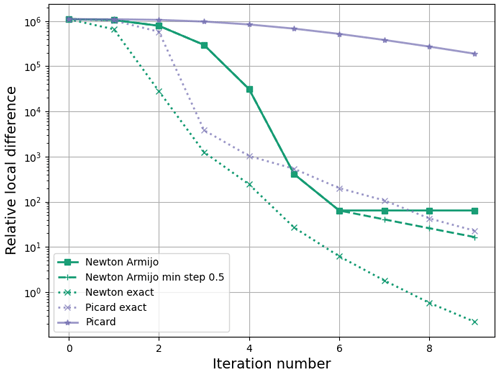

Figure 4 displays the relative difference over the iteration number. First, we discuss the Picard variants. Without a step size control, the convergence rate is slow. The method needs 39 iterations to obtain a reduction to 10−6. Exact step sizes lead to a faster reduction in the relative difference.

For Newton's method, the exact step sizes reduce the relative difference really fast. We see this even better if we consider just the first nine iterations (Fig. 5). The Armijo step sizes obtain the reduction in the Picard iteration without a step size control after only seven iterations. This reduces the necessary number of iterations by 82 %.

Figure 5Relative difference compared to the reference solution for ISMIP-HOM B for the first 9 iterations.

However, after a few iterations, the relative difference does not reduce anymore. This could be seen as either a mistake in the step size control or Newton's method. However, the Picard iteration with Armijo step sizes has the same problem. It is identical to the Picard iteration without a step size control up to iteration 39 and then chooses smaller step sizes to stall at a similar difference as Newton's method. Imposing a minimum step size of 0.5 helps to circumvent this problem. Newton's method then reduces the relative difference up to iteration 39.

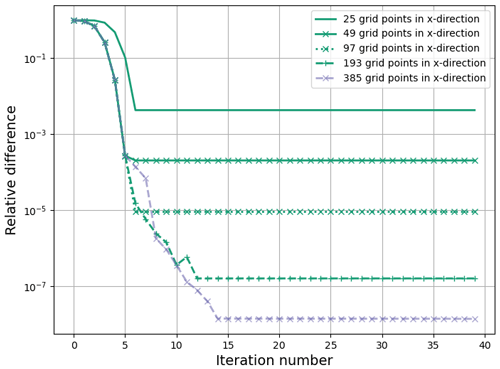

We think the reason for this behavior is that the discretized minimum of the convex function, J, and the root of the full-Stokes equations are slightly different. To verify this claim, we solve the full-Stokes equations on one grid and refinements of it, ensuring that all grid points on the coarser grids are also on the finer grids. We see the dependence on the resolution in Fig. 6. By halving the grid size, we reduce the relative difference by a bit more than a factor of 10. The increase in the relative difference from iteration 10 to 11 for the resolution with 193 grid points in the x direction seems nonintuitive. But, we remind that the Armijo step size control tries to minimize the function J not find the root.

Figure 6Experiments with 25, 49, 97, 193, and 385 grid points in x direction and 3, 5, 9, 17, and 34 grid points in z direction for the experiment ISMIP-HOM B for Newton's method with Armijo step sizes.

Also, Hirn (2013) reported accuracy problems for a small value of δ. Hirn considered a channel flow with ρg=0 and . The stopping criterion is not reached for n=10 and for n=5 for higher resolutions. In the second experiment, Hirn introduced δ>0, with and and the mesh size h. Both variants converged to the wanted accuracy. Additionally, the calculated solutions for all resolutions were not too different from the analytical solution compared to the original problem with δ0=0. Finally, Hirn (2013) counted the number of Newton iterations to reach the wanted accuracy: the variants with always converge and need a lower number of iterations compared to δ0=0.

Thus, a higher δ value could lead to the expected quadratic convergence. In contrast, the exact step sizes do not seem to have this problem as they do not rely on evaluating the function J. Newton's method with exact step sizes has the advantage that the error reduces even more without using a minimal step size. Thus, one less parameter needs to be selected. Even the Picard iteration with exact step sizes is much better than the Picard iteration without a step size control. It only needs 15 iterations to obtain the accuracy, for which the Picard iteration without a step size control needs 39 iterations. That corresponds to a reduction of 62 %. The latter approach also has the advantage that there is no need to implement a new method to solve the problem. Only the relatively simple calculation of the step sizes needs to be implemented.

The results are really similar for our second measure of the accuracy, the relative local error (see Fig. 7).

All our algorithms are better than the Picard iteration without a step size control in this experiment. The reduction with Newton's approach with both step size controls is 77 % now. The fast convergence is again impressive, especially for the first nine iterations (see Fig. 8).

5.4 The experiment ISMIP-HOM A

Because real-world applications are three-dimensional, we consider experiment ISMIP-HOM A. This experiment extends ISMIP-HOM B to three dimensions. All chosen constants are the same as in the experiment ISMIP-HOM B. The experiment ISMIP-HOM A has a sinusoidal bottom in both horizontal dimensions. Again, we have three copies of the glacier in both horizontal directions. Thus, we have in total 48 copies. We describe the surface and bottom by

5.5 Results for experiment ISMIP-HOM A

All our methods produce very similar results and overlap (see Figs. 9 and 10). Our simulations reproduce the surface velocity at from Pattyn et al. (2008) for the full-Stokes simulations for the majority of the glacier, but they produce higher velocity values than the mean added to the standard deviation around . Nonetheless, the maximum relative difference is less than 0.02 (see Fig. 9).

Figure 9Simulated surface velocity at in meters per year for different solvers for ISMIP-HOM A. All our calculated velocity fields overlap with each other. The mean and the standard deviation from Pattyn et al. (2008) with five models are plotted in grey. The mean and standard deviations have no values at x=0 and x=1 due to missing values.

Figure 10Relative difference in for each grid point at the surface at for ISMIP-HOM A. The reference solution is the solution from 80 Picard iterations without a step size control. The relative difference for Newton with exact step sizes and Newton with Armijo step sizes and a minimal step of 0.5 are nearly identical.

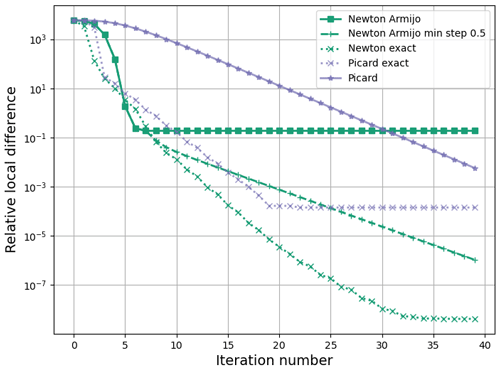

The general convergence behavior for the three-dimensional experiment is similar to the two-dimensional experiment.

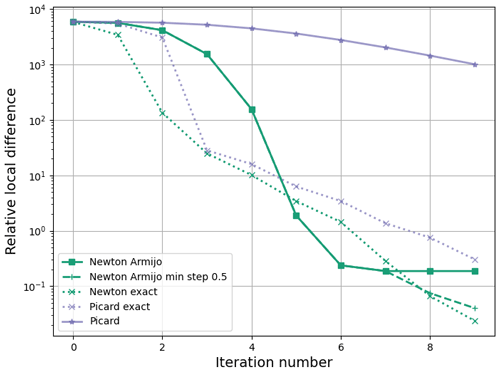

However, Newton's method is even better in three dimensions (see Fig. 11). Again, zooming to the first few iterations states the benefit from Newton's method and the step size control more impressing (see Fig. 12). The Picard iteration without a step size control needs 39 iterations to have the same accuracy as Newton's method using Armijo step sizes after 6 iterations. Thus, the necessary number of iterations is reduced by more than 85 %. Again, a minimum step size of α=0.5 helps to reduce the relative difference after a few iterations. The exact step sizes are even better. They decrease the relative difference (see Fig. 11) and the relative local difference (see Fig. 13) further than the Armijo step sizes.

For the Picard iteration, we again see a slow convergence without a step size control, especially if we consider the first few iterations (see Fig. 14). This figure emphasizes that the Picard iteration without a step size control converges slowly compared to the other methods. Exact steps lead to a great improvement.

Figure 12Relative difference compared to the reference solution for ISMIP-HOM A for the first nine iterations.

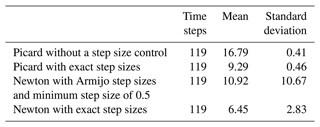

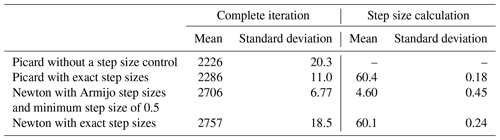

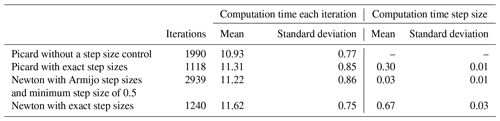

As this experiment is more realistic regarding the number of grid points, we calculated the computation time for each iteration in experiment ISMIP-HOM A (see Table 1). There are two key findings: The computation times for the Newton variants are about 20 % higher than for the Picard variants. Additionally, the step size control is computationally cheap compared to the rest of Newton's method or the Picard iteration.

However, the three-dimensional experiment has the additional uncertainty in precise computation times as we used the same processor type but a different processor on the high-performance computer.

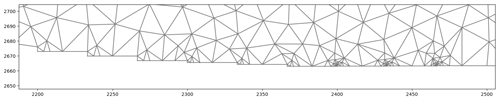

In this section, we simulate a time-dependent version of the Haut Glacier d'Arolla without and with sliding. In a first step, we verify that our model produces similar results as in the experiments ISMIP-HOM E1 and E2 (see Pattyn et al., 2008). The top and the bottom of the glacier are given by an input file. At the bottom, we have Dirichlet boundary conditions, and at the top, . The domain is represented in Fig. 15. In contrast to the stationary problems in Sect. 5, we do not have a reference solution. A stopping criterion in Gagliardini and Zwinger (2008, Sect. 2.4.2) is

To include the step size, we would multiply the right-hand side with α. This criterion is suitable for stopping the iteration in a real simulation if the velocity field only has small changes. However, checking the relative difference let the Newton variants with step size control stop earlier in our test simulation without decreasing the error as much as the Picard variants did. As we compare solvers, we need to check if G(vk,pk) is close enough to zero. Thus, we check if we are close enough to our solution compared to the initial guess:

with . A relative stopping criterion seems necessary to reduce dependence in the domain and the absolute velocities. Thus, the calculated velocity field should have an error of 0.1 % compared to the initial guess after each time step. Our initial guess for time-dependent problems is the solution of a Stokes problem before the first step. After calculating a velocity field, the surface velocity determines the new surface. The grid points are moved according to the new surface. The initial guess for the velocity on the new domain is our velocity field shifted to the new domain. The stopping criterion has the advantage that we count the number of iterations needed to reduce the error by a certain factor. Therefore, the wanted error reduction is the same for all our solvers.

Figure 14Relative local difference compared to the reference solution for ISMIP-HOM A for the first nine iterations.

Table 1Computation time in seconds without diagnostic calculations like the residual norm for the complete iteration.

We know . The Riesz isomorphism yields the existence of with

Thus, we have to solve another Stokes problem in each iteration. Note that this Stokes problem is only diagnostic, and we do not need to solve it in practice. As the numerical analysis focuses on the velocity field, we calculate our error by

For the experiment with sliding, we have to handle a difficulty arising in FEniCS: we can only force the boundary condition on horizontal and vertical boundaries. Thus, we use nine small stairs at the bottom instead of the slope in the original problem (see Fig. 16). On the bottom boundary with , we employ the boundary condition vz=0 for .

6.1 Stationary solutions

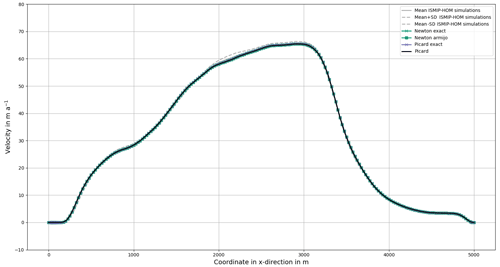

We only discuss the accuracy of our model in simulating the experiments ISMIP-HOM E1 and E2 without considering convergence speed. We discuss the convergence speed for these time-dependent problems. The simulation of the velocity field is quite similar to the results in Pattyn et al. (2008) (see Fig. 17). Our velocity field at the surface is mostly within the mean with the standard deviation of the reference solutions (see Fig. 17). In some small parts, the velocity is slightly lower.

For the experiment with sliding, the calculated velocity field is near the mean minus the standard deviation of the reference solutions in Pattyn et al. (2008) (see Fig. 18).

Often, it is even a bit less. However, it is still a suitable approximation, and we use both problems for the time-dependent simulation.

6.2 Time-dependent problem – mass transport

For the instationary problem, the surface develops dependent on the velocity field. In our case, we describe the height of the glacier by Eq. (18) (see Pattyn et al., 2008).

The height is fixed at x=0. Let be the discretization with x0=0 and xN=5000. We approximate the spatial differential quotient by an upwinding scheme:

The upwinding scheme stabilizes the solution of the discretized Eq. (18). Moreover, it helps in our experiments for the conversation of mass compared to the forward difference quotient and the central difference quotient. We use an explicit Euler method in time and conclude for the (k+1)th time step:

Together, we obtain the following for the (k+1)th time step and the ith grid point at the surface:

In our problem, the value z(x0) is fixed. Mathematically, we are not allowed to fix z(xN) because this value is determined by Eq. (18). Therefore, we add a grid point at (5000,2505), slightly above the bottom at (5000,2500). We impose on the newly generated right boundary. Hence, the mass can flow outside the glacier or physically interpreted ice is melting.

We calculate over 30 years to simulate a changing velocity field with the highest surface velocity at the right edge of the domain. We choose a time step size of 0.25 years to fulfill the CFL condition for experiment E1. In experiment E2, we choose the same time step sizes to have a comparable experiment.

6.3 Time-dependent simulation without friction

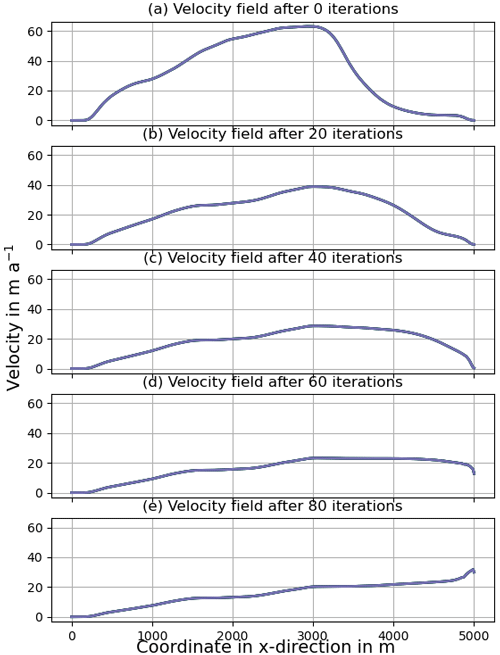

In this subsection, we visualize the velocity field of the glacier at the surface over the time simulation and discuss the computational effort for the experiment without friction.

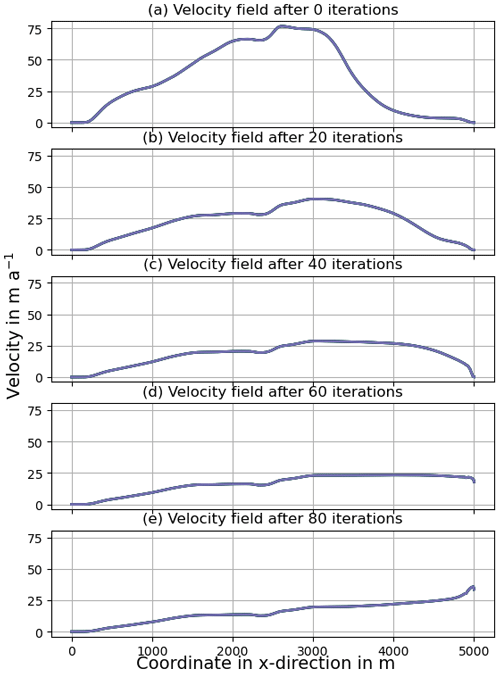

All simulations produce similar surface velocities over time (see Fig. 19).

Figure 19Surface velocity fields for the Picard iteration without a step size control, the Picard iteration with exact step sizes, Newton's method with Armijo step sizes, and Newton's method with exact step sizes. The velocity fields for the different methods are nearly identical.

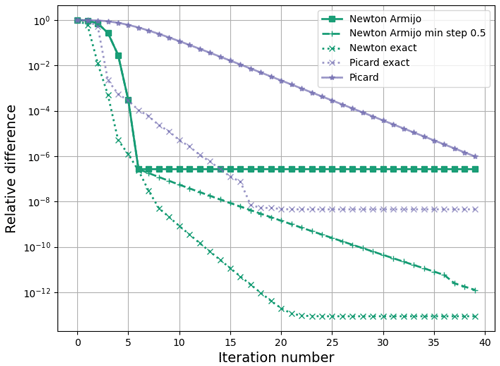

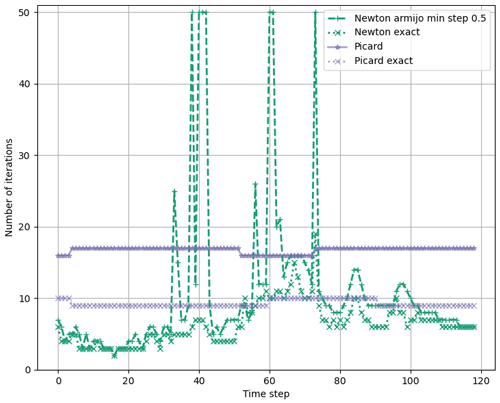

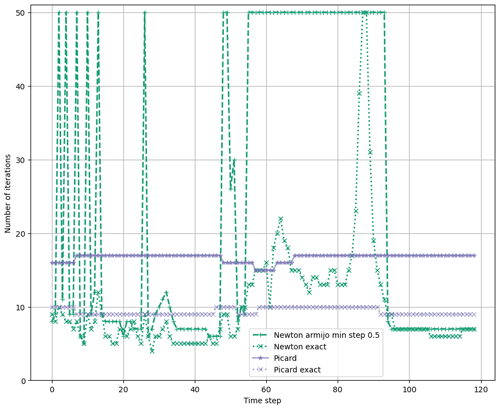

Thus, all methods seem to calculate the solution appropriately. Now, we discuss the computational effort. The number of iterations needed is shown in Fig. 20. The corresponding mean and standard deviations are in Table 2.

First, we discuss Newton's method. Armijo step sizes with a minimum step size of 0.5 have the problem that they do not always converge. This also leads to the largest standard deviation. The exact step sizes always converge and have the lowest mean. The standard deviation is still larger than for the Picard variants.

Second, we discuss the Picard variants. Without a step size control, the mean number of iterations is the highest of all four algorithms. The standard deviation is the lowest, and the number of iterations only fluctuates by one. The exact step sizes reduce the necessary number of iterations by more than 40 % and only need about two iterations more than Newton's method with these step sizes. The standard deviation is nearly the same.

Figure 20The number of iterations needed to solve the full-Stokes equations between each time step. The maximum number of iterations in each time step is set to 50.

We measured the computation time for the time-dependent experiment (see Table 3).

Table 3Computation time in seconds for the complete iteration without diagnostic calculations like the residual norm.

The computation of the step sizes takes 9 % or less of the total computation time for each iteration. The computation time for calculating diagnostics like the residual norm was not measured as it is unnecessary for the application.

6.4 Time-dependent simulation with friction

In this subsection, we visualize the velocity field of the glacier at the surface over the time simulation and discuss the computational effort for the experiment with friction.

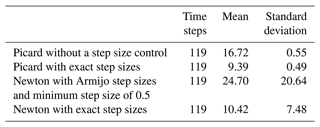

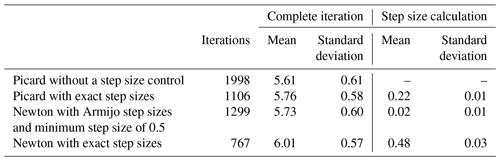

All simulations produce similar surface velocities over time (see Fig. 21). Thus, all methods seem to calculate the solution appropriately. Now, we discuss the computational effort. The number of iterations needed is shown in Fig. 22. The corresponding mean and standard deviations are in Table 4.

Figure 21Surface velocity fields for the Picard iteration without a step size control, the Picard iteration with exact step sizes, Newton's method with Armijo step sizes, and Newton's method with exact step sizes. The velocity fields for the different methods are nearly identical.

First, we discuss Newton's method. Armijo step sizes with a minimum step size of 0.5 have the problem that they often do not converge. This also leads to the largest mean number of iterations and the highest standard deviation. The exact step sizes do not converge in two cases. This also leads to a large standard deviation.

Second, we discuss the Picard variants. Without a step size control, the necessary number of iterations is nearly always the same but relatively large. The use of exact step sizes leads to the smallest mean and standard deviations. Thus, this method is the most efficient and, with a small standard deviation, also reliable. The exact step sizes for the Picard iteration are still better than for Newton's method without the two time steps that do not converge. The Picard variants also need nearly the same number of iterations for the experiment with and without sliding in contrast to the Newton variants.

Figure 22Number of iterations to solve the full-Stokes equations between each time step. The maximum number of iterations in each time step is set to 50.

We measured the computation time for the time-dependent simulation (see Table 5). The computation time for calculating diagnostics like the residual norm was not measured as it is unnecessary for the application.

Table 5Computation time in seconds for the complete iteration without diagnostic calculations like the residual norm.

The computation of the step sizes takes 6.5 % or less of the total computation time for each iteration.

Solving the full-Stokes equations is equivalent to minimizing a function. We use this function to introduce approximately exact step sizes. For a comparison, we also use Armijo step sizes. We test the algorithms for benchmark experiments with a sinusoidal bottom in two and three dimensions, for the Glacier d'Arolla benchmark experiment, and for a time-dependent variant of the Glacier d'Arolla experiment with and without sliding.

We observe that our calculated solutions are similar to those in Pattyn et al. (2008). However, the approximately exact step sizes greatly improve the convergence speed of the Picard iteration and ensure the convergence of Newton's method for nearly all situations except two cases in the time-dependent simulation with friction (see Fig. 22). Thus, the approximately exact step sizes seem to be better than the Armijo step sizes.

The computation time of the step sizes is only a small part of the complete iteration. In our experiments, the time for calculating the step sizes took 9 %, 6.5 %, and 3 %. The ratio is even smaller for higher resolutions. The concrete computation times in seconds are irrelevant as they depend on the hardware.

The effort to implement the algorithms above is relatively low. For every boundary condition added to those above, one has to check if a convex function exists. One only needs to implement these convex functions, the directional derivatives, and the Armijo and exact step sizes, respectively. The Picard iteration without a step size control or Newton's method should already be implemented for solving the full-Stokes equations.

There are a few possible directions to work on: the computation of the step size could be done more efficiently by parallelizing the calculation of the integrals and testing how many bisections are necessary for calculating the exact step sizes.

Also, more realistic three-dimensional examples or different sliding laws could be tested. The mathematical theory for a nonlinear sliding boundary condition is discussed in Schmidt (2023).

The implementation in ice models is another way to check if the presented algorithms work in real-world applications. Lastly, the step size control might reduce the number of iterations for the higher-order equations. Solving those equations is also equivalent to finding the minimum of a convex function (see Schoof, 2010).

A1 The variational formulation

For the well-posedness of the Picard iteration without a step size control (see Algorithm 1), the following applies:

has to be integrable. Due to a bounded ice rheology B and δ>0, follows the boundedness with c∈ℝ and

Thus, we need, for integrability, . This is only fulfilled for and with . Hence, we can not use (see Belenki et al., 2012, Sect. 2.3), which is the suitable space for μ0=0 as it allows for the proof of existence and uniqueness of the solution.

However, expression (A1) is well defined for . The additional diffusion term with μ0>0 verifies that the solution of the full-Stokes equations is in H. Similar reasons make the diffusion term necessary for Newton's method; the directional derivative (see Eq. 6) is only defined for .

A2 The directional derivative of G

In this subsection, we compute the derivative of G at the velocity v∈H and pressure p∈L in the direction w∈H and q∈L, with the diffusion μ0>0. Because we have a variational formulation, we can only interpret this derivative for test functions ϕ∈H and ψ∈L. We calculate

The limits for the last three lines on the right-hand side of the last equality are clear. For the first line, we use the Taylor expansion. Therefore, we define the function as

Its derivative is

We calculate the derivative by assuming we can draw the limes into the integral. A detailed explanation of why we can do this is in Schmidt (2023). We obtain the following with for the Taylor expansion:

The model is available at https://doi.org/10.5281/zenodo.10979366 (Schmidt, 2024). The latest version of the source code is available at https://github.com/Niko-ich/FEniCS-full-Stokes (last access: 13 June 2024).

The paper is written by NS with contributions and suggestions from TS and AH. The code is implemented by NS with support from TS and AH.

The contact author has declared that none of the authors has any competing interests.

Publisher’s note: Copernicus Publications remains neutral with regard to jurisdictional claims made in the text, published maps, institutional affiliations, or any other geographical representation in this paper. While Copernicus Publications makes every effort to include appropriate place names, the final responsibility lies with the authors.

The authors appreciate helpful explanations of the ISMIP-HOM experiments from Martin Rückamp from the Bavarian Academy of Sciences and Humanities and Thomas Kleiner from Alfred-Wegener-Institut in Bremerhaven. We also thank Mathieu Morlighem from Dartmouth College in Hanover, New Hampshire, for answering questions about ISSM and Fabien Gillet-Chaulet from the Université Grenoble Alpes for answering questions about Elmer/Ice. We thank the reviewers for suggesting experiments to increase the relevance of the paper and other helpful ideas to improve the paper.

We acknowledge financial support by DFG within the funding programme Open Access-Publikationskosten.

This paper was edited by Ludovic Räss and reviewed by Ludovic Räss and one anonymous referee.

COM: COMSOL Multiphysics Reference Manual, https://doc.comsol.com/5.4/doc/com.comsol.help.comsol/COMSOL_ReferenceManual.pdf (last access: 13 June 2024), 2018. a

Belenki, L., Berselli, L. C., Diening, L., and Růžička, M.: On the finite element approximation of p-stokes systems, SIAM J. Numer. Anal., 50, 373–397, 2012. a

Chen, Q., Gunzburger, M., and Perego, M.: Well-Posedness Results for a Nonlinear Stokes Problem Arising in Glaciology, SIAM J. Math. Anal., 45, 2710–2733, https://doi.org/10.1137/110848694, 2013. a

Colinge, J. and Rappaz, J.: A strongly nonlinear problem arising in glaciology, ESAIM-Math. Model. Num., 33, 395–406, https://doi.org/10.1051/m2an:1999122, 1999. a

Dennis, J. and Schnabel, R.: Numerical Methods for Unconstrained Optimization and Nonlinear Equations, Society for Industrial and Applied Mathematics, Philadelphia, xv + 375 pp., https://doi.org/10.1137/1.9781611971200, 1996. a

Fraters, M. R. T., Bangerth, W., Thieulot, C., Glerum, A. C., and Spakman, W.: Efficient and practical Newton solvers for non-linear Stokes systems in geodynamic problems, Geophys. J. Int., 218, 873–894, https://doi.org/10.1093/gji/ggz183, 2019. a, b, c

Gagliardini, O. and Zwinger, T.: The ISMIP-HOM benchmark experiments performed using the Finite-Element code Elmer, The Cryosphere, 2, 67–76, https://doi.org/10.5194/tc-2-67-2008, 2008. a

Gagliardini, O., Zwinger, T., Gillet-Chaulet, F., Durand, G., Favier, L., de Fleurian, B., Greve, R., Malinen, M., Martín, C., Råback, P., Ruokolainen, J., Sacchettini, M., Schäfer, M., Seddik, H., and Thies, J.: Capabilities and performance of Elmer/Ice, a new-generation ice sheet model, Geosci. Model Dev., 6, 1299–1318, https://doi.org/10.5194/gmd-6-1299-2013, 2013. a, b, c

Habbal, F., Larour, E., Morlighem, M., Seroussi, H., Borstad, C. P., and Rignot, E.: Optimal numerical solvers for transient simulations of ice flow using the Ice Sheet System Model (ISSM versions 4.2.5 and 4.11), Geosci. Model Dev., 10, 155–168, https://doi.org/10.5194/gmd-10-155-2017, 2017. a

Hinze, M., Pinnau, R., Ulbrich, M., and Ulbrich, S.: Optimization with PDE Constraints, Springer Netherlands, https://doi.org/10.1007/978-1-4020-8839-1, 2009. a, b, c, d

Hirn, A.: Finite element approximation of singular power-law systems, Math. Comput., 82, 1247–1268, 2013. a, b, c, d

Joughin, I., Smith, B. E., Howat, I. M., Scambos, T., and Moon, T.: Greenland flow variability from ice-sheet-wide velocity mapping, J. Glaciol., 56, 415–430, https://doi.org/10.3189/002214310792447734, 2010. a

Larour, E., Seroussi, H., Morlighem, M., and Rignot, E.: Continental scale, high order, high spatial resolution, ice sheet modeling using the Ice Sheet System Model (ISSM), J. Geophys. Res.-Earth, 117, F01022, https://doi.org/10.1029/2011jf002140, 2012. a, b

Leng, W., Ju, L., Gunzburger, M., Price, S., and Ringler, T.: A parallel high-order accurate finite element nonlinear Stokes ice sheet model and benchmark experiments, J. Geophys. Res.-Earth, 117, F01001, https://doi.org/10.1029/2011jf001962, 2012. a, b

Logg, A., Mardal, K.-A., and Wells, G. (Eds.): Automated Solution of Differential Equations by the Finite Element Method, Springer Berlin Heidelberg, https://doi.org/10.1007/978-3-642-23099-8, 2012. a, b

Nocedal, J. and Wright, S.: Numerical Optimization, Springer series in operations research and financial engineering, Springer, New York, 2nd Edn., https://doi.org/10.1007/978-0-387-40065-5, 2006. a, b, c

Pattyn, F., Perichon, L., Aschwanden, A., Breuer, B., de Smedt, B., Gagliardini, O., Gudmundsson, G. H., Hindmarsh, R. C. A., Hubbard, A., Johnson, J. V., Kleiner, T., Konovalov, Y., Martin, C., Payne, A. J., Pollard, D., Price, S., Rückamp, M., Saito, F., Souček, O., Sugiyama, S., and Zwinger, T.: Benchmark experiments for higher-order and full-Stokes ice sheet models (ISMIP–HOM), The Cryosphere, 2, 95–108, https://doi.org/10.5194/tc-2-95-2008, 2008. a, b, c, d, e, f, g, h, i, j, k, l, m, n, o, p, q, r

Rückamp, M., Kleiner, T., and Humbert, A.: Comparison of ice dynamics using full-Stokes and Blatter–Pattyn approximation: application to the Northeast Greenland Ice Stream, The Cryosphere, 16, 1675–1696, https://doi.org/10.5194/tc-16-1675-2022, 2022. a

Schmidt, N.: Global Convergence of the Infinite-Dimensional Newton’s Method for the Regularized P-Stokes Equations, Research Square [preprint], https://doi.org/10.21203/rs.3.rs-3354498/v1, 2023. a, b, c, d, e, f

Schmidt, N.: FEniCS-full-Stokes, Zenodo [code and data set], https://doi.org/10.5281/zenodo.10979366, 2024. a

Schoof, C.: Coloumb friction and other sliding laws in a higher-order glacier flow model, Math. Mod. Meth. Appl. S., 20, 157–189, https://doi.org/10.1142/s0218202510004180, 2010. a

Zhang, T., Price, S., Ju, L., Leng, W., Brondex, J., Durand, G., and Gagliardini, O.: A comparison of two Stokes ice sheet models applied to the Marine Ice Sheet Model Intercomparison Project for plan view models (MISMIP3d), The Cryosphere, 11, 179–190, https://doi.org/10.5194/tc-11-179-2017, 2017. a

- Abstract

- Introduction

- The full-Stokes equations as a root problem

- Newton's method

- Step size control

- Stationary experiments

- Instationary experiments

- Summary and conclusion

- Outlook

- Appendix A: Mathematical derivations

- Code and data availability

- Author contributions

- Competing interests

- Disclaimer

- Acknowledgements

- Financial support

- Review statement

- References

- Abstract

- Introduction

- The full-Stokes equations as a root problem

- Newton's method

- Step size control

- Stationary experiments

- Instationary experiments

- Summary and conclusion

- Outlook

- Appendix A: Mathematical derivations

- Code and data availability

- Author contributions

- Competing interests

- Disclaimer

- Acknowledgements

- Financial support

- Review statement

- References