the Creative Commons Attribution 4.0 License.

the Creative Commons Attribution 4.0 License.

The Utrecht Finite Volume Ice-Sheet Model: UFEMISM (version 1.0)

Heiko Goelzer

Roderik S. W. van de Wal

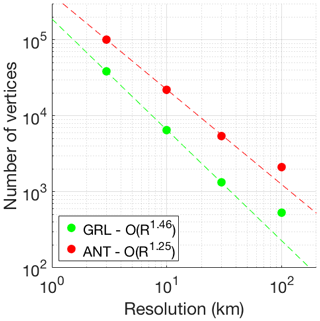

Improving our confidence in future projections of sea-level rise requires models that can simulate ice-sheet evolution both in the future and in the geological past. A physically accurate treatment of large changes in ice-sheet geometry requires a proper treatment of processes near the margin, like grounding line dynamics, which in turn requires a high spatial resolution in that specific region, so that small-scale topographical features are resolved. This leads to a demand for computationally efficient models, where such a high resolution can be feasibly applied in simulations of 105–107 years in duration. Here, we present and evaluate a new ice-sheet model that solves the hybrid SIA–SSA approximation of the stress balance, including a heuristic rule for the grounding-line flux. This is done on a dynamic adaptive mesh which is adapted to the modelled ice-sheet geometry during a simulation. Mesh resolution can be configured to be fine only at specified areas, such as the calving front or the grounding line, as well as specified point locations such as ice-core drill sites. This strongly reduces the number of grid points where the equations need to be solved, increasing the computational efficiency. A high resolution allows the model to resolve small geometrical features, such as outlet glaciers and sub-shelf pinning points, which can significantly affect large-scale ice-sheet dynamics. We show that the model reproduces the analytical solutions or model intercomparison benchmarks for a number of schematic ice-sheet configurations, indicating that the numerical approach is valid. Because of the unstructured triangular mesh, the number of vertices increases less rapidly with resolution than in a square-grid model, greatly reducing the required computation time for high resolutions. A simulation of all four continental ice sheets during an entire 120 kyr glacial cycle, with a 4 km resolution near the grounding line, is expected to take 100–200 wall clock hours on a 16-core system (1600–3200 core hours), implying that this model can be feasibly used for high-resolution palaeo-ice-sheet simulations.

- Article

(3137 KB) - Full-text XML

- BibTeX

- EndNote

The response of the Greenland and Antarctic ice sheets to the warming climate forms the largest uncertainty in long-term sea-level projections (e.g. Oppenheimer et al., 2019; van de Wal et al., 2019). Since the dynamical evolution of ice sheets has components that are slow compared to the human timescale, observational evidence alone cannot sufficiently reduce this uncertainty. Instead, reconstructions of the evolution of ice sheets during the geological past are required to improve our understanding of the long-term evolution of these systems and the constraints this provides for future ice-sheet retreat. Recent work has focused on using ice-sheet models and climate models, with varying degrees of inter-model coupling, to reproduce different periods of the geological past, with climates that have been both significantly warmer and colder than the present (e.g. Abe-Ouchi et al., 2013; Pollard et al., 2013; DeConto and Pollard, 2016; Stap et al., 2017; Berends et al., 2018, 2019; Willeit et al., 2019). These studies have highlighted and reemphasized the importance of understanding, and properly modelling, the different physical interactions between ice sheets, sea level, the solid Earth, and the regional and global climate. Since several of these processes become relevant only when significant changes in ice-sheet geometry occur, studying them requires very long (105–107 years) ice-sheet model simulations.

The dynamics of the Antarctic ice sheet at present are strongly influenced by the presence of floating ice shelves (Pattyn, 2018). Different studies have investigated the physical processes affecting these ice shelves, including surface melt induced by atmospheric processes (Bevan et al., 2017; Kuipers Munneke et al., 2018), bottom melt induced by intrusion of warm ocean water (Depoorter et al., 2013; Lazeroms et al., 2018, Reese et al., 2018a), brittle fracturing of ice cliffs (Pollard et al., 2015), and the response of the grounding line to changes in sea level and bedrock elevation (Gomez et al., 2013; Barletta et al., 2018). Ice dynamics around the grounding line have been of particular interest, with some studies suggesting that a very high model resolution (<100 m) is needed to accurately resolve the physical processes involved (Schoof, 2007; Gladstone et al., 2012; Pattyn et al., 2012, 2013). Since this not achievable for palaeo-applications, different approaches have been proposed where semi-analytical solutions (Schoof, 2007; Tsai et al., 2015) are implemented as boundary conditions in numerical models, to maintain physical accuracy at lower resolutions (Pollard and DeConto, 2012; Pattyn, 2017) of 1–40 km. However, while these approaches achieve good results in situations without buttressing (Pattyn et al., 2013), their performance in simulating realistic ice shelves, where buttressing is usually a significant factor, is poor (Reese et al., 2018b). Furthermore, a coarse model resolution can affect simulated ice-sheet evolution not just through numerical errors, but also by insufficiently resolving small-scale topographical features such as fjords and mountains (Cuzzone et al., 2019).

Ice-sheet model computation time increases rapidly with model resolution, due to the increasing number of grid points, the decreasing time step required for numerical stability, and the increasing number of topographic features that are resolved. This means that the need for both a high model resolution and long simulations results in a computational paradox. Most research groups working on palaeo-ice-sheet simulations consider the uncertainties in palaeoclimate reconstructions used as forcing to be much larger than the physical inaccuracy resulting from a low model resolution. The ice-sheet models used by these groups therefore typically have a low-resolution, fixed grid and solve a simplified version of the Navier–Stokes equations, which makes them very computationally efficient, and relatively easy to compile, run, and modify (e.g. SICOPOLIS, Greve et al., 2011; PISM, Winkelmann et al., 2011; ANICE, de Boer et al., 2014; f.ETISh, Pattyn, 2017; GRISLI, Quiquet et al., 2018; CISM, Lipscomb et al., 2019; Yelmo, Robinson et al., 2020). Palaeo-simulations of the Antarctic ice sheet with such models have used resolutions of, for example, 10 (DeConto and Pollard, 2016), 32 (Robinson et al., 2020), 40 (Berends et al., 2019), 80 (Willeit et al., 2019), or 110 km (Abe-Ouchi et al., 2013), too low to properly capture grounding line dynamics. A few existing models, which are mainly intended for relatively short (101–103 years) simulations, use high-resolution, static adaptive grids (“static” meaning that the grid is adapted to the initial ice-sheet geometry but is not updated during a simulation, e.g. ISSM, Larour et al., 2012; Elmer/Ice, Gagliardini et al., 2013; MALI, Hoffman et al., 2018) or even dynamic adaptive grids (“dynamic” meaning that the grid is adapted to the evolving ice-sheet geometry during a simulation, e.g. BISICLES (Cornford et al., 2013). These more sophisticated models solve for more terms in the Navier–Stokes equations (either the higher-order Blatter–Pattyn (Pattyn, 2003) approximation, or even the full Stokes system) using finite element methods, making them more physically accurate. While recent developments have improved their computational efficiency enough to make small-scale (single ice-sheet basin, 104 years) palaeo-simulations feasible (Cuzzone et al., 2018, 2019), they tend to be too computationally demanding for the 105–107-year simulations needed for palaeo-ice-sheet simulations.

Here, we present and evaluate a new ice-sheet model that constitutes a compromise between these two families: the Utrecht Finite Volume Ice-Sheet Model (UFEMISM). It combines the hybrid SIA–SSA approximation to the stress balance used in most palaeo-ice-sheet models with a dynamic adaptive grid, which allows it to achieve a high (<5 km) resolution near the grounding line, while retaining the computational speed required for feasibly performing long palaeo-ice-sheet simulations. This makes it especially useful for studying the impact of ice-sheet–solid Earth–sea-level interactions on long-term ice-sheet evolution. In Sect. 2, we provide a brief description of the physical equations for ice dynamics and thermodynamics that are solved by the model, as well as a description of the dynamic adaptive grid upon which those equations are solved. In Sect. 3, we present results from a number of benchmark experiments performed with UFEMISM, showing that the model output agrees well with different analytical solutions, as well as with output from other ice-sheet models. In Sect. 4, we present results from a detailed analysis of the computational performance of the model.

2.1 Overview

UFEMISM is a variable-resolution ice-sheet model. Flow velocities for grounded ice are calculated using the shallow ice approximation (SIA; Morland and Johnson, 1980), while the shallow shelf approximation (SSA; Morland, 1987) is used both for calculating flow velocities for floating ice, and as a “sliding law” for grounded ice, using the hybrid approach by Bueler and Brown (2009). These equations are discretized and solved on a dynamic adaptive grid (also called a mesh), which is generated and updated internally based on the modelled ice-sheet geometry. The resolution can vary from as coarse as 200 km over open ocean or 100 km over the interior of a large ice-sheet, to as fine as 1 km at the grounding line, with the precise numbers specified at run-time through a configuration file. Using a finite volume approach (hence the name), ice velocities and fluxes are calculated on cell boundaries, similar to the “staggered” Arakawa C grid used in many ice models based on finite differences (Arakawa and Lamb, 1977). By explicitly calculating the mass of ice moved between individual vertices in every time step, this approach guarantees conservation of mass. The model is thermomechanically coupled, solving for the diffusion and advection of heat, which enters the ice sheet through the surface and base, as well as through strain heating. For this purpose, the ice sheet is divided vertically into 15 unequally spaced layers (also configurable), decreasing in thickness near the base, where most of the deformation takes place. The resulting three-dimensional englacial temperature field is used to determine the ice viscosity.

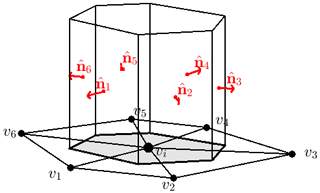

2.2 Unstructured triangular mesh

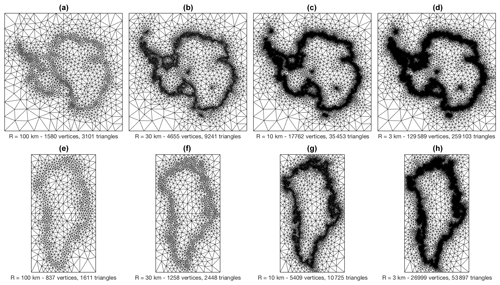

The main distinguishing feature of UFEMISM with respect to other palaeo-ice-sheet models is the unstructured triangular mesh on which the physical equations are solved. UFEMISM includes its own mesh generation code, which is based on an extended version of Ruppert's algorithm (Ruppert, 1995). This algorithm is discussed in more detail in Appendix E. Shown in Fig. 1 are four meshes generated for both Antarctica and Greenland, based on present-day ice-sheet geometry (BedMachine Greenland version 3, Morlighem et al., 2017, and BedMachine Antarctica version 1, Morlighem et al., 2019), with maximum ice-margin (including both the grounding line and the calving front) resolutions of 100, 30, 10, and 3 km, respectively. For Antarctica, two high-resolution locations are included: one at the South Pole, and one at the EPICA Dome C drill site.

Figure 1Meshes generated for Antarctica (a–d) and Greenland (e–h), based on present-day ice-sheet geometry, with maximum ice-margin (including grounding line and calving front) resolutions of 100, 30, 10, and 3 km, respectively. For Antarctica, two high-resolution locations are included: one at the South Pole, and one at the EPICA Dome C drill site. Both have been prescribed the same resolution as the ice margin.

Since the ice margin, the calving front and the grounding line are one-dimensional regions, increasing the desired resolution only over these regions results in a total number of vertices and triangles that scales almost linearly with this resolution (though not quite, as increasing the resolution resolves more geographical features, increasing the length of the lines). How the number of vertices and the computational speed of the model scale with the prescribed resolution is investigated in more detail in Sect. 4. UFEMISM uses a dynamic adaptive mesh, which is adapted to the modelled ice-sheet geometry during a simulation, so that the grounding line and other regions of interest are always captured at a high resolution, even when the ice-sheet geometry changes substantially. The exact way this is achieved is illustrated in Appendix E.

2.3 Ice dynamics

UFEMISM uses the hybrid SIA–SSA approximation to the stress balance developed by Bueler and Brown (2009). In this approximation, ice velocities over land are calculated using the SIA, whereas ice velocities for floating ice, as well as sliding velocities on land, are calculated using the SSA. The two velocity fields are then added together, following the approach developed for PISM by Winkelmann et al. (2011), who argued that the weighted average used by Bueler and Brown (2009) introduces a new degree of freedom, while not appreciably changing the solution.

The SIA relates the (depth-dependent) ice velocities u(z), v(z) directly to local ice thickness H, the temperature-dependent viscosity A(T∗), and the surface slopes (with the dependence on x, y, t in all variables left out for ease of notation):

Here, D(z) (which very much resembles the diffusivity in SIA-only ice models, e.g. Eq. 16 in Huybrechts et al., 1996) is defined as a function of the ice density ρ, gravitational acceleration g, ice thickness H, surface gradient ∇h, Glen's flow law exponent n, and the temperature-dependent ice flow factor A(T∗). For a comprehensive derivation of Eqs. (1) and (2), see for example Bueler and Brown (2009). In order to calculate ice thickness changes over time, the depth-dependent velocities in Eq. (2) are vertically averaged.

A concrete version of the SSA stress balance, expressed in terms of the vertically averaged horizontal ice velocities u, v, is given by Bueler and Brown (2009). Here, subscripts denote partial derivatives, e.g. :

The first two terms on the left describe the extensional stresses, also called “membrane stresses”. The third term describes the basal shear stress for a Coulomb-type friction law, which is commonly used in hybrid SIA–SSA models since the vanishing friction at the grounding line yields better results than the discontinuous Weertman-type friction law. The right-hand side describes the gravitational driving stress. The vertically averaged ice viscosity is described by MacAyeal (1989) as a function of ice velocity:

In order to ensure proper grounding line migration, a semi-analytical solution for grounding line flux with a Coulomb-type sliding law (Tsai et al., 2015) is applied as a boundary condition for the SSA:

The way this solution is implemented is described in detail in Appendix C.

The way these equations are discretized on the unstructured triangular mesh is derived in Appendix A. Ice thickness changes over time are then calculated using a finite volume approach: by calculating both the surface slopes and and the resulting ice velocities u and v, on the boundaries between vertices (using the “staggered mesh” approach described in Appendix A), ice fluxes between individual vertices are calculated explicitly, and moved from one vertex to the other in every time step. This guarantees conservation of mass. The finite volume approach is explained in more detail in Appendix B.

The SIA and SSA are solved asynchronously, with the time steps determined as a function of the local resolution Rc (defined as the distance between the two regular vertices connected by the staggered vertex vc), the staggered SIA diffusivity Dc, and the staggered SSA ice velocities uc, vc, similar to the approach used in PISM (Bueler et al., 2007):

2.4 Thermodynamics

UFEMISM uses a mixed implicit–explicit finite differencing scheme with a fixed time step to solve the heat equation in a flowing medium:

The three terms on the right-hand side of Eq. (7) respectively represent diffusion, advection, and strain heating (using a strain heating expression that is valid only for the SIA; a simplification that will need to be addressed in future improvements). In UFEMISM, horizontal diffusion of heat is neglected, simplifying Eq. (7) to

This equation is discretized on an irregular grid in the vertical direction; all vertical derivatives are discretized implicitly, whereas horizontal derivatives are discretized explicitly. This mixed approach has a long history of use in palaeo-ice-sheet models (e.g. Huybrechts, 1992; Greve, 1997), as it is numerically stable (since both the steepest gradients and shortest grid distances are in the vertical direction), relatively easy to implement, and fast to compute. Using 15 layers in the vertical direction, a time step of 10 years is typically sufficient to maintain numerical stability for the range of resolutions investigated here. The iterative scheme for solving this equation is derived in Appendix C.

In order to verify the numerical solution to the ice dynamical equations, we performed several benchmark experiments, where we compare our model output to analytical solutions (Halfar, 1981; Bueler et al., 2005), and to results from the EISMINT intercomparison exercise (Huybrechts et al., 1996) for the SIA part of the solution, and finally for the complete hybrid SIA–SSA to the MISMIP experiments (Pattyn et al. 2012). All of the experiments were performed using the dynamic adaptive mesh.

3.1 Verification using analytical solutions

For several schematic, simplified ice-sheet configurations, analytical solutions exist for the time evolution of the ice sheet. One of these was derived by Halfar (1981), describing a “similarity solution” for the time evolution of a radially symmetrical, isothermal ice sheet lying on top of a flat bed, with a uniform zero mass balance. For an ice sheet which, at time t0, has a thickness at the dome H0 and margin radius R0, the time-dependent solution to the SIA, with Glen's flow law exponent n, reads as follows:

Since the surface mass balance is zero, any change in ice thickness is caused only by ice dynamics, making this a useful experiment for verifying ice-sheet model numerics.

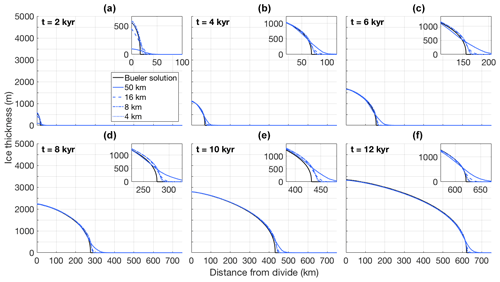

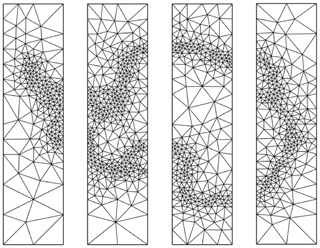

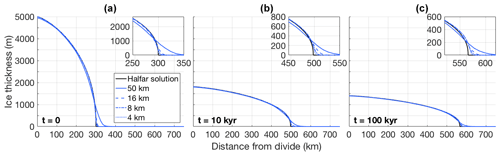

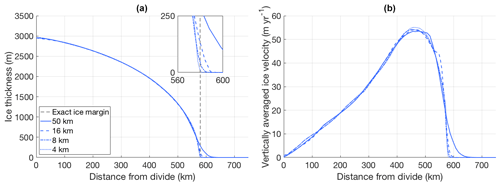

We performed several simulations with UFEMISM of an ice sheet that starts at t=t0 with the Halfar solution for H0=5000 m, R0=300 km, Pa3 yr−1, and n=3. The ice-margin resolutions for the different simulations were set to 50, 16, 8, and 4 km. Shown in Fig. 2 are transects of the simulated ice sheet at different points in time, compared to the analytical solution.

Figure 2The evolution through time of a schematic, radially symmetric, isothermal ice sheet, as simulated by UFEMISM at different ice-margin resolutions, compared to the Halfar solution starting at t=t0. The small sub-panels in the top-right corner of the panels show a zoomed-in view of the ice margin, showing how the simulated ice margin converges to the analytical solution as the model resolution increases.

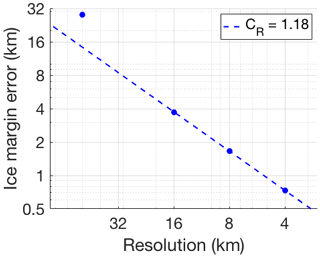

UFEMISM reproduces the analytical solution well, with the largest errors occurring at the margin, and decreasing with resolution. As was shown by Bueler et al. (2005), this is the case for all spatially discrete SIA models and is due to the fact that such models are intrinsically unable to reproduce the infinite surface slope at the margin predicted by the continuum model. They also show that these errors do not “corrupt” the model solution over the interior. This matches our results, where the modelled ice-sheet interior after 100 000 years is still close to the analytical solution. At that time, the modelled ice-sheet margin at 4 km resolution differs from the analytical solution by about 10 km.

A generalization of the solution by Halfar (1981), applicable to problems including a simple elevation-dependent accumulation rate, was derived by Bueler et al. (2005). For an accumulation given by

the solution for the ice thickness over time reads as follows:

The special case of zero mass balance, described by λ=0, gives the solution by Halfar (1981). The results of this experiment are shown in Fig. 3, for the case of λ=5, which describes a positive accumulation rate, resulting in a rapidly expanding ice sheet. Here, too, UFEMISM reproduces the analytical solution well, with the largest errors occurring at the ice-sheet margin and decreasing with resolution.

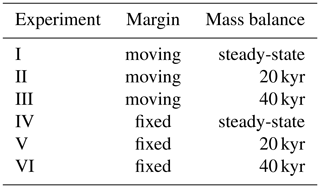

3.2 EISMINT benchmark experiments

In order to further investigate the validity of our numerical schemes for ice dynamics and thermodynamics, we used UFEMISM to perform the first set of EISMINT benchmark experiments (Huybrechts et al., 1996). All of the six experiments consist of a radially symmetric ice sheet, lying atop a flat, non-deformable bedrock. While the temperature of the ice is calculated dynamically, the ice flow factor is kept fixed at a value of Pa3 yr−1, meaning that ice temperature is a purely diagnostic variable. The six experiments are divided into two groups of three: a “moving margin” and a “fixed margin” group (Table 1). In the “fixed margin” experiments, the mass balance is such that the expected theoretical ice margin lies outside the model domain, and ice thickness at the boundary is artificially kept at zero. In realistic model configurations, such a margin (i.e. where the ice thickness does not approach zero) can occur at a calving front. A moving margin is achieved by setting a zero mass balance integral over a bounded region fully enclosed within the model domain. The three experiments within a group have different mass balances; a fixed, “steady-state” mass balance, one with an added 20 kyr sinusoid, and one with a 40 kyr sinusoid, which is useful for investigating the performance of the model in terms of temporal evolution. Simulations for each experiment were performed with ice-margin resolutions of the original EISMINT resolution of 50 km, as well as finer resolutions of 16, 8, and 4 km.

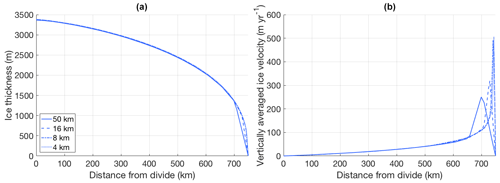

Shown in Fig. 4 are the simulated ice thickness and ice velocity of a radial transect of the ice sheet in experiment I, at the end of a 120 kyr simulation that was initialized with an ice thickness of zero. These results agree well with those presented by Huybrechts et al. (1996), showing an ice sheet that is ∼2960 m thick at the divide and has a maximum outward ice velocity of ∼55 m yr−1 at approximately 450 km away from the divide. The small sub-panel in the upper right corner of panel (a) zooms in on the ice margin, showing that the modelled ice margin converges to the analytical ice margin (the perimeter of the circle where the mass balance integrates to zero) as the resolution increases.

Figure 4(a) Ice thickness and (b) vertically averaged ice velocity of a radial transect of the ice sheet in experiment I, simulated by UFEMISM at 50, 16, 8, and 4 km ice-margin resolutions. The vertical dashed line in (a) denotes the analytical ice margin.

Shown in Fig. 5 are the same transects for experiment IV (steady-state, fixed margin), showing an ice sheet that is ∼3400 m thick at the divide, compared to 3340–3420 m in Huybrechts et al. (1996).

Figure 5(a) Ice thickness and (b) vertically averaged ice velocity of a radial transect of the ice sheet in experiment IV, simulated by UFEMISM at 50, 16, 8, and 4 km ice-margin resolutions. The sharp peaks in the velocity near the margin are a result of the singularity in these Nye–Vialov margins, and the ice thickness approaches zero as one approaches the margin, but the ice flux remains finite, implying an infinite velocity.

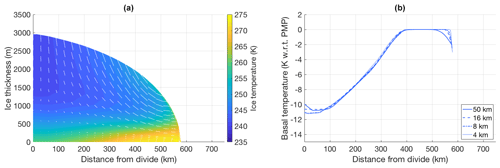

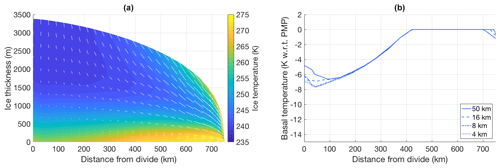

Figures 6 and 7 show the temperature transect of the ice sheet for experiments I and IV at 4 km resolution, and the basal temperature transects for all simulations in these experiments. Following the specifications from Huybrechts at al. (1996), the thermal conductivity and specific heat capacity of ice are kept fixed at uniform values of k=2.1 and cp=2009 , respectively. Here, too, results agree well with those reported by Huybrechts et al. (1996). In experiment I, basal temperatures at the ice divide are 11–12 K below the pressure melting point (PMP), increasing along the outward transect until they reach the PMP at 350–400 km from the divide. In experiment IV we see similar results, with basal temperatures at the ice divide lying around 8 K below PMP, reaching PMP slightly further towards the margin at 400–450 km from the divide. Preliminary experiments with a one-dimension set-up (vertical column only) show that these results are robust across different choices of vertical resolutions.

Figure 6(a) Ice temperature and velocity vectors for the steady-state ice sheet in experiment I, as produced by UFEMISM with a 4 km ice-margin resolution. (b) Basal temperature transects for the different simulations in the same experiment.

Figure 7(a) Ice temperature and velocity vectors for the steady-state ice sheet in experiment IV, as produced by UFEMISM with a 4 km ice-margin resolution. (b) Basal temperature transects for the different simulations in the same experiment.

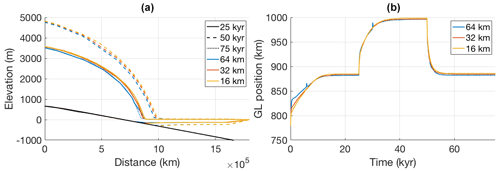

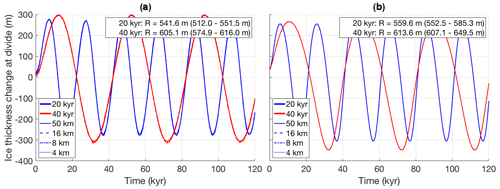

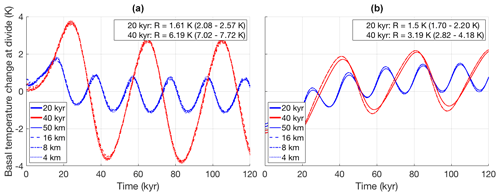

Figure 8 shows the ice thickness change at the ice divide over time for experiments II, III, V, and VI. Here too, the results from simulations with UFEMISM at different resolutions agree with the results published by Huybrechts et al. (1996). The glacial–interglacial ice thickness (G–IG) changes for all four experiments lie within the ranges reported by Huybrechts et al. (1996), as listed in the top-right corners of both panels of Fig. 8. The simulated ice thickness time series at different resolutions are not distinguishable. Figure 9 shows the basal temperature relative to the pressure melting point over time, for the same experiments. We find a G–IG temperature changes that are slightly smaller than the values reported by Huybrechts et al. (1996), lying just outside their reported ranges. In agreement with the findings of Huybrechts et al. (1996), introducing glacial cycles in the moving margin experiments (Fig. 9a) results in G–IG mean temperature decrease of about 1 K, while the fixed margin experiments (Fig. 9b) see a temperature increase of about 2.5 K.

Figure 8(a) Ice thickness change at the divide for experiments II and III (moving margin, respectively 20 (blue) and 40 kyr (red) sinusoid mass balance perturbation). (b) The same for the fixed margin experiments (V and VI), simulated by UFEMISM at 50, 16, 8, and 4 km ice-margin resolutions. Listed in the top-right corners of both panels are the glacial–interglacial ice thickness changes simulated by UFEMISM, compared to the ranges reported by Huybrechts et al. (1996) between brackets.

Figure 9(a) Basal temperature change at the divide for experiments II and III (fixed margin, respectively 20 (blue) and 40 kyr (red) sinusoid mass balance perturbation). (b) The same for the fixed margin experiments (V and VI), simulated by UFEMISM at 50, 16, 8, and 4 km ice-margin resolutions. Listed in the top-right corners of both panels are the glacial–interglacial basal temperature changes simulated by UFEMISM, compared to the ranges reported by Huybrechts et al. (1996) between brackets.

3.3 MISMIP benchmark experiment

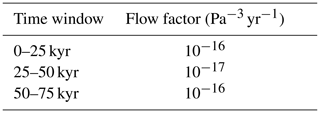

In order to validate our solution to the hybrid SIA–SSA stress balance, we performed the first MISMIP experiment (Pattyn et al., 2012), modified from a 1-D flow line experiment to 2-D plan-view experiment in a manner similar to Pattyn (2017). This schematic experiment consists of a cone-shaped island at the centre of the model grid (described by ), under a spatially and temporally uniform mass balance forcing of 30 cm yr−1. This results in a circular ice sheet, surrounded by an ice shelf that extends to the domain boundary. In order to assess grounding line dynamics in the model, the ice flow factor A is step-wise increased (leading to grounding-line advance) and subsequently decreased (leading to grounding-line retreat). Pattyn et al. (2012) showed that, while most participating ice-sheet models produce some amount of grounding line advance in the first phase, many of them failed to retreat back to their initial position in the second phase. The “best” performance (i.e. a one-to-one relation between flow factor and grounding-line position, without any hysteresis) was observed in models that included a semi-analytical solution to the grounding line flux (Schoof, 2007; Tsai et al., 2015), either as a boundary condition to the SSA, or by overwriting the numerically derived grounding line flux (typically using a heuristic rule to determine which grid cells to apply the analytical solution to). We chose the former approach in UFEMISM, using the semi-analytical solution by Tsai et al. (2015) for a grounding line flux with a Coulomb-type sliding law, as a boundary condition in the SSA. This was achieved by solving the SSA simultaneously on both the regular and the “staggered” mesh (explained in more detail in Appendix C), and keeping the values on the staggered grounding line vertices (lying halfway between a grounded and a floating vertex) fixed at the analytical solution. We then prescribe a flow factor with step-wise changes every 25 000 years, as shown in Table 2. This experiment was performed with grounding-line resolutions of 64, 32, and 16 km.

The results of this experiment with UFEMISM are shown in Fig. 10. Panel (a) shows cross sections of the modelled ice sheets at the end of the three time windows. As can be seen, the ice sheets at 25 and 75 kyr are nearly indistinguishable (as they should be for the same flow factor) and show no appreciable dependence on resolution. Panel (b) shows the grounding line position over time, showing that the grounding line retreats exactly to its initial position after the flow factor is returned to its initial value, and that the results are resolution-independent.

The first version of UFEMISM presented here is parallelized using the Message Passing Interface (MPI) construct of “shared memory”, allowing the program to run in parallel on a number of processor cores that are able to directly access the same physical memory (usually either 16, 24, or 32 cores on typical computer clusters or supercomputers). This is a temporary choice; the effort to extend the parallelization to multiple nodes, using the full capability of MPI, is still ongoing but is beyond the scope of this paper.

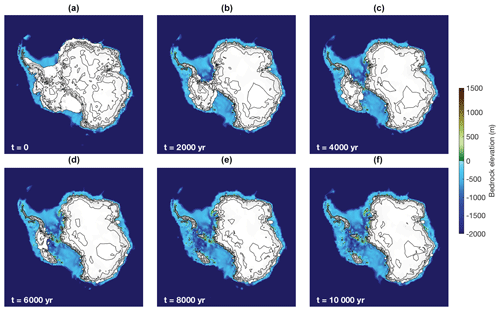

In order to investigate the computational performance of the different model components, we performed a series of simulations of Antarctic ice-sheet retreat over a period of 10 000 years. For these simulations, the climate was set to the present-day observed climate (ERA-40; Uppala et al., 2005) plus a spatially and temporally uniform 5 ∘C warming. The version of UFEMISM presented here already includes the IMAU-ITM mass balance model (Fettweis et al., 2020), so that a 5 ∘C warming leads to a substantial retreat through the increase in surface melt. The model was initialized with the BedMachine Antarctica v2 (Morlighem et al., 2019) bed topography and ice geometry. Sub-shelf melt was calculated using the temperature- or depth-dependent parameterization by Winkelmann et al. (2011) and Martin et al. (2011), using a constant uniform ocean temperature. The ice flow factor, thermal conductivity, specific heat, and pressure melting point were all calculated using the temperature-dependent expressions given by Huybrechts (1992). The basal shear stress in the Coulomb-type sliding law implemented in the SSA was calculated using the parameterizations for the till friction angle and pore water pressure from Martin et al. (2011). A constant, uniform geothermal heat flux of 1.72×106 (Sclater et al., 1980) was prescribed. No glacial isostatic adjustment or changes in sea level were included. This experiment is not meant to represent a realistic projection of Antarctic retreat; the choice of forcing is merely convenient because it ensures a rapidly changing ice-sheet geometry, forcing frequent mesh updates. The results of one of these simulations are shown in Fig. 11.

Figure 11Results from the 10 000-year Antarctic retreat simulation with a 4 km grounding line resolution. Panels (a–f) show the modelled ice sheet at 2000-year intervals. Bedrock elevation is indicated by colours, surface elevation contours are shown at 1000 m intervals.

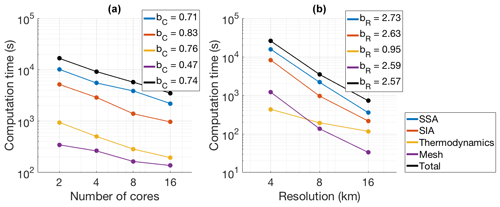

The computation times of the different model components as a function of number of cores and model resolution are shown in Fig. 12. The simulations described in Sect. 4 were run on the LISA computer cluster operated by SURFsara, using an Intel Xeon Gold 6130 Processor with a 2.1 GHz clock frequency and 22 MB cache, and were compiled with the ifort compiler.

Figure 12(a) Computation times vs. number of cores for the SSA (blue), SIA (red), thermodynamics (yellow), and mesh updating (purple) model components, as well as for the entire model (black), for a 10 000-year Antarctic retreat simulation at 8 km resolution. These four components together account for ∼99.8 % of the total computation time. Logarithmic fits of the form have been made to the data, with the scaling coefficients bC shown in the legend. (b) Computation times vs. model resolution for the same model components, run on 16 cores. Logarithmic fits of the form have been made to the data, with the scaling coefficients bC shown in the legend.

Figure 12a shows the degree of parallelization of the model components. For each model component, logarithmic fits have been made between the computation time t and the number of cores C of the form . The coefficient bC describes the degree of parallelization, such that bC=1 describes perfect parallelization, i.e. doubling the number of cores reduces the computation time by half. The three physics modules have a good degree of parallelization, ranging from bC=0.71 for the SSA to bC=0.83 for the SIA. Mesh updating scales less well, with bC=0.47. This is likely to do with the fact that the entire mesh generation code was writing by the authors themselves, instead of relying upon available external software packages. Since mesh updating accounts for only 2 %–4 % of total computation time, this does not noticeably affect the parallelization of the complete model, which has bC=0.74. The SSA dominates the total computation time across all resolutions and numbers of processors, requiring as much or more computation time as all other model components combined. The routine that applies the finite volume method described in Appendix B to update the ice thickness through time is included in the SIA computation time.

Figure 12b shows computation versus model resolution for the same model components. For each model component, logarithmic fits have been made between the computation time t and the resolution R of the form . In an idealized situation (such as the EISMINT experiments), the SIA and SSA should scale with the resolution to order bR=3 in a square-grid model (two orders from the number of grid cells, and one from the time-step dependence on resolution, according to the numerical stability criterion for the solution of the mass conservation equation). In UFEMISM, this can be theoretically reduced to order bR=2, since a high resolution is only applied over the one-dimensional ice-sheet margin, where the diffusivity is not necessarily the largest of the model domain. The reason that the results shown in Fig. 12 deviate from this idealized case is because, for a realistic ice sheet, increasing the resolution resolves more topographical features, which increases the length of the one-dimensional domains of the ice margin, the grounding line, and the calving front. This is illustrated in Fig. 13, which shows the number of vertices of meshes for Greenland and Antarctica versus the model resolution at the ice margin.

Similarly, resolving more topographical features also leads to an increased ice diffusivity in areas with steep gradients, decreasing the critical time step and increasing the computation time. Preliminary experiments with a simple square-grid model showed the same effect, and the computation time for those experiments scaled with resolution to order bR=3.5–4.0, rather than the bR=3 in the idealized case.

The computation time of the mesh updating component also scales well with model resolution, to the order bR=2.59. The thermodynamics component scales even better, with order bR=0.95. The reason that this is so much lower than the SIA and SSA components is that the thermodynamics uses a constant rather than a dynamic time step, which is related to the choice of vertical discretization rather than to the horizontal model resolution.

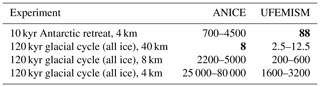

In these experiments, the entire ice margin, including the grounding line and calving front, was given the same resolution. When using UFEMISM for actual palaeo-simulations, such a high resolution would only be used for the grounding line, where it directly increases the physical accuracy of the solution. In one last experiment, we performed the same Antarctic retreat simulation, with the resolution set to 4 km for the grounding line, 16 km for the rest of the ice margin, and 200 km over the ice sheet interior. Run on 16 cores, the simulation took about 88 core hours (5 h 30 m wall-clock time) to complete. Extrapolating these numbers to the Greenland, North American, and Eurasian ice sheets is difficult, due to the differences in size, glacial–interglacial geometry changes, and relative area of floating ice. Previous work with the square-grid model ANICE (Berends et al., 2018, 2019) indicates that the Antarctic and North American ice sheets typically have roughly the same computational expense when they are at their maximum extent, while the Eurasian and Greenland ice sheets respectively require about one-half and one-quarter as much computation time. Based on these numbers, a full glacial cycle simulation of all four ice sheets would take somewhere between 1.5 and 3 times as much as one for only Antarctica, implying a required computation time of about 1600–3200 core hours (100–200 wall clock hours on a 16-core system). For comparison, a full glacial cycle simulation with ANICE at 40 km resolution takes about 8 core hours, which implies a simulation at 4 km would take about 25 000–80 000 core hours (numbers based on extrapolation), meaning that UFEMISM is about 10–30 times faster. If the grounding line resolution is decreased to 8 km, these numbers decrease to about 200–600 core hours (20–40 wall hours) for UFEMISM, and 2200–5000 core hours for ANICE. These numbers are summarized in Table 3.

Table 3Observed and extrapolated computation times (in core hours) for different simulations with ANICE and UFEMISM. Bold-faced numbers are observed times, all others are estimates based on extrapolation.

UFEMISM can be compiled with both the Gfortran and ifort compilers, requiring only LAPACK, NetCDF, and MPI as external packages, and can run on any number of processors that can access the same shared memory chip. This means that it should be possible to compile and run the model on most consumer-grade systems. The model contains roughly 200 double precision data fields. For the 3 km resolution Antarctica mesh (∼100 000 vertices) shown in Fig. 1, this implies a memory usage of about 1.6 GB. Output is written to NetCDF files at about 10 kb per vertex, implying that a 120 kyr glacial cycle simulation of Antarctica at 3 km (100 000 vertices), where output is written every 1000 model years, would generate about 150 GB of data.

We have presented and evaluated a new thermomechanically coupled ice-sheet model, UFEMISM, which solves the SIA and SSA versions of the ice-dynamical equations on a fully adaptive mesh. The model is able to accurately reproduce the analytical solutions for the evolution of schematic ice sheets by Halfar (1981) and Bueler et al. (2005), as well as the EISMINT benchmark experiments (Huybrechts et al., 1996), indicating that the numerical schemes for solving the SIA and integrating the mass conservation law are valid. In a modified version of the first MISMIP experiment (Pattyn et al., 2012), adapted from a 1-D flowline to a 2-D plan view, UFEMISM shows a grounding line position that is resolution-independent and displays no hysteresis during forced advance–retreat cycles. Analysis of the computational performance of the model indicates that it scales well with both number of processors and model resolution. Based on those results, UFEMISM should be able to simulate the evolution of the four large continental ice sheets over an entire glacial cycle, with a grounding line resolution of 4 km, in 1600–3200 core hours (100–200 wall hours on 16 processors), which is very feasible on typical facilities for scientific computation.

The MISMIP experiment (Pattyn et al., 2012) used here to validate our solution to the SSA requires further consideration. Pattyn et al. (2012) showed that simply solving the SSA without any special treatment of the grounding line results in grounding line positions that are strongly resolution-dependent and yield significant hysteresis during advance–retreat cycles, unless model resolution is lower than ∼100 m (a value that is not feasible in palaeoglaciological simulations). This problem is not particular to the SSA, as the same problems are observed in the full-Stokes model Elmer/ice, where a ∼30 km hysteresis in grounding-line position is observed at a model resolution of 200 m (Gagliardini et al., 2016). Different semi-analytical solutions for the ice flux across the grounding line in the absence of buttressing have been proposed (Schoof, 2007; Tsai et al., 2015), and Pattyn et al. (2012) showed that using these solutions as a boundary condition decreases both the resolution dependence and the hysteresis (which is confirmed by our own results presented here). However, implementing these analytical solutions in 3D numerical models has proven difficult (Pollard and DeConto, 2012; Pattyn et al., 2013), and recent work has demonstrated that these analytical solutions cannot (yet) be feasibly altered to account for buttressing (Reese et al., 2018b), which is absent in the MISMIP experiment we performed, but which plays an important role at the majority of Antarctic grounding lines (Reese et al., 2018b). Our model therefore meets the same performance standards as existing models for grounding line dynamics but probably does not produce correct grounding line dynamics under all circumstances, as the role of buttressing remains a matter of debate. Just how large an effect this has on palaeo-ice-sheet dynamics relative to the uncertainties arising from proxy data, palaeoclimate forcing, and other physical processes, is still unclear.

The current version of UFEMISM has been parallelised using MPI shared memory, meaning the number of processors that can run the model depends on the hardware system, limited by how many processors can access the same memory chip. The three most computationally expensive model components (the SSA, SIA, and thermodynamics modules, respectively) are shown to scale well. Extending the parallelisation framework to allow the model to run on multiple nodes might therefore substantially reduce the wall clock time for large simulations. The current version of UFEMISM uses domain decomposition to parallelize the mesh generation algorithm. This approach lends itself well to multi-node parallelisation, as each node can be assigned a region of the model domain.

The iterative scheme used in solving the SSA is currently the most computationally demanding model component, requiring as much or more computation time as all the other model components combined. Preliminary experiments have identified several possible solutions that could substantially reduce this, including a spatially variable relaxation parameter (see Appendix B) and/or a multigrid scheme. Efforts to develop these solutions into applications that are robust and stable enough for the large-scale, long-term simulations for which UFEMISM is intended are ongoing but are beyond the scope of this study.

As UFEMISM is intended to be used for palaeo-simulations, it has been developed to be able to include an elaborate climate forcing and mass balance forcing. Previous work by our group has focused on developing a computationally efficient climate forcing that explicitly includes important feedback processes in the ice–climate system, such as the altitude–temperature feedback, ice–albedo feedback, and orographic precipitation feedback (Berends et al., 2018). While these solutions have not yet been implemented in UFEMISM, it has been designed with these solutions in mind, so that implementing them should be straightforward. Future work will also focus on improving the thermodynamics (probably using an energy-conserving enthalpy-based approach along the lines of Aschwanden et al., 2012), adding a basal hydrology model (Bueler and van Pelt, 2015), and including recently developed parameterizations for cliff failure and shelf hydrofracturing (Pollard et al., 2015). We have also previously worked on developing a coupled ice-sheet–sea-level model (de Boer et al., 2014). A key improvement in UFEMISM with respect to our previous ice-sheet model is the improved treatment of grounding line dynamics, which makes it important to accurately account for changes in the geoid and the resulting changes in water depth at the grounding line. While UFEMISM has not yet been coupled to a sea-level model, it has been designed with such a future coupling in mind.

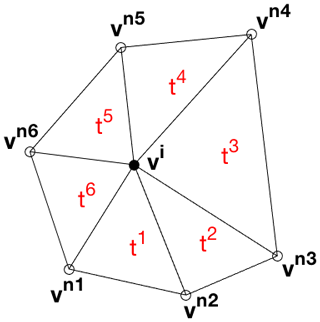

A1 First-order partial derivatives

In UFEMISM, derivatives are discretized on the unstructured triangular mesh using an averaged-gradient approach. In short, gradients are defined on the triangles surrounding a vertex (which are piecewise smooth surfaces, having unique gradients). The gradient on a vertex is then defined simply as the average of the gradients on the surrounding triangles. In the following derivation, we will show that this results in a linear combination of the function values on a vertex and its direct neighbours. These coefficients, which we here call “neighbour functions” (also known as “numerical stencils”), are a function of mesh geometry, which means they need to be calculated only once for a new mesh, and can be stored in memory for later use. This averaged-gradient approach is very similar to an unweighted least-squares approach (Syrakos et al., 2017), and in Sect. A3 we will show that, as expected, the resulting accuracy is second-order convergent for the first derivatives fx and fy, and first-order convergent for the second derivatives fxx, fxy, and fyy.

Before getting started, we introduce “star notation” as shorthand for the modulo function: . Using this notation, “the next neighbour vertex counter-clockwise from neighbour s” becomes , while “the next surrounding triangle clockwise from triangle t” becomes .

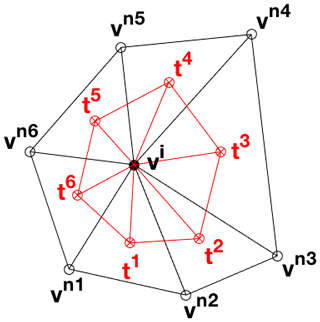

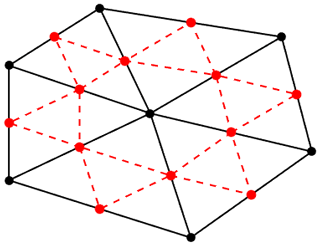

Consider the unstructured triangular mesh in Fig. A1. We first define the partial derivatives fx and fy of a function f on the triangles surrounding vertex i. Since these triangles are plane sections, they have well-defined first-order derivatives, which can be expressed using the normal vector to the plane. Treating the value fi of f on vertex i as a coordinate in a third dimension, we can define , such that the upward normal vector nt to triangle t, spanned by vi, vt, and , is given by

The first-order spatial derivatives and of f on t are then given by

These expressions can be simplified by introducing the “neighbour functions” on triangle t:

Equation (A2) can then be written as

We approximate the derivative of f on vertex i by averaging the derivatives on the n surrounding triangles:

Lowering the indices in the last sum term by one, this can be rearranged to read as follows:

This can be simplified by introducing the neighbour functions on vertex i:

This simplifies Eq. (A6) to

For vertices lying on the domain boundary, which therefore have n neighbours but n−1 surrounding triangles, it can be shown that the neighbour functions can be expressed as

A2 Second-order partial derivatives

In order to discretize the second derivatives fxx, fxy, and fyy, we treat the geometric centres of the triangles surrounding vertex i as “staggered” vertices, where the staggered first derivatives are calculated according to Eq. (A4). We then construct a new set of “sub-triangles”, spanned by the vertex i and these staggered vertices, as illustrated in Fig. A2. Since we know the values of fx and fy on all of them, we can use the same approach as before to calculate the gradients of the first derivatives (i.e. the second derivatives) on the sub-triangles, and average them to get the values on vertex i. Preliminary experiments showed that using the geometric centre instead of the circumcentre yields more stable solutions when using these to solve differential equations. A mathematical proof of why this is the case might be interesting but lies beyond the scope of this study.

Figure A2The geometric centres of the triangles surrounding vertex vimake up the vertices of a new set of “sub-triangles”, which can be used to define the second derivative of a function on vertex vi.

A new, staggered vertex in triangle t is created, using the horizontal coordinates of the geometric centre of t, and the derivative of fon t as the vertical coordinate:

The sub-triangle s is spanned by , , and , where (with as given by Eq. A8). The normal vector to sub-triangle s (using the derivatives of f on the vertex i and the triangles s and as the vertical coordinate) is then given by

The second-order derivatives and of f on s are then given by

We then introduce the “sub-triangle neighbour functions”, similar to Eqs. (A3) (which, again, depend only on mesh geometry):

Substituting these into Eqs. (A12) yields

We then substitute Eqs. (A4) and (A8) into Eq. (A14). From here on, only the xx derivative is shown – xy and yy follow similar derivations:

Again, we approximate the second derivative of f on i by averaging over the surrounding sub-triangles:

First, we isolate the neighbour function of vertex i itself:

Then, we rearrange the sum terms containing by lowering the summing index by one:

We do the same for the term containing :

Then, by observing that the inner sum in the last term in Eq. (A16) does not depend on the index of the outer sum, we arrange this term to

Substituting Eqs. (A17)–(A20) into Eq. (A16) yields

As we see, this simplifies into the same form as Eq. (A8):

The neighbour functions for the second derivative (and, following the same derivation, and ) of f on a non-boundary vertex i are therefore given by

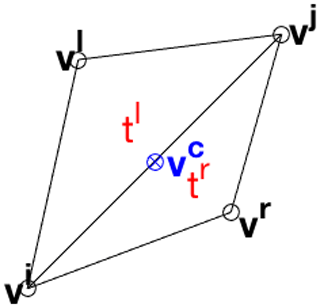

A3 First-order partial derivatives on staggered vertices

In order to describe fluxes between adjacent vertices, we need to define a discretization of the derivatives on a point lying in between those two vertices. While it is possible to just take the average value of the derivatives on the vertices themselves, as defined by Eq. (A8), the resulting value would be a linear combination of the function values on all the neighbours of these two vertices, resulting in an undesirable degree of numerical diffusion.

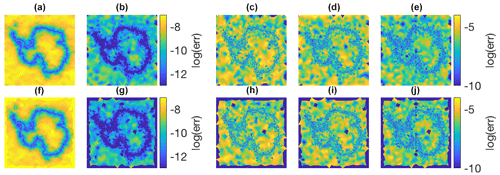

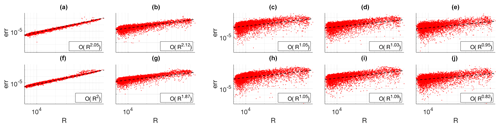

Figure A4(a–e) Discretization errors for the averaged-gradient approach used in UFEMISM for, from left to right, fx, fy, fxx, fxy, and fyy. (f–j) The same for the least-squares approach from Syrakos et al. (2017).

Figure A5(a–e) Discretization errors vs. mesh resolution for the averaged-gradient approach used in UFEMISM for, from left to right, fx, fy, fxx, fxy, and fyy. (f–j) The same for the least-squares approach from Syrakos et al. (2017). Log-linear fits have been made to all errors, with the order of convergence shown in the legends. Both approaches show very similar absolute errors and rates of convergence.

Consider the simple mesh in Fig. A3, consisting of four vertices spanning two triangles. We defined the staggered vertex vc as the midpoint between vertices vi and vj. On the two adjacent triangles tl and tr, the derivatives , , , and are well-defined:

Similar to the approach on the regular rectangular Arakawa C grid, we define the derivatives and of f on vc as the average of the values on the two adjacent triangles:

The purpose of the Arakawa C grid is to separate velocities on cell boundaries into components parallel and orthogonal to those boundaries. The component orthogonal to the boundary carries a flux from one cell into another, while the parallel component does not. On the mesh, this is complicated by the fact that the orientation of boundaries is not fixed. Let u be the vector pointing from vi to vj:

The parallel and orthogonal derivatives and of f on vc are then given by

It can be shown that this results in

This is, of course, simply the slope of the line between vi and vj.

A4 Convergence of the discretization scheme

The discretization errors and convergence behaviour of the neighbour functions are shown in Figs. A4 and A5.

Here, the derivatives of a simple function are calculated on a mesh generated by UFEMISM for Antarctica, and compared to their analytical value. The absolute errors are shown in Fig. A4, clearly showing the dependence on grid resolution (which is finest at the grounding line). This dependence is shown in Fig. A5, with log-linear curves fitted for all five derivatives showing the expected second- and first-order convergence for the first and second derivatives, respectively. For comparison, the same errors and convergence rates are shown for the least-squares approach derived by Syrakos et al. (2017).

To demonstrate convergence in the ice-sheet model itself, Fig. A6 shows the error in the modelled steady-state ice-margin radius for EISMINT experiment I, which decreases linearly with resolution. This matches the results of the previous section, as the SIA is a diffusive equation, meaning that the change in ice thickness is dictated strongly by the curvature of the surface (a second-order partial derivative, which the earlier convergence experiment shows to be first-order convergent).

In UFEMISM, ice thickness changes over time are calculated using a finite volume approach, which is conceptually very similar to the more commonly used combination of finite differencing with a staggered grid. Consider the conservation law for flowing ice, equating the time derivative of the ice thickness H to the (two-dimensional) divergence of the ice flux (being the product of H and the vertically averaged ice velocity v), and the balances M:

By applying the divergence theorem, this can be rewritten as

Here, Ω is some arbitrary 2-D region (the control “volume” after which the finite volume approach is named), enclosed by the 1-D curve ∂Ω with outward unit normal vector . The unstructured triangular mesh partitions the 2-D domain into Voronoi cells, which function as the control volumes (see Fig. B1).

The conservation law for a single Voronoi cell reads as follows:

Here, the Voronoi cell of vertex i shares the boundary ∂c with that of neighbouring vertex c. The equation is then discretized in space by assuming that the ice velocity v and the ice thickness H are constant on ∂c, so that the line integral becomes a simple multiplication with the length Lc of the shared boundary ∂c:

Lastly, the equation is discretized explicitly in time:

In order to solve this equation, we need to know the ice velocities vc on the Voronoi cell boundaries. Both the SIA and the SSA are therefore solved on the staggered vertices described in Appendix A. The staggered ice thickness Hc is determined using an up-wind scheme (Hc=Hi if ice flows from i to j, Hc=Hj if it flows from j to i). This means that the finite volume approach is essentially identical to the “mass-conserving up-wind finite difference scheme” used in PISM (Winkelmann et al., 2011), and very similar to the combination of finite differences with a staggered grid used in many other ice-sheet models.

A concrete form of the stress balance resulting from the SSA is given by Bueler and Brown (2009), based on the work by MacAyeal (1989) and Weis et al. (1999):

The first two terms on the left-hand sides of these equations describe the membrane stresses, in terms of the horizontal ice velocities u and v, the vertically averaged ice viscosity , and the ice thickness H. The last terms on the left-hand side describe the basal stress resulting from a Coulomb sliding law, in terms of the till yield stress τc (which is spatially variable and is zero for floating ice) and the horizontal ice velocities u and v. These stresses are balanced out by the driving stress, which is described on the right-hand sides in terms of the ice thickness H and the surface slopes hx and hy. In order to simplify Eq. (C1) we follow the approach by Determann (1991) and Huybrechts (1992), where the lateral variations of the effective strain rate are neglected. Determann (1991) and Huybrechts (1992) show that, since these terms are small compared to variations of the individual strain rates, this does not significantly affect the solution, while improving numerical stability and computational efficiency. Using this simplification, and setting the basal shear stress , Eq. (C1) simplifies to

Although both and τb are functions of u and v, this dependence is relatively weak. Bueler and Brown (2009) show that a good approach to solving these equations is to use two nested iterative loops: an inner loop that solves u and v for a given and τb, and an outer loop that updates and τb with the new values of u and v. They show that all four variables converge to a unique, stable solution. Since and τb are not updated in the inner loop, the derivation for the relaxation iteration is greatly simplified. Replacing the derivatives of u and v in Eq. (3) with their discretized approximations (as derived in Appendix A) yields

Isolating ui and vi yields

Defining the “centre coefficients” and and rearranging the terms in Eq. (5) yields

These equations now express the function values ui and vi in terms of the values of their direct neighbours, in the form . This can be adapted into an SOR iteration of the form . This yields the following equations:

Rearranging these terms gives the equations that can be found in the UFEMISM source code:

Finding the optimal value for the relaxation parameter ω, to achieve the highest possible convergence without creating numerical instability, is one of the most-studied subjects in numerical mathematics. In the experiments described in Sects. 3 and 4, a value of 1.1 was used; in the schematic MISMIP experiments higher values were often possible, resulting in substantially faster convergence, but this sometimes resulted in instability in the realistic experiments, which have steeper gradients in surface slopes and ice viscosity. Preliminary experiments with UFEMISM have suggested that, when ω is too high, numerical instability primarily occurs at vertices that are surrounded by sharp triangles. This suggests that, in order to optimize computational performance, ω could be made into a spatially variable field ωi, with values depending on mesh geometry. Further preliminary experiments showed that, while this does indeed significantly improve performance, it is difficult to create a robust setup that is guaranteed to either always avoid, or otherwise cope with, numerical instability. Solving this problem is deemed to be beyond the scope of the current study.

Since ice velocities are desired on the staggered vertices, this is where the SSA must be solved. However, the neighbour functions for staggered vertices, derived in Appendix A, only work for the first-order partial derivatives. With only four surrounding regular vertices, it is not possible to uniquely define all five first- and second-order partial derivatives. This problem is solved by solving the SSA on both the regular and the staggered vertices simultaneously (see Fig. C1). Each staggered vertex is then surrounded by six neighbours (two regular vertices and four staggered vertices), and both the first and second derivatives can be discretized using neighbour functions. The SSA, which includes the second derivatives of the ice velocities, can then be solved on the staggered vertices, so that it can be used in the finite volume approach.

Figure C1By combining the regular (black) and staggered (red) vertices into a single mesh, each staggered vertex has six direct neighbours, so that neighbour functions for both the first and second derivatives can be calculated, using the approach from Appendix A.

The semi-analytical solution to the grounding-line flux by Tsai et al. (2015) given by Eq. (4) is implemented as a boundary condition to achieve better grounding-line dynamics. This is done by designating staggered vertices as grounding-line vertices if they lie between a grounded and a floating regular vertex. If this is the case, the analytical ice flux solution is calculated for this staggered grounding-line vertex (finding the sub-grid grounding-line ice thickness by interpolating the thickness above flotation between the two regular vertices). The flux is divided by the ice thickness to find the velocity, which is assumed to have a direction antiparallel to the gradient of the thickness above flotation (i.e. perpendicular to the grounding line). During the SOR iteration, ice velocities at these staggered grounding-line vertices are simply kept fixed to this analytical solution. Other implementations that do not alter the SSA solution, but rather overwrite the ice flux in the free surface update, require a “heuristic” to determine which grid cell to apply the flux to (an approach which violates conservation of mass). Our approach circumvents this problem.

In order to calculate a depth-dependent temperature distribution, a vertical spatial discretization is required. Following the approach used by many ice-sheet models, we adopt the scaled vertical coordinate:

This guarantees that the top and bottom of the vertical ice column always coincide with the first and last vertical grid point, respectively. This coordinate transformation results in the appearance of a few extra terms in the heat equation (Eq. 5):

The different spatial derivatives of ζ follow from Eq. (C1):

The scaled vertical derivatives of a function, e.g. and , can be discretized to yield expressions of the following form:

These expressions are very similar to the neighbour functions that were used in Appendix A. We will not include their derivation here. Using these expressions to discretize the vertical derivatives in Eq. (D2), and using an implicit time discretization, yields

This can be rearranged to read

This system of equations can be represented by the matrix equation , where the lower diagonal α, central diagonal β and upper diagonal γ of the tridiagonal matrix A, and the solution δ, are given by

This matrix equation has to be solved for every individual grid cell. In UFEMISM, this is done with the Fortran package LAPACK; in MATLAB, it can be done with the “backslash method”: T = A\delta. We apply a Dirichlet boundary condition at the top of the column, keeping ice temperature equal to surface air temperature. At the base, a Neumann boundary condition is applied, keeping the vertical temperature gradient fixed to a value dictated by the geothermal heat flux and the frictional heating from sliding. Ice temperature throughout the vertical column is limited to the depth-dependent pressure melting point.

UFEMISM uses a modified version of Ruppert's algorithm to generate the unstructured triangular meshes on which the model equations are solved. Ruppert's algorithm (Ruppert, 1995) iteratively adds vertices to an existing Delaunay triangulation (a process called refinement), until the smallest internal angle of all triangles no longer lies below a pre-defined threshold value (typically 25 degrees). Since the stability of many numerical iterative schemes for solving differential equations strongly depends on this particular property of the mesh geometry (Ruppert, 1995), Ruppert's algorithm is a very useful tool for generating meshes suitable for use in physical models. During mesh refinement, a triangle is marked as “bad” if its smallest internal angle lies below the threshold value. If this is the case, the triangle is “split”, by adding a vertex at the triangle's circumcentre, and updating the Delaunay triangulation. Ruppert (1995) showed that, as long as the perimeter of the mesh does not contain any angles sharper than the threshold value, this algorithm always converges, i.e. results in a mesh with no “bad” triangles and a finite, typically small number of vertices.

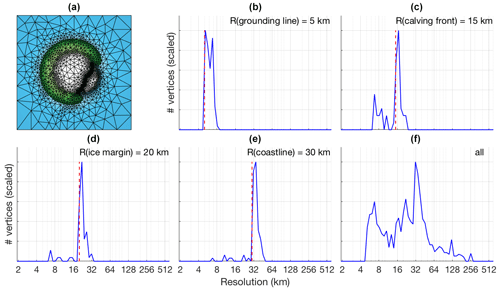

Figure E1The results of a schematic experiment, where an ice sheet and shelf are simulated over a small island with an embayment on the south-east side. Blue indicates open ocean, green indicates ice-free land, white indicates ice. (b–f) Resolution distributions for, respectively, the grounding line, the calving front, the ice margin, the coastline, and the entire mesh. The resolutions that have been prescribed for these different regions are indicated by the vertical dashed red lines.

In UFEMISM, several additional conditions have been added which can cause a triangle to be marked as “bad” and which depend upon the triangle's “model content” and size. If, for example, the modelled grounding line passes through a triangle whose longest edge exceeds the specified maximum grounding line resolution, that triangle is also marked as “bad”.

Figure E1a shows the results of a simple schematic experiment, where an ice sheet and shelf are simulated on a semi-circular island, which has a small embayment on the south-east side (so that a small ice shelf forms). This configuration serves only to provide a clear separation of the different areas of interest, so that the behaviour of the mesh generation code can be investigated when different resolutions are prescribed for the grounding line, the calving front, the ice margin on land, and the coastline. Figure E1b–f show the resolution distribution for vertices lying on these lines, with the prescribed resolutions shown by the vertical dashed red lines. As can be seen, the resulting resolutions are very close to their desired values.

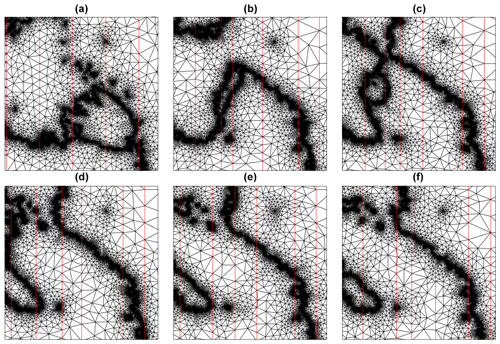

Figure E3The meshes of the Antarctic retreat simulation shown in Fig. 11, zoomed in on the Ross Ice Shelf. The retreat of the ice sheet is clearly visible in the mesh structure, as are the processor domains resulting from the load-balanced domain subdivision (borders highlighted in red).

Extensive preliminary experiments showed that this particular approach to mesh generation is robust, resulting in a mesh with resolutions over the specified areas that lie very close to the specified value. Other conditions for mesh refinement that can be included, but will not be discussed here, include a maximum vertical error in surface elevation based on surface curvature (useful for the interior close to the ice margin), and a maximum resolution derived from ice velocity (useful for ice streams). Lastly, a maximum resolution can be prescribed at specific geographic locations, such as ice core drill sites, which is demonstrated in Fig. 1. Since future plans for UFEMISM include the addition of a tracer tracking module, this feature will be very useful for creating pseudo-ice cores which can be directly compared to observations. The resolutions shown in Fig. E1 were chosen simply as a demonstration. As far as we have been able to find in preliminary experiments, there is no hard limit to how fine a resolution can be prescribed. In practice, the resolution for palaeo-simulations will always be limited by the computation time.

Mesh generation has been parallelised by subdividing the model domain into separate regions for each processor, which generates a mesh for only that section. These “submeshes” are then merged to produce one single mesh that covers the entire model domain. An example of four such submeshes that have been generated in parallel for Antarctica is shown in Fig. E2. Although these particular submeshes have equally sized domains (for illustration purposes), in general the size of each subdomain is chosen such that they contain approximately equal numbers of vertices, to ensure proper load balancing. The results presented in Sect. 4 show that this approach results in a computation time that scales with the number of processors to order 0.47. Note that this form of domain partitioning is only used in the parallelisation of the mesh generation. Once a mesh has been generated, the use of MPI shared memory means that every processor can access all data on the entire mesh, and operations are partitioned simply by number of vertices.

The mesh adapts to the ice-sheet geometry as it evolves through time. During a simulation, a “mesh fitness factor”, defined as the fraction of triangles that still meet all the fitness criteria in the extended version of Ruppert's algorithm, is calculated periodically (with a time step that can be specified through the configuration file, typically 50 years). For a newly generated mesh, this fraction is, by definition, 100 %. As the ice-sheet geometry evolves over time, the fitness factor slowly decreases. When it falls below a prescribed threshold value (typically 95 %), a mesh update is triggered, and an entire new mesh is generated. In glacial cycle simulations, the typical time between mesh updates is 50–500 model years. Although Ruppert's algorithm provides a very intuitive rule for refining a mesh, no such rules can be easily defined for “un-refining” a mesh. The simplest approach is therefore to generate an entirely new mesh once the fitness of the current mesh falls below the threshold value. Figure E3 shows the meshes of the Antarctic retreat simulation shown in Fig. 11, zoomed in on the Ross Ice Shelf. Here we see how the high-resolution sections of the mesh follow the retreating ice sheet, ensuring the grounding line is always resolved at the desired resolution. The parallelisation by domain subdivision is also visible; the borders between the domains of the different processors are indicated by the red lines.

Once a new mesh has been generated, the ice thickness and englacial temperature are remapped from the old mesh to the new one, using conservative remapping based on the method by Jones (1999), adapted from spherical to Cartesian coordinates. Extensive preliminary experiments have shown that conservative remapping is crucial for achieving accurate results; using simple trilinear interpolation, or other easily implemented approaches, results in ice sheets that deviate significantly from the analytical solutions of the Halfar and Bueler benchmark experiments discussed in Sect. 3.1, especially when a high resolution is used (since this results in more frequent mesh updates). In the EISMINT experiments, ice thickness is less affected since this is mostly dictated by the mass balance forcing, but large errors occur in the englacial temperature when non-conservative remapping schemes are used.

The Fortran90 source code of UFEMISM, as well as a collection of MATLAB scripts for analysing and visualizing output data, and some more elaborate documentation are freely available at https://github.com/IMAU-paleo/UFEMISM (Berends et al., 2021). The different benchmark experiments described in Sect. 3 can be run with the supplied configuration files. The Antarctic retreat simulations described in Sect. 4 require input files describing the present-day Antarctic ice sheet and climate. For the ice-sheet and bedrock geometry, we used the Bedmachine Antarctica v1 data by Morlighem et al. (2019), which is freely available as supplementary material with their publication. For the present-day climate we used the ERA-40 reanalysis (Uppala et al., 2005), which can be downloaded from the ECMWF website (https://www.ecmwf.int/, last access: 18 December 2019) after creating a free user account.

CJB created the model and performed the experiments, with some helpful input from HG. CJB drafted the first version of the manuscript, and all authors contributed to the final version.

Heiko Goelzer is a member of the editorial board of Geoscientific Model Development.

This publication was generated in the framework of Beyond EPICA. The project has received funding from the European Union's Horizon 2020 research and innovation programme under grant agreement no. 815384 (Oldest Ice Core). It is supported by national partners and funding agencies in Belgium, Denmark, France, Germany, Italy, Norway, Sweden, Switzerland, the Netherlands, and the United Kingdom. Logistic support was mainly provided by PNRA and IPEV through the Concordia Station system. Heiko Goelzer was funded by the program of the Netherlands Earth System Science Centre (NESSC; Dutch Ministry of Education, Culture and Science (OCW) grant no. 024.002.001). The use of supercomputer facilities was sponsored by NWO Exact and Natural Sciences. Model runs were performed on the LISA Computer Cluster, we would like to acknowledge SurfSARA Computing and Networking Services for their support. The opinions expressed and arguments employed herein do not necessarily reflect the official views of the European Union funding agency or other national funding bodies. This is Beyond EPICA publication number 16.

This paper was edited by Alexander Robel and reviewed by Ralf Greve and Josefin Ahlkrona.

Abe-Ouchi, A., Saito, F., Kawamura, K., Raymo, M. E., Okuno, J., Takahashi, K., and Blatter, H.: Insolation-driven 100,000 year glacial cycles and hysteresis of ice-sheet volume, Nature Letters, 500, 190–194, 2013.

Arakawa, A. and Lamb, V. R.: Computational Design of the Basic Dynamical Processes of the UCLA General Circulation Model, Methods in Computationl Physics: Advances in Research and Applications 17, 173–265, 1977.

Aschwanden, A., Bueler, E., Khroulev, C., and Blatter, H.: An enthalpy formulation for glaciers and ice sheets, J. Glaciol., 58, 441–457, 2012.

Barletta, V. R., Bevis, M., Smith, B. E., Wilson, T., Brown, A., Bordoni, A., Willis, M., Abbas Khan, S., Rovira-Navarro, M., Dalziel, I., Smalley, R. J., Kendrick, E., Konfal, S., Caccamise, D. J. I., Aster, R. C., Nyblade, A., and Wiens, D. A.: Observed rapid bedrock uplift in Amundsen Sea Embayment promotes ice-sheet stability, Science, 360, 1335–1339, 2018.

Berends, C. J., de Boer, B., and van de Wal, R. S. W.: Application of HadCM3@Bristolv1.0 simulations of paleoclimate as forcing for an ice-sheet model, ANICE2.1: set-up and benchmark experiments, Geosci. Model Dev., 11, 4657–4675, https://doi.org/10.5194/gmd-11-4657-2018, 2018.

Berends, C. J., de Boer, B., Dolan, A. M., Hill, D. J., and van de Wal, R. S. W.: Modelling ice sheet evolution and atmospheric CO2 during the Late Pliocene, Clim. Past, 15, 1603–1619, 2019.

Berends, C. J., van de Wal, R. S. W., and Goelzer, H.: The Utrecht FinitE voluMe Ice-Sheet Model, Github, available at: https://github.com/IMAU-paleo/UFEMISM (last access: 4 May 2021), 2021.

Bevan, S. L., Luckman, A., Hubbard, B., Kulessa, B., Ashmore, D., Kuipers Munneke, P., O'Leary, M., Booth, A., Sevestre, H., and McGrath, D.: Centuries of intense surface melt on Larsen C Ice Shelf, The Cryosphere, 11, 2743–2753, https://doi.org/10.5194/tc-11-2743-2017, 2017.

Bueler, E., Lingle, C. S., Kallen-Brown, J. A., Covey, D. N., and Bowman, L. N.: Exact solutions and verification of numerical models for isothermal ice sheets, J. Glaciol., 51, 291–306, 2005.

Bueler, E. and Brown, J.: Shallow shelf approximation as a “sliding law” in a thermomechanically coupled ice sheet model, J. Geophys. Res., 114, F03008, https://doi.org/10.1029/2008JF001179, 2009.

Bueler, E. and van Pelt, W.: Mass-conserving subglacial hydrology in the Parallel Ice Sheet Model version 0.6, Geosci. Model Dev., 8, 1613–1635, https://doi.org/10.5194/gmd-8-1613-2015, 2015.

Bueler, E., Lingle, C. S., Kallen-Brown, J. A., Covey, D. N., and Bowman, L. N.: Exact solutions and verification of numerical models for isothermal ice sheets, J. Glaciol., 51, 291–306, 2005.

Bueler, E., Brown, J., and Lingle, C. S.: Exact solutions to the thermomechanically coupled shallow-ice approximation: effective tools for verification, J. Glaciol., 53, 499–516, 2007.

Cornford, S. L., Martin, D. F., Graves, D. T., Ranken, D. F., Le Brocq, A. M., Gladstone, R. M., Payne, A. J., Ng, E. G., and Lipscomb, W. H.: Adaptive mesh, finite volume modeling of marine ice sheets, J. Comput. Phys., 232, 529–549, 2013.

Cuzzone, J. K., Morlighem, M., Larour, E., Schlegel, N., and Seroussi, H.: Implementation of higher-order vertical finite elements in ISSM v4.13 for improved ice sheet flow modeling over paleoclimate timescales, Geosci. Model Dev., 11, 1683–1694, https://doi.org/10.5194/gmd-11-1683-2018, 2018.

Cuzzone, J. K., Schlegel, N.-J., Morlighem, M., Larour, E., Briner, J. P., Seroussi, H., and Caron, L.: The impact of model resolution on the simulated Holocene retreat of the southwestern Greenland ice sheet using the Ice Sheet System Model (ISSM), The Cryosphere, 13, 879–893, https://doi.org/10.5194/tc-13-879-2019, 2019.

de Boer, B., Stocchi, P., and van de Wal, R. S. W.: A fully coupled 3-D ice-sheet–sea-level model: algorithm and applications, Geosci. Model Dev., 7, 2141–2156, https://doi.org/10.5194/gmd-7-2141-2014, 2014.

DeConto, R. M. and Pollard, D.: Contribution of Antarctica to past and future sea-level rise, Nature, 531, 591–597, 2016.

Depoorter, M. A., Bamber, J. L., Griggs, J. A., Lenaerts, J. T. M., Ligtenberg, S. R. M., van den Broeke, M. R., and Moholdt, G.: Calving fluxes and basal melt rates of Antarctic ice shelves, Nature Letters, 502, 89–93, 2013.

Determann, J.: Numerical modelling of ice shelf dynamics, Antarct. Sci., 3, 187–195, 1991.

Fettweis, X., Hofer, S., Krebs-Kanzow, U., Amory, C., Aoki, T., Berends, C. J., Born, A., Box, J. E., Delhasse, A., Fujita, K., Gierz, P., Goelzer, H., Hanna, E., Hashimoto, A., Huybrechts, P., Kapsch, M.-L., King, M. D., Kittel, C., Lang, C., Langen, P. L., Lenaerts, J. T. M., Liston, G. E., Lohmann, G., Mernild, S. H., Mikolajewicz, U., Modali, K., Mottram, R. H., Niwano, M., Noël, B., Ryan, J. C., Smith, A., Streffing, J., Tedesco, M., van de Berg, W. J., van den Broeke, M., van de Wal, R. S. W., van Kampenhout, L., Wilton, D., Wouters, B., Ziemen, F., and Zolles, T.: GrSMBMIP: intercomparison of the modelled 1980–2012 surface mass balance over the Greenland Ice Sheet, The Cryosphere, 14, 3935–3958, https://doi.org/10.5194/tc-14-3935-2020, 2020.